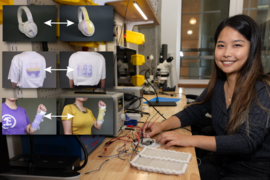

Imagine a world where you could change the designs you see on bags, shirts, and walls whenever you want. Typical clothes would become customizable fashion pieces, while your humble abode could turn into a smart home. That’s the vision of scientists like MIT electrical engineering and computer science PhD student Yunyi Zhu ’20, MEng ’21: technology that can “reprogram” the appearance of personal accessories, home decor, and office items.

At MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL), she’s created clever hardware that can add, say, artwork to a sweater, then swap in a new illustration later. To do this, she coats items with an invisible ink called photochromic dye, which transforms into different colors when exposed to intense light. Her colleagues previously built a device called “PhotoChromeleon” that used a projector to activate this ink, but the system wasn’t portable, so Zhu built the LED-based tool “PortaChrome” to reprogram lower-resolution imagery on the go.

Zhu and her team now have the best of both worlds: a portable device called “ChromoLCD” that programs clear pictures onto T-shirts, tables, and whiteboards. It looks like a small printer on the outside, but inside, ChromoLCD combines the sharpness of liquid-crystal displays (LCDs) with the precision lighting of LEDs. The collective powers of these lights help users stamp designs onto flat surfaces (like walls) and soft ones (like clothes) after they’ve been coated with photochromic dye.

ChromoLCD can embed a digital rose onto a hoodie, for example. Once you’ve painted photochromic ink onto the surface you’d like to redesign, you upload your picture to the device via Bluetooth or a USB port. You can then select and preview your design from ChromoLCD’s display menu and stamp the device onto your item. Within about 15 minutes, you’ll have a personalized piece, and if you’d like to change it, you can program a new design onto your object.

“We see ChromoLCD as a bridge between consumers and photochromic dyes,” says Zhu, who is also co-lead author on a paper presenting this work. “It’s basically a stamp, and it’s very easy to use. There are no alignment requirements, no 3D object texture creation. You just upload the image you’d like to put on your bag, place it on there, and then you’d have a personalized accessory.”

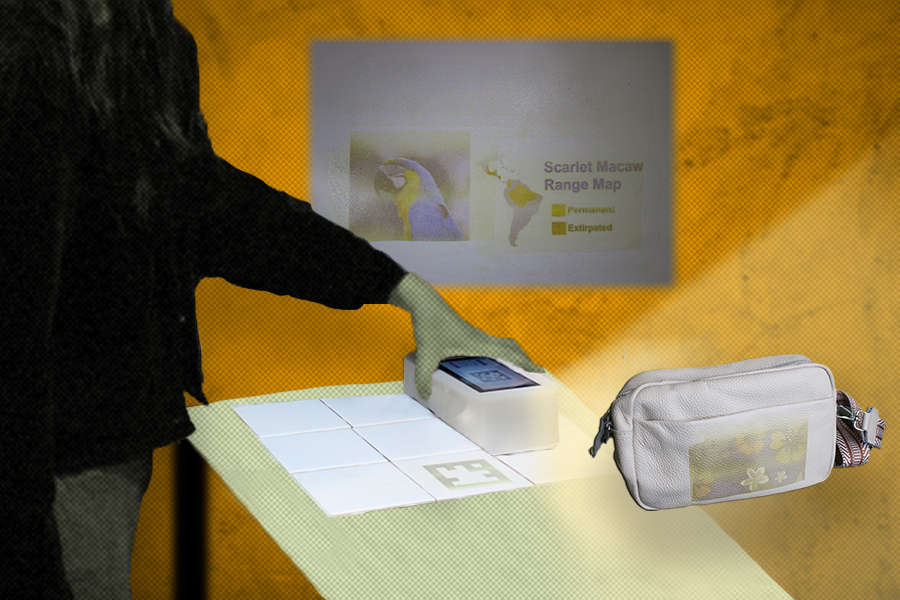

ChromoLCD showed it could add a personalized touch to accessories such as a handbag by stamping on colorful drawings of things like fish and flowers. It also embedded an augmented reality (AR) tag (much like a QR code) on a tiled kitchen counter, which linked to a cooking tutorial a user could watch while preparing a meal. The tool even reprogrammed a whiteboard to display high-resolution reference images, and could potentially turn any whiteboard into an interactive canvas that blends digital visuals with physical sketching.

Welcome to the light show

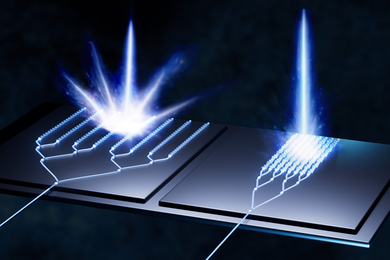

At its core, ChromoLCD is a tower of power. Its display screen sits atop a white shell, which houses a computer chip, a backlight made up of bright ultraviolet (UV) and red, green, and blue (RGB) LEDs, and an LCD panel. In other words, while ChromoLCD works its magic to customize an object, a light show takes place behind the scenes.

The system first produces a black-and-white video that outlines the brightness of particular pixels in the image you select. For example, a picture of a parrot will have some areas that are darker than others, such as the shadows cast under its wing. Then, a UV light darkens (or saturates) the dye on your object, followed by the RGB lights that brighten it up and color in each pixel. It’s kind of like when you open the shades in the morning — what starts as a blast of bright light soon becomes a more colorful visual. These lights are produced at precise frequencies that the LCD maps onto your target object.
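For readers who want to picture that sequence in code, here is a minimal sketch of how a target image might be turned into black-and-white mask frames, one batch per LED color. It is an illustration under stated assumptions, not the researchers' implementation: the function names, the `frames_per_phase` parameter, and the idea that a brighter color channel simply means a proportionally longer exposure under the matching LED are all hypothetical simplifications.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]   # (r, g, b), each 0..255
Image = List[List[Pixel]]      # rows of pixels
Mask = List[List[int]]         # 255 = LCD pixel passes light, 0 = blocks it


def exposure_frames(value: int, frames_per_phase: int) -> int:
    """Frames a pixel stays open for one LED color.

    Assumption: the dye responds linearly, so exposure scales with brightness.
    """
    return round(value / 255 * frames_per_phase)


def mask_video(image: Image, channel: int, frames_per_phase: int) -> List[Mask]:
    """Build the black-and-white frames for one LED phase (0=R, 1=G, 2=B)."""
    video = []
    for k in range(frames_per_phase):
        frame = [
            [255 if k < exposure_frames(px[channel], frames_per_phase) else 0
             for px in row]
            for row in image
        ]
        video.append(frame)
    return video


def plan(image: Image, frames_per_phase: int = 4) -> List[Tuple[str, List[Mask]]]:
    """Order the steps as described above: UV first, then R, G, and B."""
    height, width = len(image), len(image[0])
    # UV phase: every pixel open, so the whole stamped area is saturated.
    steps = [("UV", [[[255] * width for _ in range(height)]])]
    for channel, name in enumerate(("R", "G", "B")):
        steps.append((name, mask_video(image, channel, frames_per_phase)))
    return steps


if __name__ == "__main__":
    # A single pixel: full red, no green, mid-level blue.
    for name, frames in plan([[(255, 0, 128)]]):
        print(name, [frame[0][0] for frame in frames])
```

In this toy model, a pixel with more red in the target image stays open longer while the red LEDs are on, and a pixel with no green stays closed for the entire green phase. A real device would need calibrated exposure times for the specific dye and LEDs in use.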

Zhu and her colleagues note that these components are fairly easy to purchase, in case you want to make your own ChromoLCD at home. Recreating ChromoLCD could help you turn often-overlooked items into interactive displays that you can modify as you please. “A wall in your office can show your family’s pictures when you miss them, or perhaps a doormat can show a customized greeting for each of your guests,” says Zhu. “It’s sort of like turning the world into your canvas.”

What next?

Together with PortaChrome and PhotoChromeleon, ChromoLCD gives CSAIL researchers a family of systems that help us digitize our surroundings. The next step for them is to help with the creative process of deciding what to put there. Currently, you still need to upload a picture or even create a texture image for a 3D object. With the recent advancements we’ve seen from AI in texture generation, though, users could make requests with far less effort. By simply turning on your phone’s camera (or wearing an AR helmet) and pointing it at a particular object, you could ask a generative system to “turn a cup into a medieval-style tankard.” Voilà: you’d have programmed drinkware.

In the meantime, Zhu and her colleagues are bringing photochromic material to larger surfaces by developing a reprogrammer in the shape of a wall roller. Using the machine is much like painting a wall, allowing you to place larger designs onto a surface. CSAIL researchers are also exploring swiping and ironing motions, and even integrating their current technology into robots to help them communicate with humans and other machines. The machines would essentially be able to write what they’re doing onto a surface. For example, a Roomba vacuum could tell its robotic counterparts that it cleaned specific areas of a large floor by stamping a clearly displayed, high-resolution message on the ground.

Narges Pourjafarian, a postdoc at Northeastern University who wasn’t involved in the paper, says that ChromoLCD is more than a resolution upgrade over prior MIT projects. “It reframes monochromatic LCD panels as wavelength-selective fabrication tools, rather than merely display endpoints. This approach expands how we think about reprogrammable surface appearance, enabling high-resolution, reconfigurable graphics to be embedded directly into physical environments without the need for stationary projection enclosures. It opens a path toward compact, portable augmentation of garments, countertops, and shared surfaces.”

Zhu wrote the paper with six CSAIL affiliates: MIT undergraduates Qingyuan Li (who is a co-lead author), Katherine Yan, Alex Luchianov, and Eden Hen; Harvard University graduate student and former visiting researcher Emily Guan; and MIT Associate Professor Stefanie Mueller, who is a CSAIL principal investigator and senior author on the work. The researchers will present their paper at the ACM International Conference on Tangible, Embedded, and Embodied Interaction.