MIT.nano has announced the first recipients of NCSOFT seed grants to foster hardware and software innovations in gaming technology. The grants are part of the new MIT.nano Immersion Lab Gaming program, with inaugural funding provided by video game developer NCSOFT, a founding member of the MIT.nano Consortium.

The newly awarded projects address topics such as 3-D/4-D data interaction and analysis, behavioral learning, fabrication of sensors, light field manipulation, and micro-display optics.

“New technologies and new paradigms of gaming will change the way researchers conduct their work by enabling immersive visualization and multi-dimensional interaction,” says MIT.nano Associate Director Brian W. Anthony. “This year’s funded projects highlight the wide range of topics that will be enhanced and influenced by augmented and virtual reality.”

In addition to the sponsored research funds, each awardee will receive funds dedicated to building a community of collaborative users around MIT.nano’s Immersion Lab.

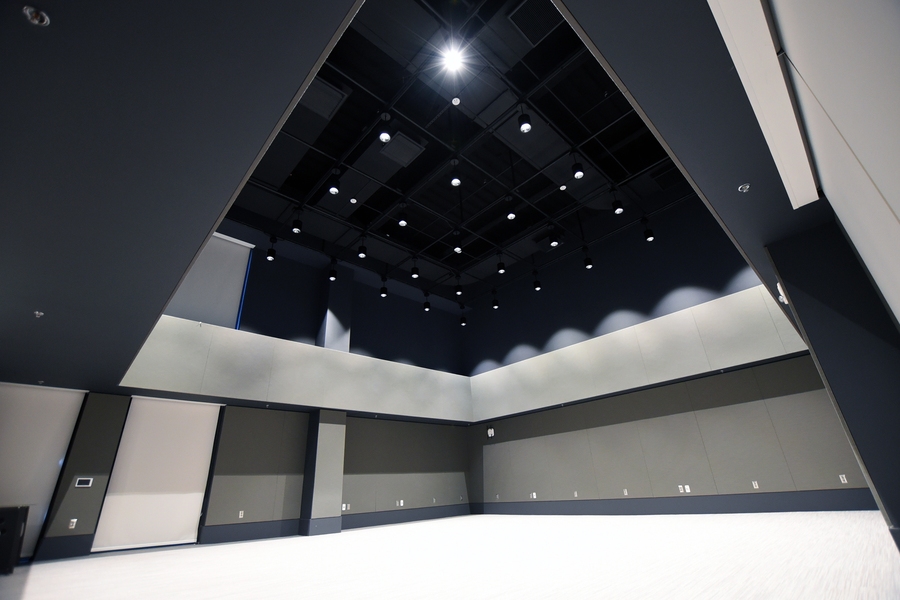

The MIT.nano Immersion Lab is a new, two-story immersive space dedicated to visualization, augmented and virtual reality (AR/VR), and the depiction and analysis of spatially related data. Currently being outfitted with equipment and software tools, the facility will be available starting this semester for use by researchers and educators interested in using and creating new experiences, including the seed grant projects.

The five projects to receive NCSOFT seed grants are:

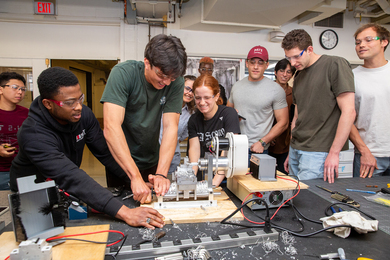

Stefanie Mueller: connecting the virtual and physical worlds

Virtual gameplay is often accompanied by a prop, such as a steering wheel, a tennis racket, or some other object the gamer uses in the physical world to trigger a reaction in the virtual game. Build-it-yourself cardboard kits have expanded access to these props by lowering costs; however, these kits are pre-cut and thus limited in form and function. What if users could build their own dynamic props that evolve as they progress through the game?

Department of Electrical Engineering and Computer Science (EECS) Professor Stefanie Mueller aims to enhance the user’s experience by developing a new type of gameplay with a tighter virtual-physical connection. In Mueller’s game, the player unlocks a physical template after completing a virtual challenge, builds a prop from this template, and then, as the game progresses, unlocks new functionalities for that same item. The prop can be expanded upon and take on new meaning, and the user learns new technical skills by building physical prototypes.

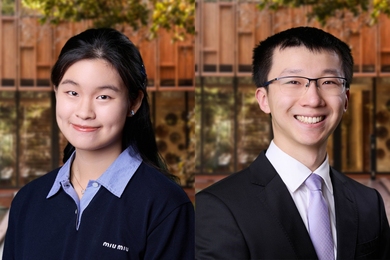

Luca Daniel and Micha Feigin-Almon: replicating human movements in virtual characters

Athletes, martial artists, and ballerinas share the ability to move their bodies in an elegant manner that efficiently converts energy and minimizes injury risk. Professor Luca Daniel, EECS and Research Laboratory of Electronics, and Micha Feigin-Almon, research scientist in mechanical engineering, seek to compare the movements of trained and untrained individuals to learn the limits of the human body, with the goal of generating elegant, realistic movement trajectories for virtual reality characters.

Beyond its use in gaming software, their research on different movement patterns could help predict stresses on joints, which could in turn lead to nervous system models for use by artists and athletes.

Wojciech Matusik: using phase-only holograms

Holographic displays are well suited to augmented and virtual reality, but several critical issues still need to be addressed. Out-of-focus objects look unnatural, and complex-valued holograms must be converted to phase-only or amplitude-only form before they can be physically realized. To tackle these issues, EECS Professor Wojciech Matusik proposes adopting machine learning techniques to synthesize phase-only holograms in an end-to-end fashion. With such a learning-based approach, the holograms could display visually appealing three-dimensional objects.

“While this system is specifically designed for varifocal, multifocal, and light field displays, we firmly believe that extending it to work with holographic displays has the greatest potential to revolutionize the future of near-eye displays and provide the best experiences for gaming,” says Matusik.

Fox Harrell: teaching socially impactful behavior

Project VISIBLE (Virtuality for Immersive Socially Impactful Behavioral Learning Enhancement) uses virtual reality in an educational setting to teach users how to recognize, cope with, and avoid committing microaggressions. In a virtual environment designed by Comparative Media Studies Professor Fox Harrell, users will first encounter micro-insults, followed by major microaggression themes. The user’s physical responses drive the narrative of the scenario, so one person can play the game multiple times and reach different conclusions, thereby learning the various implications of social behavior.

Juejun Hu: displaying a wider field of view in high resolution

Professor Juejun Hu from the Department of Materials Science and Engineering seeks to develop high-performance, ultra-thin immersive micro-displays for AR/VR applications. These displays, based on metasurface optics, will allow for a large, continuous field of view, on-demand control of optical wavefronts, high-resolution projection, and a compact, flat, lightweight engine. While current commercial waveguide AR/VR systems offer a field of view of less than 45 degrees, Hu and his team aim to design a high-quality display with a field of view close to 180 degrees.