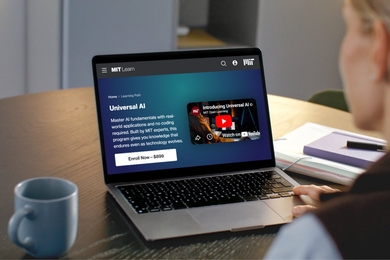

The rapid advance of artificial intelligence has generated a lot of buzz, with some predicting it will lead to an idyllic utopia and others warning it will bring the end of humanity. But speculation about where AI technology is going, while important, can also drown out conversations about how we should be handling the AI technologies available today.

One such technology is generative AI, which can create content including text, images, audio, and video. Popular generative AIs like the chatbot ChatGPT generate conversational text based on training data taken from the internet.

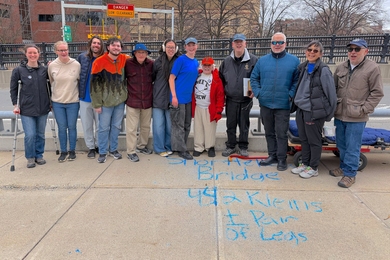

Today a group of 14 researchers from a number of organizations including MIT published a commentary article in Science that helps set the stage for discussions about generative AI’s immediate impact on creative work and society more broadly. The paper’s MIT-affiliated co-authors include Media Lab postdoc Ziv Epstein SM ’19, PhD ’23; Matt Groh SM ’19, PhD ’23; PhD students Rob Mahari ’17 and Hope Schroeder; and Professor Alex “Sandy” Pentland.

MIT News spoke with Epstein, the lead author of the paper.

Q: Why did you write this paper?

A: Generative AI tools are doing things that even a few years ago we never thought would be possible. This raises a lot of fundamental questions about the creative process and the human’s role in creative production. Are we going to get automated out of jobs? How are we going to preserve the human aspect of creativity with all of these new technologies?

The complexity of black-box AI systems can make it hard for researchers and the broader public to understand what’s happening under the hood, and what the impacts of these tools on society will be. Many discussions about AI anthropomorphize the technology, implicitly suggesting these systems exhibit human-like intent, agency, or self-awareness. Even the term “artificial intelligence” reinforces these beliefs: ChatGPT uses first-person pronouns, and we say AIs “hallucinate.” These agentic roles we give AIs can undermine the credit to creators whose labor underlies the system’s outputs, and can deflect responsibility from the developers and decision makers when the systems cause harm.

We’re trying to build coalitions across academia and beyond to help think about the interdisciplinary connections and research areas necessary to grapple with the immediate dangers to humans coming from the deployment of these tools, such as disinformation, job displacement, and changes to legal structures and culture.

Q: What do you see as the gaps in research around generative AI and art today?

A: The way we talk about AI is broken in many ways. We need to understand how perceptions of the generative process affect attitudes toward outputs and authors, and also design the interfaces and systems in a way that is really transparent about the generative process and avoids some of these misleading interpretations. How do we talk about AI and how do these narratives cut along lines of power? As we outline in the article, there are four themes around AI’s impact that are important to consider: aesthetics and culture; legal aspects of ownership and credit; labor; and the impacts on the media ecosystem. For each of those we highlight the big open questions.

With aesthetics and culture, we’re considering how past art technologies can inform how we think about AI. For example, when photography was invented, some painters said it was “the end of art.” But instead it ended up being its own medium and eventually liberated painting from realism, giving rise to Impressionism and the modern art movement. We’re saying generative AI is a medium with its own affordances. The nature of art will evolve with that. How will artists and creators express their intent and style through this new medium?

Issues around ownership and credit are tricky because we need copyright law that benefits creators, users, and society at large. Today’s copyright laws might not adequately apportion rights to artists when these systems are training on their styles. When it comes to training data, what does it mean to copy? That’s a legal question, but also a technical question. We’re trying to understand if these systems are copying, and when.

For labor economics and creative work, the idea is that these generative AI systems can accelerate the creative process in many ways, but they can also remove the ideation process that starts with a blank slate. Sometimes, there’s actually good that comes from starting with a blank page. We don’t know how it’s going to influence creativity, and we need a better understanding of how AI will affect the different stages of the creative process. We need to think carefully about how we use these tools to complement people’s work instead of replacing it.

In terms of generative AI’s effect on the media ecosystem, with the ability to produce synthetic media at scale, the risk of AI-generated misinformation must be considered. We need to safeguard the media ecosystem against the possibility of massive fraud on one hand, and people losing trust in real media on the other.

Q: How do you hope this paper is received — and by whom?

A: The conversation about AI has been very fragmented and frustrating. Because the technologies are moving so fast, it’s been hard to think deeply about these ideas. To ensure the beneficial use of these technologies, we need to build shared language and start to understand where to focus our attention. We’re hoping this paper can be a step in that direction. We’re trying to start a conversation that can help us build a roadmap toward understanding this fast-moving situation.

Artists are often at the vanguard of new technologies. They’re playing with the technology long before there are commercial applications. They’re exploring how it works, and they’re wrestling with the ethics of it. AI art has been going on for over a decade, and for just as long these artists have been grappling with the questions we now face as a society. I think it is critical to uplift the voices of the artists and other creative laborers whose jobs will be impacted by these tools. Art is how we express our humanity. It’s a core human, emotional part of life. In that way we believe it’s at the center of broader questions about AI’s impact on society, and hopefully we can ground that discussion with this paper.