It is no secret that U.S. politics is polarized. An experiment conducted by MIT researchers now shows just how deeply political partisanship directly influences people’s behavior within online social networks.

Deploying Twitter bots to help examine the online behavior of real people, the researchers found that the likelihood that individuals will follow other accounts back on Twitter triples when there appears to be a common partisan bond involved.

“When partisanship is matched, people are three times more likely to follow other accounts back,” says MIT professor David Rand, co-author of a new paper detailing the study’s results. “That’s a really big effect, and clear evidence of how important a role partisanship plays.”

The finding helps reveal how likely people are to self-select into partisan “echo chambers” online, long discussed as a basic civic problem exacerbated by social media. But methodologically, the experiment also tackles a basic challenge regarding the study of partisanship and social behavior: Do people who share partisan views associate with each other because of those views, or do they primarily associate for other reasons, with similar political preferences merely being incidental in the process?

The new experiment demonstrates the extent to which political preferences themselves can drive social connectivity.

“This pattern is not based on any preexisting relationships or any other common interests — the only thing people think they know about these accounts is that they share partisanship, and they were much more likely to want to form relationships with those accounts,” says first author Mohsen Mosleh, who is a lecturer at the University of Exeter Business School and a research affiliate at the MIT Sloan School of Management.

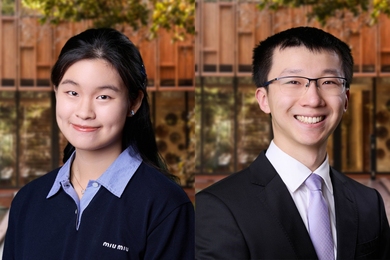

The paper, “Shared Partisanship Dramatically Increases Social Tie Formation in a Twitter Field Experiment,” appears this week in Proceedings of the National Academy of Sciences. In addition to Rand, who is the Erwin H. Schell Professor at the MIT Sloan School of Management and director of MIT Sloan’s Human Cooperation Laboratory and Applied Cooperation Team, the authors are Mosleh; Cameron Martel, a PhD student at MIT Sloan; and Dean Eckles, the Mitsubishi Career Development Professor and an associate professor of marketing at MIT Sloan.

To conduct the experiment, the researchers collected a list of Twitter users who had retweeted either MSNBC or Fox News tweets, and then examined their last 3,200 tweets to see how much information those people shared from left-leaning or right-leaning websites. From the initial list, the scholars then constructed a final roster of 842 Twitter accounts, evenly distributed across the two major parties.

At the same time, the researchers created a set of eight clearly partisan bots — fake accounts with the appearance of being politically minded individuals. The bots were split by party and varied in intensity of political expression. The researchers randomly selected one of the bots to follow each of the 842 real users on Twitter. Then they examined which real-life Twitter users followed the politically aligned bots back, and observed the distinctly partisan pattern that emerged.

Overall, the real Twitter users in the experiment would follow back about 15 percent of Twitter bots with whom they shared partisan views, regardless of the intensity of political expression in the bot accounts. By contrast, the real-life Twitter users would only follow back about 5 percent of accounts that appeared to support the opposing party.
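
The "three times more likely" figure follows directly from those two follow-back rates. As a minimal sketch of the arithmetic, using only the rounded rates reported here (the function and variable names are hypothetical, and this is not the statistical analysis used in the paper):

```python
def follow_back_ratio(co_partisan_rate: float, counter_partisan_rate: float) -> float:
    """Return how many times more likely a follow-back is when partisanship matches."""
    if counter_partisan_rate <= 0:
        raise ValueError("counter_partisan_rate must be positive")
    return co_partisan_rate / counter_partisan_rate


# Approximate, rounded rates as reported in the article (assumed inputs,
# not the study's underlying data).
CO_PARTISAN_RATE = 0.15       # follow-back rate for bots sharing the user's party
COUNTER_PARTISAN_RATE = 0.05  # follow-back rate for bots of the opposing party

ratio = follow_back_ratio(CO_PARTISAN_RATE, COUNTER_PARTISAN_RATE)
print(f"Follow-back ratio: {ratio:.1f}x")
```

With these rounded inputs the ratio comes out to roughly three, which matches the effect size Rand describes.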

Among other things, the researchers found a partisan symmetry in the user behavior they observed: People from the two major U.S. parties were equally likely to follow accounts back on the basis of partisan identification.

“There was no difference between Democrats and Republicans in this, in that Democrats were just as likely to have preferential tie formation as Republicans,” says Rand.

The bot accounts used in this experiment were not recommended by Twitter as accounts that users might want to follow, indicating that the tendency to follow other partisans happens independently of the account-recommendation algorithms that social networks use.

“What this suggests is the lack of cross-partisan relationships on social media isn’t only the consequence of algorithms,” Rand says. “There are basic psychological predispositions involved.”

At the same time, Rand notes, the findings do suggest that if social media companies want to increase cross-partisan civic interaction, they could try to engineer more of those kinds of interactions.

“If you want to foster cross-partisan social relationships, you don’t just need the friend suggestion algorithm to be neutral. You would need the friend suggestion algorithms to actively counter these psychological predispositions,” Rand says, although he also notes that whether cross-partisan social ties actually reduce political polarization is unclear based on current research.

Therefore, the behavior of social media users who form connections across party lines is an important subject for future studies and experiments, Mosleh suggests. He also points out that this experimental approach could be used to study a wide range of biases in the formation of social relationships beyond partisanship, such as race, gender, or age.

Support for the project was provided, in part, by the William and Flora Hewlett Foundation, the Reset project of Luminate, and the Ethics and Governance of Artificial Intelligence Initiative.