Imagine having to straighten up a messy kitchen, starting with a counter littered with sauce packets. If your goal is to wipe the counter clean, you might sweep up the packets as a group. If, however, you wanted to first pick out the mustard packets before throwing the rest away, you would sort more discriminately, by sauce type. And if, among the mustards, you had a hankering for Grey Poupon, finding this specific brand would entail a more careful search.

MIT engineers have developed a method that enables robots to make similarly intuitive, task-relevant decisions.

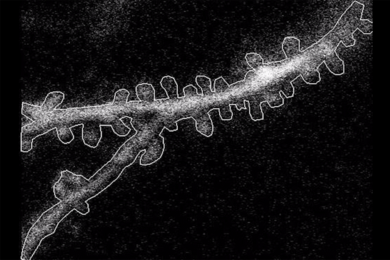

The team’s new approach, named Clio, enables a robot to identify the parts of a scene that matter, given the tasks at hand. With Clio, a robot takes in a list of tasks described in natural language and, based on those tasks, determines the level of granularity required to interpret its surroundings and “remember” only the parts of a scene that are relevant.

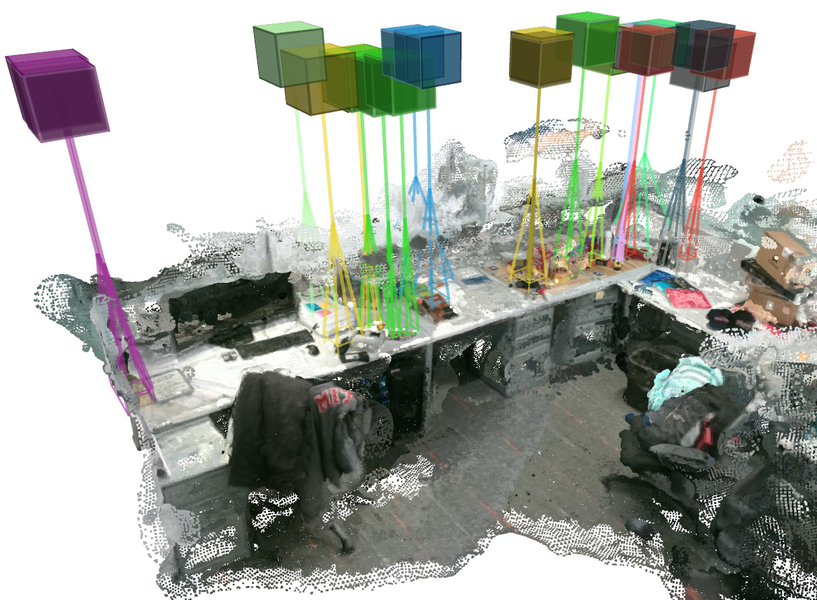

In real experiments ranging from a cluttered cubicle to a five-story building on MIT’s campus, the team used Clio to automatically segment a scene at different levels of granularity, based on a set of tasks specified in natural-language prompts such as “move rack of magazines” and “get first aid kit.”

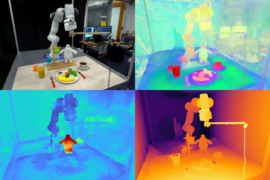

The team also ran Clio in real time on a quadruped robot. As the robot explored an office building, Clio identified and mapped only those parts of the scene that related to the robot’s tasks (such as retrieving a dog toy while ignoring piles of office supplies), allowing the robot to grasp the objects of interest.

Clio is named after the Greek muse of history, for its ability to identify and remember only the elements that matter for a given task. The researchers envision that Clio would be useful in many situations and environments in which a robot would have to quickly survey and make sense of its surroundings in the context of its given task.

“Search and rescue is the motivating application for this work, but Clio can also power domestic robots and robots working on a factory floor alongside humans,” says Luca Carlone, associate professor in MIT’s Department of Aeronautics and Astronautics (AeroAstro), principal investigator in the Laboratory for Information and Decision Systems (LIDS), and director of the MIT SPARK Laboratory. “It’s really about helping the robot understand the environment and what it has to remember in order to carry out its mission.”

The team details their results in a study appearing today in the journal Robotics and Automation Letters. Carlone’s co-authors include members of the SPARK Lab: Dominic Maggio, Yun Chang, Nathan Hughes, and Lukas Schmid; and members of MIT Lincoln Laboratory: Matthew Trang, Dan Griffith, Carlyn Dougherty, and Eric Cristofalo.

Open fields

Huge advances in the fields of computer vision and natural language processing have enabled robots to identify objects in their surroundings. But until recently, robots were only able to do so in “closed-set” scenarios, where they are programmed to work in a carefully curated and controlled environment, with a finite number of objects that the robot has been pretrained to recognize.

In recent years, researchers have taken a more “open” approach to enable robots to recognize objects in more realistic settings. In the field of open-set recognition, researchers have leveraged deep-learning tools to build neural networks that can process billions of images from the internet, along with each image’s associated text (such as a friend’s Facebook picture of a dog, captioned “Meet my new puppy!”).

From millions of image-text pairs, a neural network learns to identify the segments of a scene that are characteristic of certain terms, such as a dog. A robot can then apply that neural network to spot a dog in a totally new scene.

But a challenge remains: how to parse a scene in a way that is useful for a particular task.

“Typical methods will pick some arbitrary, fixed level of granularity for determining how to fuse segments of a scene into what you can consider as one ‘object,’” Maggio says. “However, the granularity of what you call an ‘object’ is actually related to what the robot has to do. If that granularity is fixed without considering the tasks, then the robot may end up with a map that isn’t useful for its tasks.”

Information bottleneck

With Clio, the MIT team aimed to enable robots to interpret their surroundings with a level of granularity that can be automatically tuned to the tasks at hand.

For instance, given a task of moving a stack of books to a shelf, the robot should be able to determine that the entire stack of books is the task-relevant object. Likewise, if the task were to move only the green book from the rest of the stack, the robot should distinguish the green book as a single target object and disregard the rest of the scene, including the other books in the stack.

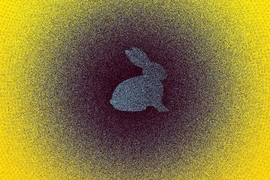

The team’s approach combines state-of-the-art computer vision and large language models comprising neural networks that make connections among millions of open-source images and semantic text. They also incorporate mapping tools that automatically split an image into many small segments, which can be fed into the neural network to determine if certain segments are semantically similar. The researchers then leverage an idea from classic information theory called the “information bottleneck,” which they use to compress a number of image segments in a way that picks out and stores segments that are semantically most relevant to a given task.
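For readers who want the underlying idea in symbols, the information bottleneck is classically written as a trade-off between compression and relevance. The objective below is the standard textbook form, not a formula quoted from the team's study. Reading it in this article's terms is an assumption: $X$ would stand for the raw image segments, $\tilde{X}$ for the compressed clusters that become "objects," and $Y$ for the list of tasks.

```latex
\min_{p(\tilde{x} \mid x)} \; I(X; \tilde{X}) \;-\; \beta \, I(\tilde{X}; Y)
```

Here $I(\cdot\,;\cdot)$ is mutual information and $\beta \ge 0$ sets how much task-relevant information to preserve relative to how aggressively the segments are compressed.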

“For example, say there is a pile of books in the scene and my task is just to get the green book. In that case we push all this information about the scene through this bottleneck and end up with a cluster of segments that represent the green book,” Maggio explains. “All the other segments that are not relevant just get grouped in a cluster which we can simply remove. And we’re left with an object at the right granularity that is needed to support my task.”
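The grouping Maggio describes can be illustrated with a toy example. The sketch below is not Clio's algorithm: it swaps the information bottleneck for a much simpler stand-in, a similarity threshold, and every name and number in it (`group_by_task`, the three-dimensional embeddings, the 0.8 threshold) is hypothetical. It assumes each segment and each task has already been turned into an embedding vector by some vision-language model.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def group_by_task(segments, tasks, threshold=0.8):
    """Assign each segment to its best-matching task, or to None if it is irrelevant.

    Assumes `tasks` is non-empty. The threshold is an illustrative value only.
    """
    clusters = {name: [] for name in tasks}
    clusters[None] = []  # catch-all cluster for task-irrelevant segments
    for seg_id, emb in segments.items():
        best_task, best_score = max(
            ((name, cosine(emb, t_emb)) for name, t_emb in tasks.items()),
            key=lambda pair: pair[1],
        )
        clusters[best_task if best_score >= threshold else None].append(seg_id)
    return clusters

# Hypothetical 3-D embeddings; a real system would obtain these from a trained model.
tasks = {"get green book": [0.1, 0.9, 0.1]}
segments = {
    "green_cover": [0.1, 0.95, 0.05],
    "green_spine": [0.2, 0.8, 0.1],
    "red_book": [0.9, 0.1, 0.1],
    "desk": [0.1, 0.1, 0.9],
}

clusters = group_by_task(segments, tasks)
# Drop the catch-all cluster, keeping only task-relevant objects.
relevant = {task: ids for task, ids in clusters.items() if task is not None}
print(relevant)
```

With these made-up vectors, the two green-book segments end up in one cluster and the red book and desk fall into the catch-all cluster, which is then dropped. This mirrors the "remove what is not relevant" step in the quote, though without the compression trade-off that the information bottleneck provides.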

The researchers demonstrated Clio in different real-world environments.

“What we thought would be a really no-nonsense experiment would be to run Clio in my apartment, where I didn’t do any cleaning beforehand,” Maggio says.

The team drew up a list of natural-language tasks, such as “move pile of clothes,” and then applied Clio to images of Maggio’s cluttered apartment. In these cases, Clio was able to quickly segment scenes of the apartment and feed the segments through the information bottleneck algorithm to identify those segments that made up the pile of clothes.

They also ran Clio on Boston Dynamics’ quadruped robot, Spot. They gave the robot a list of tasks to complete, and as the robot explored and mapped the inside of an office building, Clio ran in real time on an onboard computer mounted to Spot, picking out segments in the mapped scenes that visually relate to the given task. The method generated an overlaying map showing just the target objects, which the robot then used to approach the identified objects and physically complete the task.

“Running Clio in real-time was a big accomplishment for the team,” Maggio says. “A lot of prior work can take several hours to run.”

Going forward, the team plans to adapt Clio to be able to handle higher-level tasks and build upon recent advances in photorealistic visual scene representations.

“We’re still giving Clio tasks that are somewhat specific, like ‘find deck of cards,’” Maggio says. “For search and rescue, you need to give it more high-level tasks, like ‘find survivors,’ or ‘get power back on.’ So, we want to get to a more human-level understanding of how to accomplish more complex tasks.”

This research was supported, in part, by the U.S. National Science Foundation, the Swiss National Science Foundation, MIT Lincoln Laboratory, the U.S. Office of Naval Research, and the U.S. Army Research Lab Distributed and Collaborative Intelligent Systems and Technology Collaborative Research Alliance.