Health care is at a junction, a point where artificial intelligence tools are being introduced across every area of the field. This introduction comes with great expectations: AI has the potential to greatly improve existing technologies, sharpen personalized medicine, and, with an influx of big data, benefit historically underserved populations.

But in order to do those things, the health care community must ensure that AI tools are trustworthy, and that they don’t end up perpetuating biases that exist in the current system. Researchers at the MIT Abdul Latif Jameel Clinic for Machine Learning in Health (Jameel Clinic), an initiative to support AI research in health care, call for creating a robust infrastructure that can aid scientists and clinicians in pursuing this mission.

Fair and equitable AI for health care

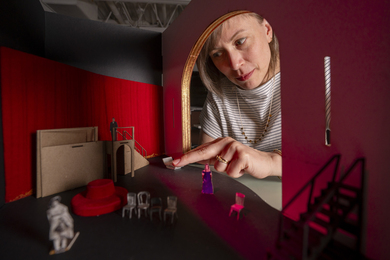

The Jameel Clinic recently hosted the AI for Health Care Equity Conference to assess current state-of-the-art work in this space, including new machine learning techniques that support fairness, personalization, and inclusiveness; identify key areas of impact in health care delivery; and discuss regulatory and policy implications.

Nearly 1,400 people virtually attended the conference to hear from thought leaders in academia, industry, and government who are working to improve health care equity and further understand the technical challenges in this space and paths forward.

During the event, Regina Barzilay, the School of Engineering Distinguished Professor of AI and Health and the AI faculty lead for Jameel Clinic, and Bilal Mateen, clinical technology lead at the Wellcome Trust, announced the Wellcome Fund grant conferred to Jameel Clinic to create a community platform supporting equitable AI tools in health care.

The project’s ultimate goal is not to solve an academic question or reach a specific research benchmark, but to actually improve the lives of patients worldwide. Researchers at Jameel Clinic insist that AI tools should not be designed with a single population in mind, but instead be crafted to be iterative and inclusive, to serve any community or subpopulation. To do this, a given AI tool needs to be studied and validated across many populations, usually in multiple cities and countries. Also on the project wish list: open access for the scientific community at large, with patient privacy honored, to democratize the effort.

“What became increasingly evident to us as a funder is that the nature of science has fundamentally changed over the last few years, and is substantially more computational by design than it ever was previously,” says Mateen.

The clinical perspective

This call to action is a response to health care in 2020. At the conference, Collin Stultz, a professor of electrical engineering and computer science and a cardiologist at Massachusetts General Hospital, spoke on how health care providers typically prescribe treatments and why these treatments are often incorrect.

In simplistic terms, a doctor collects information on their patient, then uses that information to create a treatment plan. “The decisions providers make can improve the quality of patients’ lives or make them live longer, but this does not happen in a vacuum,” says Stultz.

Instead, he says that a complex web of forces can influence how a patient receives treatment. These forces range from the hyper-specific to the universal: from factors unique to an individual patient, to bias from a provider (such as knowledge gleaned from flawed clinical trials), to broad structural problems like uneven access to care.

Datasets and algorithms

A central question of the conference revolved around how race is represented in datasets, since it’s a variable that can be fluid, self-reported, and defined in non-specific terms.

“The inequities we’re trying to address are large, striking, and persistent,” says Sharrelle Barber, an assistant professor of epidemiology and biostatistics at Drexel University. “We have to think about what that variable really is. Really, it’s a marker of structural racism,” says Barber. “It’s not biological, it’s not genetic. We’ve been saying that over and over again.”

Some aspects of health are purely determined by biology, such as hereditary conditions like cystic fibrosis, but the majority of conditions are not straightforward. According to Massachusetts General Hospital oncologist T. Salewa Oseni, when it comes to patient health and outcomes, research tends to assume biological factors have outsized influence, but socioeconomic factors should be considered just as seriously.

Even as machine learning researchers detect preexisting biases in the health care system, they must also address weaknesses in algorithms themselves, as highlighted by a series of speakers at the conference. They must grapple with important questions that arise in all stages of development, from the initial framing of what the technology is trying to solve to overseeing deployment in the real world.

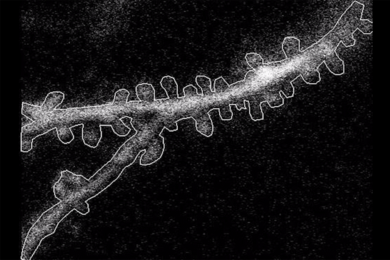

Irene Chen, a PhD student at MIT studying machine learning, examines all steps of the development pipeline through the lens of ethics. As a first-year doctoral student, Chen was alarmed to find an “out-of-the-box” algorithm, which happened to project patient mortality, churning out significantly different predictions based on race. This kind of algorithm can have real impacts, too; it guides how hospitals allocate resources to patients.
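A disparity like the one Chen noticed can be surfaced with a simple per-group summary of a model's output. The sketch below is a minimal illustration, not the analysis Chen ran: the function name `audit_by_group`, the group labels, and the toy records are all hypothetical. It compares the mean predicted risk with the observed event rate in each group.

```python
from collections import defaultdict

def audit_by_group(records):
    """Summarize predictions per group.

    Each record is a (group, outcome, predicted_risk) tuple, where outcome is
    0 or 1 and predicted_risk is the model's probability of the outcome.
    """
    buckets = defaultdict(list)
    for group, outcome, risk in records:
        buckets[group].append((outcome, risk))

    summary = {}
    for group, rows in buckets.items():
        n = len(rows)
        mean_risk = sum(risk for _, risk in rows) / n
        observed = sum(outcome for outcome, _ in rows) / n
        summary[group] = {
            "n": n,
            "mean_predicted_risk": mean_risk,
            "observed_rate": observed,
            # Negative gap: the model underestimates risk for this group.
            "gap": mean_risk - observed,
        }
    return summary

# Hypothetical toy data, invented purely for illustration.
records = [
    ("group_a", 1, 0.6), ("group_a", 0, 0.2), ("group_a", 0, 0.3), ("group_a", 1, 0.5),
    ("group_b", 1, 0.3), ("group_b", 1, 0.2), ("group_b", 0, 0.1), ("group_b", 1, 0.2),
]
summary = audit_by_group(records)
for group, stats in sorted(summary.items()):
    print(group, stats)
```

In this toy data the model underestimates risk for both groups, but far more for `group_b`. If such scores guided resource allocation, that group would be systematically under-served. A real audit would also need confidence intervals and much larger samples.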

Chen set about understanding why this algorithm produced such uneven results. In later work, she defined three specific sources of bias that could be disentangled from any model. The first is “bias,” but in a statistical sense: the model may not be a good fit for the research question. The second is variance, which is controlled by sample size. The last source is noise, which cannot be reduced by tweaking the model or increasing the sample size. Instead, it indicates that something went wrong during the data collection process, a step well before model development. Many systemic inequities, such as limited health insurance or a historic mistrust of medicine in certain groups, get “rolled up” into noise.

“Once you identify which component it is, you can propose a fix,” says Chen.
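The three components can be made concrete with a small simulation. The sketch below is an illustration under stated assumptions, not Chen's method: it assumes squared-error loss, a made-up ground-truth signal (`true_signal`), and synthetic data, so the noise level is known only because it is simulated. It refits a polynomial model on many fresh samples and measures how each term responds to model choice, sample size, and data quality.

```python
import numpy as np

def true_signal(x):
    # Hypothetical ground truth; in real data this is unknown.
    return np.sin(2.0 * x)

def decompose_error(n_train, noise_sd, degree=1, n_trials=300, seed=0):
    """Estimate squared bias, variance, and noise for a polynomial fit."""
    rng = np.random.default_rng(seed)
    x_test = np.linspace(0.0, 3.0, 50)
    f_test = true_signal(x_test)
    preds = np.empty((n_trials, x_test.size))
    for t in range(n_trials):
        # Draw a fresh training set and refit the model each trial.
        x = rng.uniform(0.0, 3.0, n_train)
        y = true_signal(x) + rng.normal(0.0, noise_sd, n_train)
        coefs = np.polyfit(x, y, degree)
        preds[t] = np.polyval(coefs, x_test)
    mean_pred = preds.mean(axis=0)
    return {
        # Systematic gap between the average fitted model and the truth.
        "bias_sq": float(np.mean((mean_pred - f_test) ** 2)),
        # Spread of the fitted models across training sets.
        "variance": float(np.mean(preds.var(axis=0))),
        # Irreducible label noise; known here only because we simulate it.
        "noise": noise_sd ** 2,
    }

baseline = decompose_error(n_train=200, noise_sd=0.3)            # linear model
small = decompose_error(n_train=20, noise_sd=0.3)                # fewer patients
flexible = decompose_error(n_train=200, noise_sd=0.3, degree=5)  # better-fitting model
noisy = decompose_error(n_train=200, noise_sd=0.9)               # worse data collection

for name, result in [("baseline", baseline), ("small", small),
                     ("flexible", flexible), ("noisy", noisy)]:
    print(name, result)
```

Each scenario moves a different term. Shrinking the sample raises variance, and a more flexible model lowers bias. Noisier labels raise a term that neither a better model nor a larger sample can remove. That is why identifying the dominant component points to the fix: change the model, collect more data, or collect better data.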

Marzyeh Ghassemi, an assistant professor at the University of Toronto and an incoming professor at MIT, has studied the trade-off between anonymizing highly personal health data and ensuring that all patients are fairly represented. With techniques like differential privacy, a machine-learning tool that guarantees the same level of privacy for every data point, individuals who are too “unique” in their cohort start to lose predictive influence in the model. In health data, where trials often underrepresent certain populations, “minorities are the ones that look unique,” says Ghassemi.
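One way to see why “unique” individuals lose influence is to look at a common recipe for differentially private training: clip each example's gradient to a fixed norm, then add noise to the sum. The article does not say which mechanism Ghassemi studied, so the sketch below is an assumption-laden illustration. The function `private_gradient`, its parameters, and the synthetic gradients are hypothetical, and no formal privacy budget is computed.

```python
import numpy as np

def private_gradient(per_example_grads, clip_norm, noise_multiplier, rng):
    """One noisy, clipped gradient aggregation step (DP-SGD style).

    Returns the noisy mean gradient and the clipped per-example gradients.
    """
    norms = np.linalg.norm(per_example_grads, axis=1, keepdims=True)
    # Scale down any gradient whose L2 norm exceeds clip_norm; leave others as is.
    scale = np.minimum(1.0, clip_norm / np.maximum(norms, 1e-12))
    clipped = per_example_grads * scale
    # Gaussian noise calibrated to the clipping bound.
    noise = rng.normal(0.0, noise_multiplier * clip_norm,
                       size=per_example_grads.shape[1])
    noisy_mean = (clipped.sum(axis=0) + noise) / len(per_example_grads)
    return noisy_mean, clipped

rng = np.random.default_rng(0)
# Hypothetical batch: 99 typical patients with small gradients and one
# atypical patient whose gradient is large because the model fits them poorly.
typical = rng.normal(0.0, 0.1, size=(99, 2))
atypical = np.array([[6.0, 8.0]])
grads = np.vstack([typical, atypical])

noisy_mean, clipped = private_gradient(grads, clip_norm=1.0,
                                       noise_multiplier=1.0, rng=rng)
raw_norm = float(np.linalg.norm(grads[-1]))
clipped_norm = float(np.linalg.norm(clipped[-1]))
print("atypical gradient norm before clipping:", raw_norm)
print("atypical gradient norm after clipping:", clipped_norm)
```

The typical gradients pass through unchanged, while the atypical patient's gradient is cut to a tenth of its size before noise is added. The model therefore learns proportionally less from exactly the patients it fits worst, which is consistent with the trade-off Ghassemi describes.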

“We need to create more data, it needs to be diverse data,” she says. “These robust, private, fair, high-quality algorithms we're trying to train require large-scale data sets for research use.”

Beyond Jameel Clinic, other organizations are recognizing the power of harnessing diverse data to create more equitable health care. Anthony Philippakis, chief data officer at the Broad Institute of MIT and Harvard, presented on the All of Us research program, an unprecedented project from the National Institutes of Health that aims to bridge the gap for historically under-recognized populations by collecting observational and longitudinal health data on over 1 million Americans. The database is meant to uncover how diseases present across different subpopulations.

One of the largest questions of the conference, and of AI in general, revolves around policy. Kadija Ferryman, a cultural anthropologist and bioethicist at New York University, points out that AI regulation is in its infancy, which can be a good thing. “There’s a lot of opportunities for policy to be created with these ideas around fairness and justice, as opposed to having policies that have been developed, and then working to try to undo some of the policy regulations,” says Ferryman.

Even before policy comes into play, there are certain best practices for developers to keep in mind. Najat Khan, chief data science officer at Janssen R&D, encourages researchers to be “extremely systematic and thorough up front” when choosing datasets and algorithms; a detailed feasibility assessment of data source, types, missingness, diversity, and other considerations is key. Even large, common datasets contain inherent bias.
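An up-front check of this kind can start very simply. The sketch below is a hypothetical illustration rather than Janssen's process: the function `dataset_report`, the field names, and the toy rows are invented. It reports each cohort's share of the dataset and the fraction of missing values per field within that cohort.

```python
def dataset_report(rows, group_key, fields):
    """Per-group share of the dataset and missing-value rate for each field."""
    total = len(rows)
    groups = {}
    for row in rows:
        groups.setdefault(row[group_key], []).append(row)

    report = {}
    for group, members in groups.items():
        missing = {
            field: sum(1 for m in members if m.get(field) is None) / len(members)
            for field in fields
        }
        report[group] = {"share": len(members) / total, "missing": missing}
    return report

# Hypothetical toy records; None marks a missing value.
rows = [
    {"cohort": "A", "lab_value": 1.2, "insurance": "private"},
    {"cohort": "A", "lab_value": 0.9, "insurance": "public"},
    {"cohort": "A", "lab_value": None, "insurance": "private"},
    {"cohort": "A", "lab_value": 1.1, "insurance": "private"},
    {"cohort": "B", "lab_value": None, "insurance": None},
]
report = dataset_report(rows, group_key="cohort", fields=["lab_value", "insurance"])
for cohort, stats in sorted(report.items()):
    print(cohort, stats)
```

Here cohort B makes up a small share of the data and is missing every measured field. Both problems are invisible in dataset-wide averages and only appear once the report is broken out by group.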

Even more fundamental is opening the door to a diverse group of future researchers.

“We have to ensure that we are developing and investing back in data science talent that are diverse in both their backgrounds and experiences and ensuring they have opportunities to work on really important problems for patients that they care about,” says Khan. “If we do this right, you’ll see ... and we are already starting to see ... a fundamental shift in the talent that we have — a more bilingual, diverse talent pool.”

The AI for Health Care Equity Conference was co-organized by MIT’s Jameel Clinic; Department of Electrical Engineering and Computer Science; Institute for Data, Systems, and Society; Institute for Medical Engineering and Science; and the MIT Schwarzman College of Computing.