Sophie Gibert is a PhD candidate in philosophy and assistant director of 24.133 (Experiential Ethics), an MIT class in which students explore ethical questions related to their internships, research, or other experiential learning activities. Gibert, who also serves as a graduate teaching fellow for Embedded EthiCS at Harvard University, which focuses on ethics for computer scientists, was formerly a predoctoral fellow in the Clinical Center Department of Bioethics at the National Institutes of Health (NIH). At MIT, her research centers on ethics, including bioethics, and the philosophy of action. Gibert recently spoke about applying the tools of philosophy to ethical questions.

Q: There are two consistent threads running through your academic career: health care and philosophy. What ties these fields together for you, and what drew you to focus on the latter for your graduate degree?

A: What brings them together for me is my research area: ethics. I’m particularly interested in the ethics of influencing other people, because in health care, whether you are a clinician, a policymaker, or a clinical researcher, there is always the issue of paternalism (i.e., substituting your own judgment about what’s best for someone else’s). Many health outcomes are influenced by behavior, so questions naturally arise about how far one should go to help people lead healthier lives.

For example, is it manipulative to highlight calorie information on sweets in an effort to prevent obesity? The calorie label is not forcing anybody to make a particular choice, but we think we know that it will lead people toward healthier options. Is that kind of “nudge policy” ethical?

What if the labels are targeted toward diabetics? Scientists have found some correlation between the health outcomes of patients with chronic illnesses like diabetes and the degree to which patients feel they are in control of their choices. Yet, if you make them feel they are in control, that could open them to stigma and blame even though many behaviors are in fact shaped by environment. So, what is the right thing to do?

It’s the job of the clinician or public health policy person to try to improve health, but there will always be choices to make about how to do that. Philosophy provides a path to conceptual clarity in such matters by asking, for example, “What does it mean to be forced to make a choice?” and “When is a choice really yours?”

These are the kinds of questions that led me to my dissertation research, which focuses on the question: Why is manipulation wrong? My view counters the commonly held opinion that it has to do with autonomy (that is, the capacity to act for your own reasons). In contrast, I think the core issue relates to what kind of actor the manipulator is and whether it is legitimate for that person to be interfering. For example, while it is legitimate for a woman’s obstetrician to outline the risks of an abortion, it is not legitimate for the same obstetrician to accost a pregnant woman on the street with the same information.

Q: What issues most concern you in the field of bioethics, and what strategies might you suggest for addressing the ethical challenges you see?

A: Many important bioethics issues center on what is OK when doing human subjects research. These concerns were particularly salient when I worked at NIH, because we were located in a hospital where everyone was participating in a clinical trial. Naturally, the researchers there focused a lot on questions of informed consent and whether paternalism is ethical. That’s where I found myself gravitating to philosophy projects having to do with the way we find ethical truth.

In this context, it’s important to remember that human subjects research was pretty unregulated until the 20th century, and there were some notorious examples of mistreatment, such as the Tuskegee experiment, in which government scientists deliberately allowed Black men to die of syphilis even after treatment became available. This led to a period of extreme protection for patients, a trend that swung back a bit in the ’80s, when AIDS patients were demanding the right to participate in research.

Philosophy helps us address bioethical challenges by reasoning out the issues. For example, if you are interfering with someone to prevent harm or give benefit, it matters if there is good reason to believe that the person did not know all the relevant facts. This would be the case, for example, if you gave a person a shove to prevent him from crossing an unstable bridge because you believed he did not know the risks.

In biomedical research, if you have reason to believe people don’t understand the risks of a treatment, you might think it’s OK to interfere with them, perhaps by preventing patients from taking unproven medication. But there is an assumption built in here that ordinary people are not as capable of decision-making as the medical experts. Sometimes that might be true, but I think the medical community errs too much on the side of paternalism. After all, in a lot of contexts outside of medicine, we let people make risky decisions for themselves, such as climbing Mount Everest. In medicine, we don’t always allow patients the same agency.

Q: You are assistant director of the 24.133 (Experiential Ethics) class at MIT and serve as a graduate teaching fellow for Embedded EthiCS at Harvard, which focuses on ethics for computer scientists. What lessons do you hope to instill in students taking these classes?

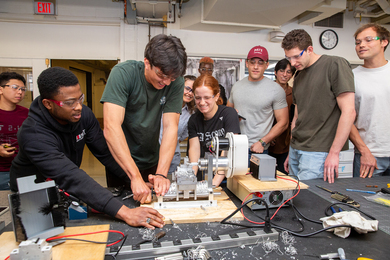

A: We try to show students that they are already doing ethics — because ethics is just the study of what to do. In the class, we provide students with some decision-making frameworks to help them with their choices. For example, we encourage students to look for the values that are inherent in each particular technological product and not always just assume that more technology is better. We have them explore the difference between empirical statements, which say how things are, and normative claims, which say how they ought to be. Then, we have the students practice on topics that are relevant to their experience.

This past summer, one student who took 24.133 had gone through a hiring process involving a robotic interview. So, he explored whether robotic interviewing was more or less biased than human interviewing. Others in the class investigated algorithmic bias, divestment from fossil fuels, and even the ethics of pursuing a career in investment banking.

Throughout the class, students meet in small groups and discuss these kinds of issues, and our hope is that they develop the kinds of thought habits that can make their decision-making processes more rigorous.

Story prepared by MIT SHASS Communications

Emily Hiestand, editorial and design director

Kathryn O'Neill, senior writer