Designing safer, more efficient nuclear reactors requires simulating their performance in very fine detail. But because the nuclear reactions taking place in reactor cores are so complex, such simulations can strain the capabilities of even the most advanced supercomputer systems.

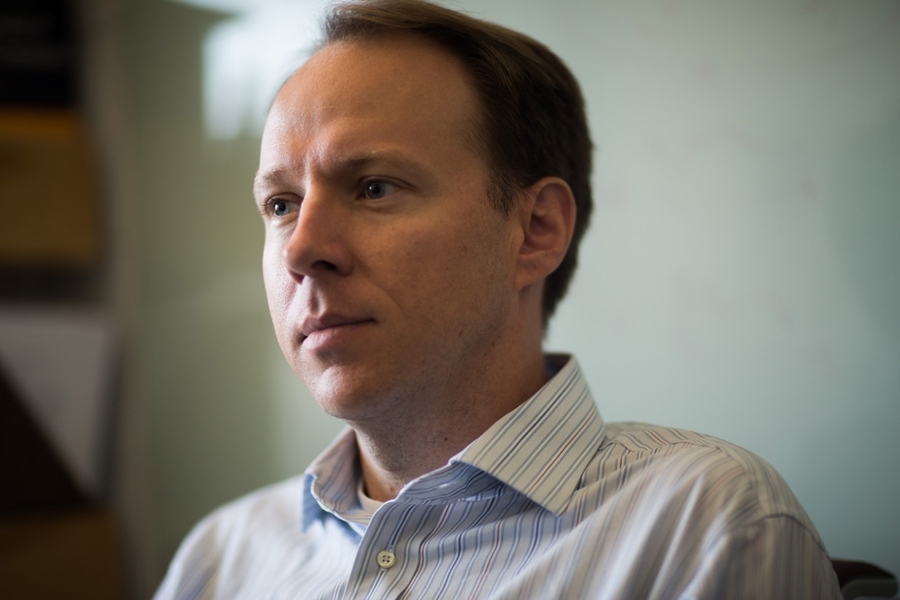

That’s a challenge that Benoit Forget has been tackling throughout his research career: how to provide efficient, high-fidelity simulations on modern computing architectures, and thus enable the development of the next generation of reactors.

Addressing that challenge has earned Forget tenure in MIT’s Department of Nuclear Science and Engineering, where he is now an associate professor.

Forget grew up in the small town of Temiscaming in the province of Quebec, Canada. His father was the principal of the local high school and his mother was a teacher there. “My mom was my French, history, and geography teacher,” he recalls. His graduating class had about 20 students.

“Science was always second nature to me since I was a kid,” he says. “I spent a lot of time just tinkering around, breaking stuff apart and building it back up.” He also had a very supportive high school science teacher, he says, who encouraged him to explore.

He began studying engineering at the École Polytechnique de Montréal, where he earned a BS in chemical engineering and an MS in energy engineering. He also did an internship at Hydro-Quebec, the local utility, which had a single nuclear power plant at the time.

It was during those first few years in Montréal that Forget developed his interest in nuclear engineering. “That’s when I had my first modern physics class and studied quantum mechanics, and that’s when I got hooked and wanted to study nuclear engineering. … I had a very good professor who introduced us to some of these concepts,” he says. From that one initial class, Forget made the decision to pursue this area of study.

“The [relatively small] amount of energy in a chemical reaction compared to the energy in one fission event is quite remarkable,” he says. “If you want to produce a lot of power quickly with little fuel or waste, this is the way to do it. So that made it my career choice.”
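The scale of the difference Forget describes can be checked with rough arithmetic. The sketch below is illustrative only: it assumes about 200 MeV released per uranium-235 fission and uses the combustion of carbon (about 393.5 kJ per mole of CO2 formed) as a stand-in for a typical chemical reaction. Both figures are approximate textbook values chosen for this example, not numbers from Forget.

```python
# Rough comparison of energy per event: one fission vs. one chemical reaction.
# Assumed inputs (approximate textbook values, chosen for illustration):
FISSION_ENERGY_EV = 200e6           # ~200 MeV per U-235 fission
COMBUSTION_J_PER_MOL = 393.5e3      # C + O2 -> CO2, ~393.5 kJ/mol

AVOGADRO = 6.02214076e23            # molecules per mole
JOULES_PER_EV = 1.602176634e-19     # joules per electronvolt


def chemical_energy_ev():
    """Energy released per CO2 molecule formed, in electronvolts."""
    joules_per_molecule = COMBUSTION_J_PER_MOL / AVOGADRO
    return joules_per_molecule / JOULES_PER_EV


def fission_to_chemical_ratio():
    """How many times more energy one fission releases than one reaction."""
    return FISSION_ENERGY_EV / chemical_energy_ev()


if __name__ == "__main__":
    print(f"Chemical: {chemical_energy_ev():.2f} eV per reaction")
    print(f"Ratio:    {fission_to_chemical_ratio():.2e}")
```

Under these assumptions a single fission event releases tens of millions of times more energy than a single combustion reaction, which is why so little fuel is needed.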

Forget moved to the United States to work on his PhD in nuclear engineering at Georgia Tech. He received the degree in 2006 and then spent a year and a half working at Idaho National Laboratory, before accepting an appointment at MIT. His wife, an aerospace engineer who received her doctorate a week prior to his, took a job at the MITRE Corporation, allowing them to begin their jobs in the Boston area at the same time. The couple now has a three-year-old son, Thomas, who “keeps us very busy,” Forget says.

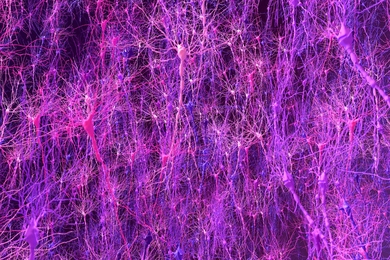

Since arriving at MIT, Forget has concentrated on streamlining the complex software needed to simulate the vast numbers of random interactions that take place inside a nuclear reactor core, with the aim of guiding the development of new generations of improved reactor designs.

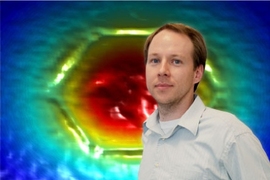

His team, the MIT Computational Reactor Physics Group, consists of two faculty members (Forget and Kord Smith, the KEPCO Professor of Nuclear Science and Engineering), 15 to 20 graduate students, and five to 10 undergraduates. They “focus on modeling and simulation of the nuclear reactor itself — the physics that describes what goes on inside a nuclear reactor, how heat is being generated, where it’s being deposited, and how we extract that heat from the system,” Forget says.

“We focus primarily on the neutron and photon transport in the core, which is essentially the source of the fission reaction — we want to know precisely how much power is being produced where, so we can stay below the temperature limits, the material limits, and control everything else that goes on inside the reactor.” But even using the most efficient, streamlined computer code, simulating the operation of a whole plant for just one instant in time can take 100,000 CPU-hours, he says.
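To put the 100,000 CPU-hour figure in perspective, the short sketch below converts it to wall-clock time on machines of different sizes. It assumes ideal parallel scaling (doubling the cores halves the run time), which real codes only approximate, and the core counts are hypothetical examples rather than systems named in the article.

```python
def wall_clock_hours(cpu_hours, n_cores):
    """Wall-clock time for a job, assuming perfect parallel scaling."""
    if n_cores <= 0:
        raise ValueError("n_cores must be positive")
    return cpu_hours / n_cores


if __name__ == "__main__":
    total_cpu_hours = 100_000  # figure quoted in the article
    # Hypothetical machine sizes, for illustration only.
    for cores in (1, 1_000, 10_000, 100_000):
        hours = wall_clock_hours(total_cpu_hours, cores)
        print(f"{cores:>7} cores: {hours:,.1f} hours")
```

On a single core the job would run for more than a decade, which is why this kind of simulation is only practical on massively parallel machines.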

Currently, there are a lot of computer models, developed over the last half-century, that simulate the present generation of nuclear reactors. “These codes perform very well for the current generation,” he says. “But in the near future, there’s a lot of interest in looking at advanced reactors, new concepts, new designs, new materials — all designed to have more inherent safety, better economics, better fuel utilization. All of these cannot necessarily rely on the methods of the past. We’re going to need some more advanced methodologies.”

Since it’s impractical to build test reactors for every new concept, “we rely much more on high-fidelity modeling and simulation,” he says. “We’re still going to need experiments, but we want to design better experiments, so that they provide better information at lesser cost.” That’s where his team’s expertise comes into play. Among other projects, the researchers have developed two large pieces of software, called OpenMC and OpenMOC, which are both open-source packages available to anyone.

One of these code packages, OpenMC, is based on Monte Carlo simulation, a statistical technique for modeling complex systems in which random events play a significant role: The code follows vast numbers of randomly sampled particle histories and averages the results. The system Forget and his team have developed uses new approaches made possible by massively parallel computing and distributed computation. “Now we end up with a new paradigm for modern architectures in hardware, where we can do a lot of calculations directly” that used to require huge lookup tables of precomputed data, he says. As a result, the team can capture the details of the physics more precisely while actually streamlining the computations.
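The Monte Carlo idea can be illustrated with a toy problem far simpler than anything OpenMC handles. The sketch below is not OpenMC code and does not use its API. It assumes a one-dimensional slab that only absorbs neutrons, with a made-up cross section, and estimates the fraction of neutrons that pass through without interacting. The function name and parameters are hypothetical.

```python
import math
import random


def estimate_transmission(sigma_t, thickness, n_particles, seed=0):
    """Estimate the fraction of neutrons crossing an absorbing slab.

    sigma_t:    macroscopic cross section (1/cm), assumed constant
    thickness:  slab thickness (cm)
    Each neutron enters perpendicular to the slab; its distance to first
    collision is sampled from an exponential distribution.
    """
    rng = random.Random(seed)
    transmitted = 0
    for _ in range(n_particles):
        # 1 - random() lies in (0, 1], so the logarithm is always defined.
        distance = -math.log(1.0 - rng.random()) / sigma_t
        if distance > thickness:
            transmitted += 1
    return transmitted / n_particles


if __name__ == "__main__":
    sigma_t, thickness = 1.0, 2.0  # illustrative values only
    estimate = estimate_transmission(sigma_t, thickness, 100_000)
    exact = math.exp(-sigma_t * thickness)  # analytic answer for this toy case
    print(f"Monte Carlo: {estimate:.4f}, analytic: {exact:.4f}")
```

For this toy case the exact answer is known, so the estimate can be checked directly. Because each particle history is independent, the loop can be split across many processors, which is what makes the method a natural fit for massively parallel machines.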

“We kind of bridge a gap between fundamental physics and high-performance computing. We dig a little bit deeper, and try to reformulate and use more fundamental physical representations,” he says. As the team develops the models, he hopes to simulate both long-term and short-term transients in addition to the current steady-state capabilities. Ultimately, “the goal is to be able to simulate a whole reactor, over its full lifetime, with as much detail as possible, for all possible operating conditions,” he says.