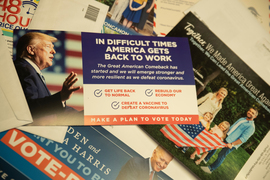

Recent U.S. elections have raised the question of whether “microtargeting,” the use of extensive online data to tailor persuasive messages to voters, has altered the playing field of politics.

Now, a newly published study led by MIT scholars finds that while targeting is effective in some political contexts, the “micro” part of things may not be the game-changing tool some have assumed.

“In a traditional messaging context where you have one issue you’re trying to convince people on, we found that targeting did have a substantial persuasive advantage,” says David Rand, an MIT professor and co-author of the study.

Indeed, the study found that tailoring political ads based on one attribute of their intended audience — say, party affiliation — can be 70 percent more effective in swaying policy support than simply showing everyone the single ad that is expected to be most persuasive across the entire population. But targeting political ads using multiple attributes — for instance, ideology, age, and moral values — did not add any further benefit, in the study.

“We didn’t find much evidence that microtargeting works,” says Rand, who is the Erwin H. Schell Professor at the MIT Sloan School of Management. “We found we got just as much persuasive advantage from targeting based on just one attribute as we did targeting on more attributes.”

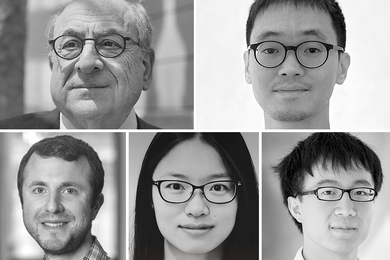

The paper, “Quantifying the Potential Persuasive Returns to Political Microtargeting,” is published in Proceedings of the National Academy of Sciences. The authors are Ben Tappin, a postdoc at the University of London and a research affiliate at MIT’s Applied Cooperation Team; Chloe Wittenberg, a doctoral candidate in MIT’s Department of Political Science; Luke Hewitt PhD ’22, a visiting scholar at the Stanford Center on Philanthropy and Civil Society; Adam Berinsky, the Mitsui Professor of Political Science at MIT; and Rand, who is also a professor of brain and cognitive sciences at MIT and director of the Applied Cooperation Team.

Road-testing messages

Political microtargeting became the subject of extended attention after the 2016 U.S. elections, when it became widely known that the firm Cambridge Analytica had used data from Facebook to craft highly targeted messages to voters. What scholars have found less clear since then is: Did those ads work?

To assess this question, the researchers ran sets of survey experiments in 2022, using the Lucid online survey platform. In the first phase, with over 23,000 participants, the researchers created advertisements for two issue-based campaigns: one focused on the U.S. Citizenship Act of 2021, and the second focused on universal basic income. Participants were randomly assigned either to a control group, which received only basic information about the relevant policy, or to one of many treatment groups, each of which watched one video ad aimed at swaying opinions about that policy. This approach parallels the real campaign practice of trying out many types of ads and then seeing which is the most effective.

In the second phase of the study, the researchers then simulated multiple campaign strategies. Over 5,000 participants were again assigned to either a control group — which received the same basic issue information as in the first phase — or one of three treatment groups. The members of these treatment groups saw either the best-performing message from the first phase of the study; an ad selected at random; or a “targeted” ad selected based on a machine learning model trained on data from the first phase of the study. To evaluate the added benefit of microtargeting, the researchers also varied the complexity of the targeting process used to match ads to participants — targeting these participants using between one and four personal characteristics.

Through this multi-stage design, the researchers were able to evaluate the effectiveness of a targeting strategy in comparison to multiple other widely used campaign ad tactics.

Ultimately, the targeting strategy performed better than these other tactics, but the microtargeted ads based on multiple voter characteristics were not more effective than those based on only one characteristic.

The researchers hope their findings will help inform ongoing debates about microtargeting and U.S. political campaigns.

“There has been a lot of speculation about the promises and perils of microtargeting for the functioning of our democratic system,” Berinsky says. “Our study allows us to evaluate in a rigorous way the potential impact of political microtargeting in the real world.”

One ad does not fit all

Rand emphasizes that the study results occupy a middle ground; microtargeting is probably not the seemingly overpowering force that people fear it to be, but targeted political ads still have an advantage much of the time.

“In terms of the implications for political advertising, it certainly seems like targeting is often going to be a good idea, and if you’re not doing that, you may be leaving persuasive power on the table,” Rand says. “At the same time, it’s clearly not mind control.”

Microtargeting in politics also works differently than it does for business advertising, Rand suggests, since it is hard to generate the data needed to train the political targeting models and distribute the resulting ads.

“If Facebook is doing microtargeting based on selling widgets, it’s able to get good feedback on whether you bought the widgets or not, and then use that data to continually optimize the targeting,” Rand says. In politics, it is much harder to obtain reliable information about voter attitudes and voting decisions — making it very difficult to effectively microtarget political ads at scale.

Although their results suggest that targeting political ads may be effective in some contexts, the researchers also note several reasons why targeting may work differently — and perhaps less effectively — outside the experimental context they developed. For one, the benefits to microtargeting varied substantially across the two main policies under study. Those benefits were even smaller in the second experiment, focused on an alternative, albeit less common, campaign situation.

As Wittenberg suggests, “It’s important to understand not just whether microtargeting is effective, but also when.”

Other work by Rand and Berinsky has been funded by Google and Meta.