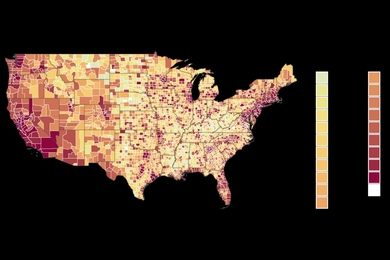

There’s no human instinct more basic than speech, and yet, for many people, talking can be taxing. One in 14 working-age Americans suffer from voice disorders that are often associated with abnormal vocal behaviors — some of which can cause damage to vocal cord tissue and lead to the formation of nodules or polyps that interfere with normal speech production.

Unfortunately, many behaviorally-based voice disorders are not well understood. In particular, patients with muscle tension dysphonia (MTD) often experience deteriorating voice quality and vocal fatigue (“tired voice”) in the absence of any clear vocal cord damage or other medical problems, which makes the condition both hard to diagnose and hard to treat.

But a team from MIT's Computer Science and Artificial Intelligence Laboratory (CSAIL) and Massachusetts General Hospital (MGH) believes that better understanding of conditions like MTD is possible through machine learning.

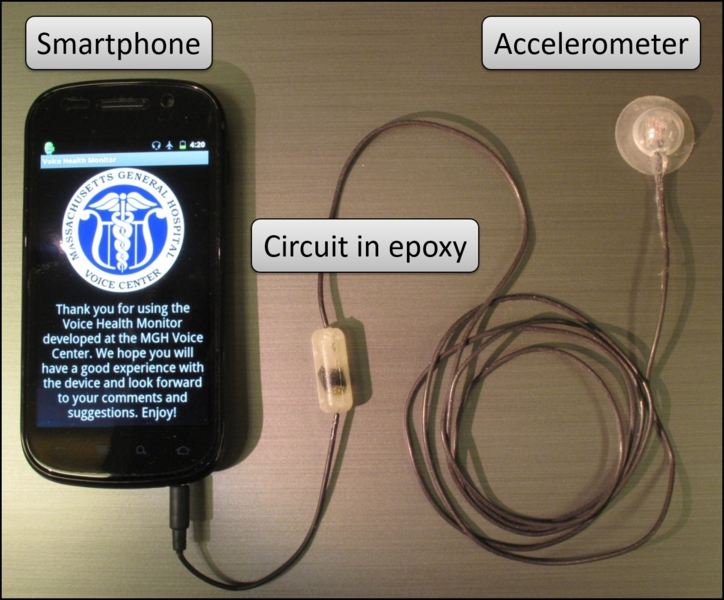

Using accelerometer data collected from a wearable device developed by researchers at the MGH Voice Center, researchers demonstrated that they can detect differences between subjects with MTD and matched controls. The same methods also showed that, after receiving voice therapy, MTD subjects exhibited behavior that was more similar to that of the controls.

“We believe this approach could help detect disorders that are exacerbated by vocal misuse, and help to empirically measure the impact of voice therapy,” says MIT PhD student Marzyeh Ghassemi, who is first author on a related paper that she presented at last week’s Machine Learning in Health Care (MLHC) conference in Los Angeles. “Our long-term goal is for such a system to be used to alert patients when they are using their voices in ways that could lead to problems.”

The paper’s co-authors include John Guttag, MIT professor of electrical engineering and computer science; Zeeshan Syed, CEO of the machine-learning startup Health[at]Scale; and physicians Robert Hillman, Daryush Mehta and Jarrad H. Van Stan of Massachusetts General Hospital.

How it works

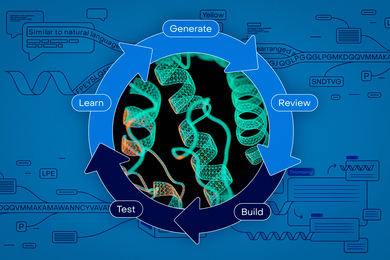

Existing approaches to applying machine learning to physiological signals often involve supervised learning, in which researchers painstakingly label data and provide desired outputs. Besides being time-consuming, such methods currently can’t actually help classify utterances as normal or abnormal, because there is currently not a good understanding of the correlations between accelerometer data and voice misuse.

Because the CSAIL team did not know when vocal misuse was occurring, they opted to use unsupervised learning, where data is unlabeled at the instance level.

“People with vocal disorders aren’t always misusing their voices, and people without disorders also occasionally misuse their voices,” says Ghassemi. “The difficult task here was to build a learning algorithm that can determine what sort of vocal cord movements are prominent in subjects with a disorder.”

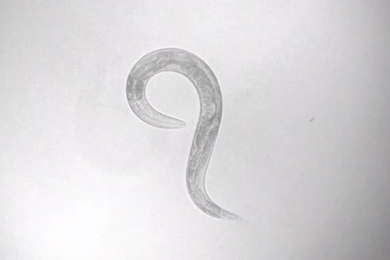

The study was broken into two groups: patients that had been diagnosed with voice disorders, and a control group of individuals without disorders. Each group went about their daily activities while wearing accelerometers on their necks that captured the motions of their vocal folds.

Researchers then looked at the two groups’ data, analyzing more than 110 million “glottal pulses” that each represent one opening and closing of the vocal folds. By comparing clusters of pulses, the team could detect significant differences between patients and controls.

The team also found that after voice therapy the distribution of patients’ glottal pulses were more similar to those of the controls. According to Guttag, this is the first such study to use machine learning to provide objective evidence of the positive effects of voice therapy.

“When a patient comes in for therapy, you might only be able to analyze their voice for 20 or 30 minutes to see what they’re doing incorrectly and have them practice better techniques,” says Susan Thibeault, a professor at the department of surgery at the University of Wisconsin School of Medicine and Public Health who was not involved in the research. “As soon as they leave, we don’t really know how well they’re doing, and so it’s exciting to think that we could eventually give patients wearable devices that use round-the-clock data to provide more immediate feedback.”

Looking ahead

One long-term goal of the work is to be able to use the data not just to improve the lives of those with voice disorders, but to potentially help diagnose specific disorders.

The team also hopes to further explore the underlying reason why certain kinds of vocal pulses are more common in patients than in controls.

“Ultimately we hope this work will lead to smartphone-based biofeedback,” says Hillman. “That sort of technology can help with the most challenging aspect of voice therapy: getting patients to actually employ the healthier vocal behaviors that they learned in therapy in their everyday lives.”

The research was funded, in part, by the Intel Science and Technology Center for Big Data, the Voice Health Institute, the National Institutes of Health (NIH) National Institute on Deafness and Other Communications Disorders, and the National Library of Medicine Biomedical Informatics Research Training.