It’s often said that humans are wired to connect: The neural wiring that helps us read the emotions and actions of other people may be a foundation for human empathy.

But for the past eight years, MIT Media Lab spinout Innerscope Research has been using neuroscience technologies, which gauge subconscious emotions by monitoring brain and body activity, to show just how powerfully we also connect to media and marketing communications.

“We are wired to connect, but that connection system is not very discriminating. So while we connect with each other in powerful ways, we also connect with characters on screens and in books, and, we found, we also connect with brands, products, and services,” says Innerscope’s chief science officer, Carl Marci, a social neuroscientist and former Media Lab researcher.

With this core philosophy, Innerscope — co-founded at MIT by Marci and Brian Levine MBA ’05 — aims to offer market research that’s more advanced than traditional methods, such as surveys and focus groups, to help content-makers shape authentic relationships with their target consumers.

“There’s so much out there, it’s hard to make something people will notice or connect to,” Levine says. “In a way, we aim to be the good matchmaker between content and people.”

So far, Innerscope has drawn some attention. The company has conducted hundreds of studies and more than 100,000 content evaluations for its host of Fortune 500 clients, which include Campbell’s Soup, Yahoo, and Fox Television.

And Innerscope’s studies are beginning to provide valuable insights into the way consumers connect with media and advertising. Take, for instance, its recent project to measure audience engagement with television ads that aired during the Super Bowl.

Innerscope first used biometric sensors to capture fluctuations in heart rate, skin conductance, breathing, and motion among 80 participants who watched select ads and sorted them into “winning” and “losing” commercials (in terms of emotional responses). Then its collaborators at Temple University’s Center for Neural Decision Making used functional magnetic resonance imaging (fMRI) brain scans to further measure engagement.

Ads that performed well elicited increased neural activity in the amygdala (which drives emotions), superior temporal gyrus (sensory processing), hippocampus (memory formation), and lateral prefrontal cortex (behavioral control).

“But what was really interesting was the high levels of activity in the area known as the precuneus — involved in feelings of self-consciousness — where it is believed that we keep our identity. The really powerful ads generated a heightened sense of personal identification,” Marci says.

Using neuroscience to understand marketing communications and, ultimately, consumers’ purchasing decisions is still at a very early stage, Marci admits — but the Super Bowl study and others like it represent real progress. “We’re right at the cusp of coherent, neuroscience-informed measures of how ad engagement works,” he says.

Capturing “biometric synchrony”

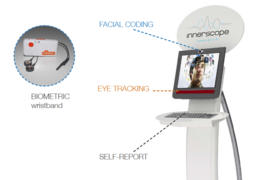

Innerscope’s arsenal consists of 10 tools: Electroencephalography and fMRI technologies measure brain waves and structures. Biometric tools — such as wristbands and attachable sensors — track heart rate, skin conductance, motion, and respiration, which reflect emotional processing. And then there’s eye-tracking, voice-analysis, and facial-coding software, as well as other tests to complement these measures.

Such technologies were used for market research long before the rise of Innerscope. But, starting at MIT, Marci and Levine began developing novel algorithms, informed by neuroscience, that find trends among audiences pointing to exact moments when an audience is engaged together — in other words, in “biometric synchrony.”

Traditional algorithms for such market research would average the responses of entire audiences, Levine explains. “What you get is an overall level of arousal — basically, did they love or hate the content?” he says. “But how is that emotion going to be useful? That’s where the hole was.”

Innerscope’s algorithms tease out real-time detail from individual reactions — comprising anywhere from 500 million to 1 billion data points — to locate instances when groups’ responses (such as surprise, excitement, or disappointment) collectively match.

As an example, Levine references an early test conducted using an episode of the television show “Lost,” in which a group of strangers is stranded on a tropical island.

Levine and Marci attached biometric sensors to six separate groups of five participants. At the long-anticipated moment when the show’s “monster” is finally revealed, nearly everyone held their breath for about 10 to 15 seconds.

“What our algorithms are looking for is this group response. The more similar the group response, the more likely the stimuli is creating that response,” Levine explains. “That allows us to understand if people are paying attention and if they’re going on a journey together.”
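To make the contrast with simple averaging concrete, here is a minimal Python sketch of one way a group-synchrony score could be computed. It is not Innerscope’s algorithm, which the article does not describe in detail. The function names `pearson` and `synchrony`, the sliding-window design, the use of mean pairwise Pearson correlation as the similarity measure, and the sample readings are all illustrative assumptions.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is flat)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    dx = [a - mean_x for a in x]
    dy = [b - mean_y for b in y]
    denom = sqrt(sum(a * a for a in dx) * sum(b * b for b in dy))
    if denom == 0:
        return 0.0
    return sum(a * b for a, b in zip(dx, dy)) / denom


def synchrony(signals, window, step=1):
    """Mean pairwise correlation across participants for each sliding window.

    signals: list of per-participant sample lists, all the same length.
    Returns (start_index, score) pairs; a score near 1 means the group's
    readings rose and fell together in that window.
    """
    length = len(signals[0])
    pairs = list(combinations(range(len(signals)), 2))
    scores = []
    for start in range(0, length - window + 1, step):
        chunks = [s[start:start + window] for s in signals]
        total = sum(pearson(chunks[i], chunks[j]) for i, j in pairs)
        scores.append((start, total / len(pairs)))
    return scores


# Hypothetical readings for three viewers (made-up numbers, not study data).
# The first four samples move independently; the last four share one drop.
viewers = [
    [1, 2, 1, 2, 5, 3, 1, 0],
    [2, 1, 2, 1, 6, 4, 2, 1],
    [1, 1, 2, 2, 4, 2, 0, -1],
]

for start, score in synchrony(viewers, window=4, step=4):
    print(f"samples {start}-{start + 3}: synchrony {score:.2f}")
```

In this toy data the second window scores close to 1 because all three viewers follow the same falling pattern, while the first window scores below zero. An audience-wide average of the raw readings would report only an overall level for each window and would not show whether the viewers were moving together.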

Getting on the map

Before MIT, Marci was a neuroscientist studying empathy, using biometric sensors and other means to explore how empathy between patient and doctor can improve patient health.

“I was lugging around boxes of equipment, with wires coming out and videotaping patients and doctors. Then someone said, ‘Hey, why don’t you just go to the MIT Media Lab,’” Marci says. “And I realized it had the resources I needed.”

At the Media Lab, Marci met behavioral analytics expert and collaborator Alexander “Sandy” Pentland, the Toshiba Professor of Media Arts and Sciences, who helped him set up Bluetooth sensors around Massachusetts General Hospital to track emotions and empathy between doctors and patients with depression.

During this time, Levine, a former Web developer, had enrolled at MIT, splitting his time between the MIT Sloan School of Management and the Media Lab. “I wanted to merge an idea to understand customers better with being able to prototype anything,” he says.

After meeting Marci through a digital anthropology class, Levine proposed that they use this emotion-tracking technology to measure the connections of audiences to media. Using prototype sensor vests equipped with heart-rate monitors, stretch receptors, accelerometers, and skin-conductivity sensors, they trialed the technology with students around the Media Lab.

All the while, Levine pieced together Innerscope’s business plan in his classes at MIT Sloan, with help from other students and professors. “The business-strategy classes were phenomenal for that,” Levine says. “Right after finishing MIT, I had a complete and detailed business plan in my hands.”

Innerscope launched in 2006. But a 2008 study really accelerated the company’s growth. “NBC Universal had a big concern at the time: DVR,” Marci says. “Were people who were watching the prerecorded program still remembering the ads, even though they were clearly skipping them?”

Innerscope compared facial cues and biometrics from people who fast-forwarded ads against those who didn’t. The results were unexpected: While fast-forwarding, people stared at the screen blankly, but their eyes actually caught relevant brands, characters, and text. Because they didn’t want to miss their show, they also showed heightened engagement while fast-forwarding, signaled by leaning forward and staring fixedly.

“What we concluded was that people don’t skip ads,” Marci says. “They’re processing them in a different way, but they’re still processing those ads. That was one of those insights you couldn’t get from a survey. That put us on the map.”

Today, Innerscope is looking to expand. One project is bringing kiosks to malls and movie theaters, where the company recruits passersby for fast and cost-effective results. (Wristbands monitor emotional response, while cameras capture facial cues and eye motion.) The company is also aiming to try applications in mobile devices, wearables, and at-home sensors.

“We’re rewiring a generation of Americans in novel ways and moving toward a world of ubiquitous sensing,” Marci says. “We’ll need data science and algorithms and experts that can make sense of all that data.”