The speakers at the two-day MIT 150 symposium on computing, "Computation and the Transformation of Practically Everything," included the inventor of the World Wide Web, the founders of Akamai and iRobot, one of the leaders of the Human Genome Project and five winners of the Turing Award, the field's most prestigious prize. But interspersed with the insights of computing pioneers of the 20th century were presentations by MIT's young computer science and electrical-engineering faculty on the most exciting research of the 21st.

As befit a 150th-anniversary event, many of the speakers spent at least a little time on historical reflection. A few took the long view: Tom Leighton, MIT professor of applied mathematics and founder of Akamai, a 13-year-old $7 billion company that speeds content delivery over the Internet, pointed out that in 300 B.C.E., Euclid proposed an algorithm for finding the greatest common divisor of two numbers that hasn't been bettered since; Patrick Winston, the Ford Professor of Artificial Intelligence and Computer Science, went back further still, arguing that artificial-intelligence researchers should seek to explain what suddenly prompted humans to make the cave drawings at Lascaux 100,000 years after we reached our biologically modern form.
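For readers who have not seen it, Euclid's algorithm, which computes the greatest common divisor of two integers, can be sketched in a few lines. This is a minimal illustration in its common modern remainder-based form, not anything shown at the symposium; the function name `gcd` and the sample inputs are chosen here purely for the example.

```python
def gcd(a: int, b: int) -> int:
    """Greatest common divisor of a and b via Euclid's algorithm."""
    a, b = abs(a), abs(b)
    # Repeatedly replace the pair (a, b) with (b, a mod b).
    # The common divisors of the pair never change, and b shrinks
    # at every step, so the loop ends with b == 0 and a == gcd.
    while b:
        a, b = b, a % b
    return a


if __name__ == "__main__":
    print(gcd(1071, 462))  # 21
```

Each pass through the loop strictly reduces the second number, so the number of steps grows only with the number of digits in the inputs, which is part of why the method has aged so well.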

Others invoked the early days of computing. David Mindell, Dibner Professor of the History of Engineering and Manufacturing and chair of the MIT150 steering committee, extolled the differential analyzer — a mechanical device developed in the late 1920s by MIT Professor of Electrical Engineering Vannevar Bush and his students — as probably the first programmable computer. "All it took was a week with a wrench and oil adjusting rods," Mindell said. Ed Lazowska, who holds the Bill and Melinda Gates Chair in Computer Science and Engineering at the University of Washington, got what was probably the biggest laugh of the symposium's first day when, complete with well-timed PowerPoint illustration, he pointed out that "the computational power of the computer that guided man to the moon is literally embodied in the Furby."

Big guns

But for the most part, the history recounted by the symposium's speakers was the history they had a hand in making. The five Turing Award winners discussed their groundbreaking work in the order in which they received the prize. Professor emeritus Fernando Corbató, who won in 1990 for his contributions to time-sharing computers that could run more than one application simultaneously, described "the frozen attitude of industrialists" who, in the early 1960s, seemed to find nothing wrong with loading data onto a computer in the evening in order to get an answer by the next morning.

Adjunct professor Butler Lampson, who was at Xerox's Palo Alto Research Center (PARC) in the heady days when it was inventing most of the technologies that enabled the personal-computer revolution, said that PARC researchers had even worked on protocols for a large, decentralized network that anticipated the Internet, but that they had been forbidden to discuss their research publicly at the time. According to Lampson, Vinton Cerf, who (with Bob Kahn) is commonly described as one of the two "fathers of the Internet," gave a lecture at Stanford in the 1970s in which PARC researchers asked him so many penetrating questions that at some point he paused and said, "You've done this before, haven't you?"

Andy Yao of China's Tsinghua University and CSAIL professor Ron Rivest won in 2000 and 2002, respectively, and their work has important points of contact. Yao won, in part, for his demonstration that computers could generate sequences of numbers that, to all intents and purposes, were perfectly random. Rivest won for helping develop the RSA encryption system, which still protects most online financial transactions. RSA depends crucially on random-number generation, and Rivest joked that if Yao, who taught at MIT in 1975 and 1976, had stuck around for a few more years, the algorithm might be known as RSAY. "Or YRSA," Yao quipped.

Institute Professor Barbara Liskov won in 2008 for her work on abstract data types, a vital component of "object-oriented" programming languages such as C++ and Java, which changed the way programming is done. Liskov explained that she had an "aha moment" in the fall of 1972 and spent several years building working systems that instantiated it; then, in 1980, she abruptly moved into the largely unrelated research area of distributed systems. At the end of the session, when the panelists were asked to provide one-sentence summations of what they'd learned over their careers, Liskov said, "Avoid doing incremental work. If that means changing fields, do it."

Practically everything

In keeping with the symposium's title, many of the sessions were organized around fields that computation had transformed. In the travel and entertainment session, Jeremy Wertheimer SM '89, PhD '96, co-founder of ITA Software (which Google is trying to buy for $700 million), described how a single airline, in an attempt to manage the tradeoff between filling seats and maximizing fares, can offer more than 500 different fares between just two cities, all determined and tracked algorithmically. Tony DeRose, who heads Pixar Animation Studios' research group, took the stage joking that it was almost 30 years to the day since he had been rejected from MIT. He went on to mention that in 1995, when Pixar released the first Toy Story, it had taken four hours to computationally generate each frame of the film. In 2010, however, when the studio released Toy Story 3, its computers were 600 times as powerful. So it took … eight hours to generate each frame of film. Moore's law hadn't been able to keep up with the complexity of the algorithms that govern animated films' lighting effects.
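The arithmetic behind DeRose's point can be made explicit. The sketch below rests on a simplifying assumption that is not in his remarks: that "600 times as powerful" means 600 times the rendering throughput, so that the work done on a frame is proportional to render hours multiplied by relative machine speed. The function name and parameters are hypothetical.

```python
def relative_work_per_frame(hours_then: float, hours_now: float, speedup: float) -> float:
    """How many times more computation a frame takes now than it did then.

    Assumes work is proportional to (render hours) x (relative machine speed),
    with the older machine's speed taken as 1.
    """
    work_then = hours_then * 1.0
    work_now = hours_now * speedup
    return work_now / work_then


if __name__ == "__main__":
    # Toy Story (1995): 4 hours per frame.
    # Toy Story 3 (2010): 8 hours per frame on machines 600 times as powerful.
    print(relative_work_per_frame(4, 8, 600))  # 1200.0
```

Under that assumption, each frame of Toy Story 3 required roughly 1,200 times the computation of a frame of the original film, which is why faster hardware did not translate into shorter render times.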

A session on business and economics dealt primarily with the havoc that electronic trading and algorithmic pricing of securities could wreak — and has wreaked — on the financial sector. The MIT Sloan School of Management's Andrew Lo pointed out that computation is also part of the solution, allowing researchers to, for instance, reverse-engineer the closely guarded hedge-fund investment strategies that led to the "quant meltdown" of 2007. Former CEO of the New York Stock Exchange John Thain '77 argued for the importance of human oversight of computerized trading, offering by way of example a case in which the NYSE's specialists were able to intercept a mistaken order to sell 500 million, rather than five million, shares of one company's stock.

In a session on the life sciences, Eric Lander, who was one of the leaders of the Human Genome Project, said that in 1999, he and his colleagues were "so proud of ourselves" because they had managed to sequence a billion letters of human DNA in only a year, but that technological advances since the draft human genome was first presented meant that last year alone, biological researchers had sequenced 125,000 billion letters. And in a session titled "Computing for Everyone," Tim Berners-Lee, the inventor of the World Wide Web, pleaded on behalf of a discipline he called "Web science," which would employ analytic techniques from fields as far-flung as math and psychology "to understand how information propagates across the Web." That type of analysis is a "duty," Berners-Lee argued, lest "the Web turn suddenly into some network that spreads unfounded rumors and conspiracy theories."

Sprinkled amid the themed sessions and panel discussions were several rapid-fire presentations by MIT faculty on a bewildering variety of recent research. Russ Tedrake described how the same control system that led to his group's perching plane could also improve the power output of wind turbines whose turbulence interferes with each other's ability to capture wind energy. Shafi Goldwasser described new techniques that would allow servers in the computational "cloud" to perform operations on encrypted information without decrypting it, preserving user privacy. Dina Katabi described a new method of encoding Internet video so that fluctuations in the strength of wireless connections wouldn't cause it to cut out, showing a video that drew gasps from the audience. Many faculty described research that MIT News has previously reported on, including that of Daniela Rus, Scott Aaronson and Rob Miller.

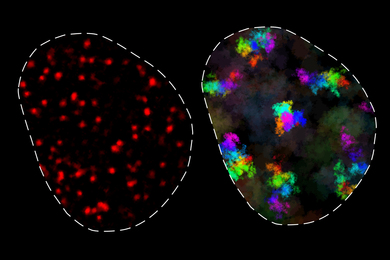
