Over the past two decades, scientists have shown that babies only a few months old have a solid grasp of basic rules of the physical world. They understand that objects can’t wink in and out of existence, and that objects can’t “teleport” from one spot to another.

Now, an international team of researchers co-led by MIT’s Josh Tenenbaum has found that infants can use that knowledge to form surprisingly sophisticated expectations of how novel situations will unfold.

Furthermore, the scientists developed a computational model of infant cognition that accurately predicts infants’ surprise at events that violate their conception of the physical world.

The model, which simulates a type of intelligence known as pure reasoning, calculates the probability of a particular event, given what it knows about how objects behave. The close correlation between the model’s predictions and the infants’ actual responses to such events suggests that infants reason in a similar way, says Tenenbaum, associate professor of cognitive science and computation at MIT.

“Real intelligence is about finding yourself in situations that you’ve never been in before but that have some abstract principles in common with your experience, and using that abstract knowledge to reason productively in the new situation,” he says.

The study, which appears in the May 27 issue of Science, is the first step in a long-term effort to “reverse-engineer” infant cognition by studying babies at 3, 6 and 12 months of age (and at other key stages through the first two years of life) to map out what they know about the physical and social world. That “3-6-12” project is part of a larger Intelligence Initiative at MIT, launched this year with the goal of understanding the nature of intelligence and replicating it in machines.

Tenenbaum and Luca Bonatti of the Universitat Pompeu Fabra in Barcelona are co-senior authors of the Science paper; the co-lead authors are Erno Teglas of Central European University in Hungary and Edward Vul, a former MIT student who worked with Tenenbaum and is now at the University of California at San Diego.

Measuring surprise

Elizabeth Spelke, a professor of psychology at Harvard University, did much of the pioneering work showing that babies understand abstract principles about the physical world. Spelke also demonstrated that infants’ level of surprise can be measured by how long they look at something: The more unexpected the event, the longer they watch.

Tenenbaum and Vul developed a computational model, known as an “ideal-observer model,” to predict how long infants would look at animated scenarios that were more or less consistent with their knowledge of objects’ behavior. The model starts with abstract principles of how objects can behave in general (the same principles that Spelke showed infants have), then runs multiple simulations of how objects could behave in a given situation.

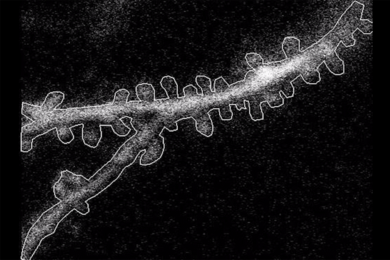

In one example, 12-month-olds were shown four objects — three blue, one red — bouncing around a container. After some time, the scene would be covered, and during that time, one of the objects would exit the container through an opening.

If the scene was blocked very briefly (0.04 seconds), infants would be surprised if one of the objects farthest from the exit had left the container. If the scene was obscured longer (2 seconds), the distance from exit became less important and they were surprised only if the rare (red) object exited first. At intermediate times, both distance to the exit and number of objects mattered.
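The pattern in this experiment can be illustrated with a toy Monte Carlo sketch. This is not the researchers' ideal-observer model: the function name `nearest_to_exit_probs`, the unit-square container, the random-walk motion, the step size, and the shortcut of treating "the object nearest the exit when the occlusion ends" as the one that leaves are all assumptions made for illustration. It only shows how running many simulations from a few simple motion rules yields expectations that depend on distance after a short occlusion and on the number of objects of each color after a long one.

```python
import random

def nearest_to_exit_probs(start_positions, occlusion_steps,
                          n_sims=1000, step_size=0.1, seed=0):
    """Estimate, per object, the probability that it is the one nearest
    the exit once the occlusion ends (a stand-in for 'exits first').

    Toy assumptions: objects random-walk inside a unit square, reflect
    off the walls, and the exit sits at the middle of the right wall.
    """
    rng = random.Random(seed)
    exit_x, exit_y = 1.0, 0.5
    counts = [0] * len(start_positions)
    for _ in range(n_sims):
        positions = [list(p) for p in start_positions]
        for _ in range(occlusion_steps):
            for p in positions:
                for axis in (0, 1):
                    p[axis] += rng.gauss(0.0, step_size)
                    # Reflect off the container walls.
                    if p[axis] < 0.0:
                        p[axis] = -p[axis]
                    elif p[axis] > 1.0:
                        p[axis] = 2.0 - p[axis]
                    # Clamp guards against a rare, very large step.
                    p[axis] = min(1.0, max(0.0, p[axis]))
        dists = [(x - exit_x) ** 2 + (y - exit_y) ** 2 for x, y in positions]
        counts[dists.index(min(dists))] += 1
    return [c / n_sims for c in counts]

if __name__ == "__main__":
    # Index 0 is the single red object, starting next to the exit;
    # indices 1-3 are the blue objects, starting far from it.
    start = [(0.9, 0.5), (0.1, 0.2), (0.1, 0.5), (0.1, 0.8)]
    for label, steps in (("brief occlusion", 1), ("long occlusion", 200)):
        probs = nearest_to_exit_probs(start, steps)
        print(label, "red:", probs[0], "any blue:", sum(probs[1:]))
```

In this sketch, a brief occlusion leaves the red object almost certain to be nearest the exit because it started there, while a long occlusion scrambles positions so that the outcome depends mainly on how many objects of each color there are. A low estimated probability for the observed outcome would correspond to higher surprise, which the study measured as longer looking time.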

The computational model accurately predicted how long babies would look at the same exit event under a dozen different scenarios, varying the number of objects, their spatial position and the time delay. This marks the first time that infant cognition has been modeled with such quantitative precision, and suggests that infants reason by mentally simulating possible scenarios and figuring out which outcome is most likely, based on a few physical principles.

“We don’t yet have a unified theory of how cognition works, but we’re starting to make progress on describing core aspects of cognition that previously were only described intuitively. Now we’re describing them mathematically,” Tenenbaum says.

Spelke says the new paper offers a possible explanation for how human cognitive development can be both extremely fast and highly flexible.

“Until now, no theory has appeared to have the right properties to account for both features, because core knowledge systems tend to be limited and inflexible, whereas systems designed to learn almost anything tend to learn slowly,” she says. “The research described in this article is the first, I believe, to suggest how human infants' learning could be both fast and flexible.”

New models of cognition

In addition to performing similar studies with younger infants, Tenenbaum plans to further refine his model by adding other physical principles that babies appear to understand, such as gravity or friction. “We think infants are much smarter, in a sense, than this model is,” he says. “We now need to do more experiments and model a broader range of the existing literature to test exactly what they know.”

He is also developing similar models for infants’ “intuitive psychology,” or understanding of how other people act. Such models of normal infant cognition could help researchers figure out what goes wrong in disorders such as autism. “We have to understand more precisely what the normal case is like in order to understand how it breaks,” Tenenbaum says.

Another avenue of research is the origin of infants’ ability to understand how the world works. In a paper published in Science in March, Tenenbaum and several colleagues outlined a possible mechanism, also based on probabilistic inference, for learning abstract principles from very early sensory input. “It’s very speculative, but we understand roughly the mathematical machinery that could explain how this sort of knowledge could be learned surprisingly early from fairly minimal experience,” he says.
