Computer chips have stopped getting faster. To keep improving chips’ performance, manufacturers have turned to adding more “cores,” or processing units, to each chip. In principle, a chip with two cores can run twice as fast as a chip with only one core, a chip with four cores four times as fast, and so on.

But breaking up computational tasks so that they run efficiently on multiple cores is difficult, and it only gets harder as the number of cores increases. So a number of ambitious research projects, including one at MIT, are reinventing computing, from chip architecture all the way up to the design of programming languages, to ensure that adding cores continues to translate into improved performance.

To managers of large office networks or Internet server farms, this is a daunting prospect. Is the computing landscape about to change completely? Will information-technology managers have to relearn their trade from scratch?

Probably not, say a group of MIT researchers. In a paper they’re presenting on Oct. 4 at the USENIX Symposium on Operating Systems Design and Implementation in Toronto, the researchers argue that, for at least the next few years, the Linux operating system should be able to keep pace with changes in chip design.

Linux is an open-source operating system, meaning that any programmer who chooses to may modify its code, adding new features or streamlining existing ones. By the same token, however, anyone who publicly distributes those modifications must make their source code available under the same license. Linux itself is available free of charge, which makes it popular among managers of large data centers. Programmers around the world have contributed thousands of hours of their time to the continuing improvement of Linux.

Clogged counter

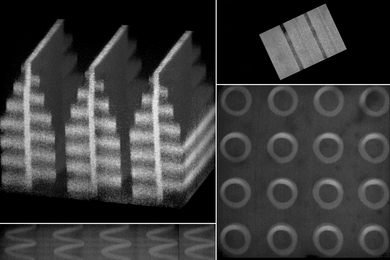

To get a sense of how well Linux will run on the chips of the future, the MIT researchers built a system in which eight six-core chips simulated the performance of a 48-core chip. Then they tested a battery of applications that placed heavy demands on the operating system, activating the 48 cores one by one and observing the consequences.

At some point, the addition of extra cores began slowing the system down rather than speeding it up. But that performance drag had a surprisingly simple explanation. In a multicore system, multiple cores often perform calculations that involve the same chunk of data. As long as the data is still required by some core, it shouldn’t be deleted from memory. So when a core begins to work on the data, it ratchets up a counter stored at a central location, and when it finishes its task, it ratchets the counter down. The counter thus keeps a running tally of the total number of cores using the data. When the tally gets to zero, the operating system knows that it can erase the data, freeing up memory for other procedures.
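The tally described above can be sketched in a few lines of Python. This is only an illustration, not code from Linux or from the MIT paper: the class name `SharedRefCount`, the `freed` flag, and the use of threads to stand in for cores are all assumptions made for the example.

```python
import threading


class SharedRefCount:
    """One central counter that every worker updates (hypothetical names)."""

    def __init__(self):
        self._lock = threading.Lock()  # stands in for an atomic hardware update
        self._count = 0
        self.freed = False

    def acquire(self):
        # A worker starts using the data: ratchet the tally up.
        with self._lock:
            self._count += 1

    def release(self):
        # A worker is done: ratchet the tally down, and free the data at zero.
        with self._lock:
            self._count -= 1
            if self._count == 0:
                self.freed = True  # the data can now be erased


def worker(ref, barrier):
    ref.acquire()
    barrier.wait()  # every worker holds a reference at the same moment
    ref.release()


ref = SharedRefCount()
n_workers = 4  # threads play the role of cores in this sketch
barrier = threading.Barrier(n_workers)
threads = [
    threading.Thread(target=worker, args=(ref, barrier))
    for _ in range(n_workers)
]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(ref.freed)  # True: the last release brought the tally to zero
```

Every `acquire` and `release` here goes through the same lock, so all workers queue up on one shared location, however little work each of them has to do.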

As the number of cores increases, however, tasks that depend on the same data get split up into smaller and smaller chunks. The MIT researchers found that the separate cores were spending so much time ratcheting the counter up and down that they weren’t getting nearly enough work done. Slightly rewriting the Linux code so that each core kept a local count, which was only occasionally synchronized with those of the other cores, greatly improved the system’s overall performance.
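The idea of keeping a local count per core and combining the counts only occasionally might look roughly like the sketch below. It is a simplified illustration under stated assumptions, not the researchers' actual change to Linux: the class `PerCoreRefCount` and its methods are hypothetical, cores are represented by list indices, and the sketch leaves out the care a real kernel needs to keep other cores from updating their slots while the counts are being combined.

```python
class PerCoreRefCount:
    """Reference count split into one slot per core (hypothetical names)."""

    def __init__(self, n_cores):
        # Each core writes only its own slot, so the common case
        # touches no shared location.
        self._local = [0] * n_cores

    def acquire(self, core):
        self._local[core] += 1

    def release(self, core):
        # A slot may go negative if the reference was taken on another core;
        # only the sum across all slots is meaningful.
        self._local[core] -= 1

    def synchronize(self):
        # The occasional, more expensive step: combine the local counts.
        return sum(self._local)

    def can_free(self):
        return self.synchronize() == 0


ref = PerCoreRefCount(n_cores=4)
ref.acquire(0)
ref.acquire(1)
ref.acquire(2)
ref.release(1)
ref.release(3)            # released on a different core than it was taken on
print(ref.synchronize())  # 1: one reference is still outstanding
ref.release(0)
print(ref.can_free())     # True: the combined tally has reached zero
```

The trade-off is that no single slot tells the operating system whether the data is still in use. That question can be answered only at synchronization time, which is why the combining step is kept rare.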

On the job

“That basically tells you how scalable things already are,” says Frans Kaashoek, one of three MIT computer-science professors who, along with four students, conducted the research. “The fact that that is the major scalability problem suggests that a lot of things already have been fixed. You could imagine much more important things to be problems, and they’re not. You’re down to simple reference counts.” Nor, Kaashoek says, do Linux contributors need a trio of MIT professors looking over their shoulders. “Our claim is not that our fixes are the ones that are going to make Linux more scalable,” Kaashoek says. “The Linux community is completely capable of solving these problems, and they will solve them. That’s our hypothesis. In fact, we don’t have to do the work. They’ll do it.”

Kaashoek does say, however, that while the problem with the reference counter was easy to repair, it was not easy to identify. “There’s a bunch of interesting research to be done on building better tools to help programmers pinpoint where the problem is,” he says. “We have written a lot of little tools to help us figure out what’s going on, but we’d like to make that process much more automated.”

"The big question in the community is, as the number of cores on a processor goes up, will we have to completely rethink how we build operating systems," says Remzi Arpaci-Dusseau, a professor of computer science at the University of Wisconsin. "This paper is one of the first to systematically address that question."

Someday, Arpaci-Dusseau says, if the number of cores on a chip gets "significantly beyond 48," new architectures and operating systems may become necessary. But "for the next five, eight years," he says, "I think this paper answers pretty definitively that we probably don't have to completely rethink things, which is great, because it really helps direct resources and research toward more relevant problems."

Arpaci-Dusseau points out, too, that the MIT researchers "showed that finding the problems is the hard part. What that hints at for the rest of the community is that building techniques — whether they're software techniques or hardware techniques or both — that help to identify these problems is going to be a rich new area as we go off into this multicore world."
