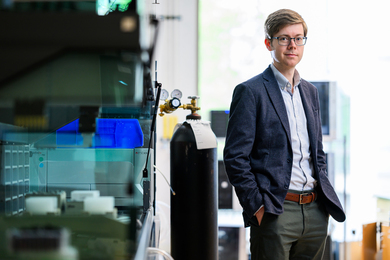

Brad Buran, a Harvard-MIT Division of Health Sciences and Technology (HST) graduate student, lost his hearing to pneumococcal meningitis when he was 14 months old. Today, the fifth-year doctoral candidate in HST's Speech and Hearing Biosciences and Technology program is becoming an expert in the neuroscience of speech and hearing.

Because he is immersed in an environment filled with researchers investigating hearing loss, speech therapy, linguistics, and cochlear implants, Buran sometimes becomes the subject of probing conversations. This constant scrutiny might be off-putting for some, but for Buran, it is fodder for his own musings about the way his brain works.

"It's interesting, because so many people in the program are specialists in an area that relates to me personally," says Buran.

His growing scientific expertise, combined with his personal experience, allows him to go from talking with a programmer about ways to improve cochlear implant coding strategies to discussing linguistics with a speech pathologist without missing a beat. And according to classmate Adrian "K.C." Lee, having Buran as a classmate, colleague and friend enriches his own learning experience by revealing to him the nuances of social communication, such as how people perceive accents (Lee is Australian) or pick up idioms.

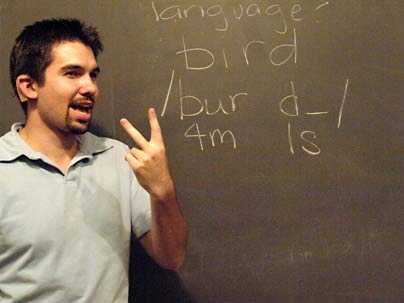

Buran uses a technique called cued speech to follow lectures, conversations, and the subtle cues hearing people take for granted, such as the rustle of papers as the class flips to the next page. This technique turns sounds--from speech to sneezes and even page turns--into hand gestures to create a visual likeness of what he is unable to hear.

For instance, the word "bat" involves three sounds: "b," "a," and "t." Cued speech combines three cues--hand gestures paired with locations around the mouth and throat--to convey these distinct sounds visually. These gestures help Buran distinguish "bat" from "pat," two words that look the same to a lip reader. At the same time, they preserve similarities, allowing him to see that the words rhyme.

Cued speech has played a significant role in helping Buran reach his potential as a scientist by giving him access to the same information as his hearing peers. In this regard, cued speech differs from American Sign Language (ASL). ASL is a distinct language, not a way to represent English with the hands. Translating English into ASL requires interpreting the meaning and then approximating it with signs.

In contrast, "Cued speech is a modality for language, like speaking or writing," says Tom Shull, a Boston-area cued speech professional. "When I cue, I'm not an interpreter. I'm a transliterator."

Transliteration transparently relays language phoneme by phoneme. "The sounds are just going into the transliterator and coming out as gestures. All the decoding happens at Brad's end," says Lee.

Because cued speech is not a language, it is relatively easy to learn. Lee and more than a dozen of Buran's classmates learned cued speech after meeting Buran. In fact, one friend nearly mastered the technique over a weekend. Now, the group cues during meetings, classes and social events.

"He's certainly not isolated by his impairment," says Buran's advisor Charles Liberman, an HST professor and director of the Eaton-Peabody Laboratory of Auditory Physiology at Massachusetts Eye and Ear Infirmary. "He's in there, organizing [things], galvanizing his classmates to take cued speech classes or to go skiing."

Buran also has a cochlear implant and is learning to use it more effectively. He aims to communicate well on the phone, in large groups and in noisy environments. By listening to himself, he is also improving his speech and intonation. "I can be a very sarcastic person, but you have to see it in my face," he says. "I am not good at using my voice for that yet."

Meanwhile, Buran thinks constantly about his own research. Though he now studies the physiology and molecular biology of the inner ear, his real interest is in language cognition. Because he acquired language both aurally and visually, and because he speaks with his hands as well as his voice, he often thinks about how this works inside his brain. "When you decide to say something, what creates the appropriate motor sequence?" he asks. How does his brain pick between moving his mouth to speak and his hands to cue?

"No one really understands how all of that works," says Buran. More than likely, someday, he will.

A version of this article appeared in MIT Tech Talk on October 3, 2007.