Imagine that one day you’re riding the train and decide to hop the turnstile to avoid paying the fare. It probably won’t have a big impact on the financial well-being of your local transportation system. But now ask yourself, “What if everyone did that?” The outcome is much different — the system would likely go bankrupt and no one would be able to ride the train anymore.

Moral philosophers have long believed this type of reasoning, known as universalization, is the best way to make moral decisions. But do ordinary people spontaneously use this kind of moral judgment in their everyday lives?

In a study of several hundred people, MIT and Harvard University researchers have confirmed that people do use this strategy in particular situations called “threshold problems.” These are social dilemmas in which harm can occur if everyone, or a large number of people, performs a certain action. The authors devised a mathematical model that quantitatively predicts the judgments people are likely to make in these situations. They also showed, for the first time, that children as young as 4 years old can use this type of reasoning to judge right and wrong.

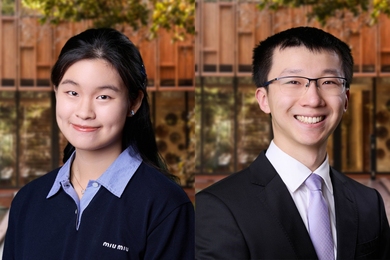

“This mechanism seems to be a way that we spontaneously can figure out what are the kinds of actions that I can do that are sustainable in my community,” says Sydney Levine, a postdoc at MIT and Harvard and the lead author of the study.

Other authors of the study are Max Kleiman-Weiner, a postdoc at MIT and Harvard; Laura Schulz, an MIT professor of cognitive science; Joshua Tenenbaum, a professor of computational cognitive science at MIT and a member of MIT’s Center for Brains, Minds, and Machines and Computer Science and Artificial Intelligence Laboratory (CSAIL); and Fiery Cushman, an assistant professor of psychology at Harvard. The paper is appearing this week in the Proceedings of the National Academy of Sciences.

Judging morality

The concept of universalization has been included in philosophical theories since at least the 1700s. Universalization is one of several strategies that philosophers believe people use to make moral judgments, along with outcome-based reasoning and rule-based reasoning. However, there have been few psychological studies of universalization, and many questions remain regarding how often this strategy is used, and under what circumstances.

To explore those questions, the MIT/Harvard team asked participants in their study to evaluate the morality of actions taken in situations where harm could occur if too many people perform the action. In one hypothetical scenario, John, a fisherman, is trying to decide whether to start using a new, more efficient fishing hook that will allow him to catch more fish. However, if every fisherman in his village decided to use the new hook, there would soon be no fish left in the lake.

The researchers found that many subjects did use universalization to evaluate John’s actions, and that their judgments depended on a variety of factors, including the number of people who were interested in using the new hook and the number of users it would take to trigger a harmful outcome.

To tease out the impact of those factors, the researchers created several versions of the scenario. In one, no one else in the village was interested in using the new hook, and in that scenario, most participants deemed it acceptable for John to use it. However, if others in the village were interested but chose not to use it, then John’s decision to use it was judged to be morally wrong.

The researchers also found that they could use their data to create a mathematical model that explains how people take different factors into account, such as the number of people who want to perform the action and the number of people it would take to cause harm. The model accurately predicts how people’s judgments change when these factors change.
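To make the threshold idea concrete, here is a minimal Python sketch of the comparison described above. It is not the researchers' model, which the article does not spell out. It only illustrates the basic logic of a threshold problem: imagine that everyone who wants to perform the action does so, then check whether that number reaches the level at which harm occurs. The function names, the all-or-nothing verdict, and the numbers in the example are hypothetical.

```python
def harm_if_universalized(n_interested: int, harm_threshold: int) -> bool:
    """Return True if harm would occur were every interested person to act.

    n_interested:   number of people who want to perform the action,
                    including the person being judged (an assumption).
    harm_threshold: smallest number of people acting that causes harm.
    """
    if n_interested < 0 or harm_threshold < 1:
        raise ValueError("n_interested must be >= 0 and harm_threshold >= 1")
    return n_interested >= harm_threshold


def judge(n_interested: int, harm_threshold: int) -> str:
    """Give a simple all-or-nothing verdict based on the universalized outcome."""
    if harm_if_universalized(n_interested, harm_threshold):
        return "not acceptable"
    return "acceptable"


# Hypothetical numbers: the lake collapses once 7 fishermen use the new hook.
print(judge(n_interested=1, harm_threshold=7))   # only John is interested
print(judge(n_interested=12, harm_threshold=7))  # many others are interested too
```

With these made-up numbers, the sketch mirrors the pattern reported in the fishing scenario: the action passes when only John wants to use the hook and fails when enough other villagers are interested to exhaust the lake. A model that predicts graded judgments would need a more nuanced output than this yes-or-no verdict.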

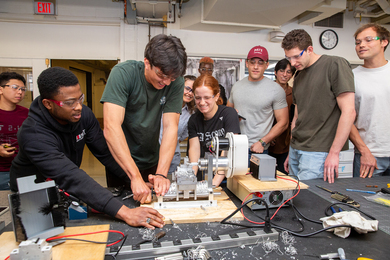

In their last set of studies, the researchers created scenarios that they used to test judgments made by children between the ages of 4 and 11. One story featured a child who wanted to take a rock from a path in a park for his rock collection. Children were asked to judge if that was OK, under two different circumstances: In one, only one child wanted a rock, and in the other, many other children also wanted to take rocks for their collections.

The researchers found that most of the children deemed it wrong to take a rock if everyone wanted to, but permissible if there was only one child who wanted to do it. However, the children were not able to specifically explain why they had made those judgments.

“What's interesting about this is we discovered that if you set up this carefully controlled contrast, the kids seem to be using this computation, even though they can't articulate it,” Levine says. “They can't introspect on their cognition and know what they're doing and why, but they seem to be deploying the mechanism anyway.”

In future studies, the researchers hope to explore how and when the ability to use this type of reasoning develops in children.

Collective action

In the real world, there are many instances where universalization could be a good strategy for making decisions, but it’s not necessary because rules are already in place governing those situations.

“There are a lot of collective action problems in our world that can be solved with universalization, but they're already solved with governmental regulation,” Levine says. “We don't rely on people to have to do that kind of reasoning, we just make it illegal to ride the bus without paying.”

However, universalization can still be useful in situations that arise suddenly, before any government regulations or guidelines have been put in place. For example, at the beginning of the Covid-19 pandemic, before many local governments began requiring masks in public places, people contemplating wearing masks might have asked themselves what would happen if everyone decided not to wear one.

The researchers now hope to explore the reasons why people sometimes don’t seem to use universalization in cases where it could be applicable, such as combating climate change. One possible explanation is that people don’t have enough information about the potential harm that can result from certain actions, Levine says.

The research was funded by the John Templeton Foundation, the Templeton World Charity Foundation, and the Center for Brains, Minds, and Machines.