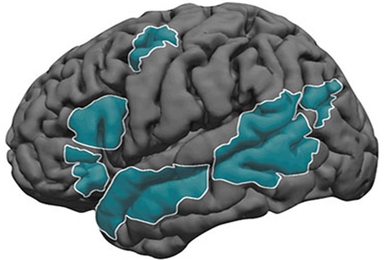

Among the many things rodents have taught neuroscientists is that, in a region called the hippocampus, the brain creates a new map for every unique spatial context — for instance, a different room or maze. But scientists have so far struggled to learn how animals decide when a context is novel enough to merit creating, or at least revising, these mental maps. In a study in eLife, MIT and Harvard University researchers propose a new understanding: The process of “remapping” can be mathematically modeled as a feat of probabilistic reasoning by the rodents.

The approach offers scientists a new way to interpret many experiments that depend on measuring remapping to investigate learning and memory. Remapping is integral to that pursuit, because animals (and people) associate learning closely with context, and hippocampal maps indicate which context an animal believes itself to be in.

“People have previously asked ‘What changes in the environment cause the hippocampus to create a new map?’ but there haven’t been any clear answers,” says lead author Honi Sanders. “It depends on all sorts of factors, which means that how the animals define context has been shrouded in mystery.”

Sanders is a postdoc in the lab of co-author Matthew Wilson, Sherman Fairchild Professor in The Picower Institute for Learning and Memory and the departments of Biology and Brain and Cognitive Sciences at MIT. He is also a member of the Center for Brains, Minds and Machines. The pair collaborated with Samuel Gershman, a professor of psychology at Harvard.

A fundamental problem with remapping, one that has frequently led labs to report conflicting, confusing, or surprising results, is that scientists cannot simply assure their rats that they have moved from experimental Context A to Context B, or that they are still in Context A even if some ambient condition, like temperature or odor, has inadvertently changed. It is up to the rat to explore and infer whether conditions like the maze shape, smell, lighting, the position of obstacles and rewards, or the task it must perform have changed enough to trigger a full or partial remapping.

So, rather than trying to understand remapping measurements based on what the experimental design is supposed to induce, Sanders, Wilson, and Gershman argue that scientists should predict remapping by mathematically accounting for the rat’s reasoning using Bayesian statistics, which quantify the process of starting with an uncertain assumption and then updating it as new information emerges.

“You never experience exactly the same situation twice. The second time is always slightly different,” Sanders says. “You need to answer the question: ‘Is this difference just the result of normal variation in this context or is this difference actually a different context?’ The first time you experience the difference you can’t be sure, but after you’ve experienced the context many times and get a sense of what variation is normal and what variation is not, you can pick up immediately when something is out of line.”

The trio call their approach “hidden state inference” because to the animal, the possible change of context is a hidden state that must be inferred.

In the study, the authors describe several cases in which hidden state inference can help explain the remapping, or the lack of it, observed in prior studies.

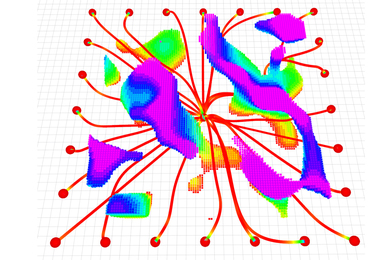

For instance, in many studies it’s been difficult to predict how changing some of the cues that a rodent navigates by in a maze (e.g., a light or a buzzer) will influence whether it makes a completely new map or partially remaps the current one, and by how much. Mostly, the data have shown that there isn’t an obvious “one-to-one” relationship between cue change and remapping. But the new model predicts how, as more cues change, a rodent can transition from being uncertain about whether an environment is novel (and therefore partially remapping) to being sure enough of that to fully remap.
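
To make the cue-counting intuition concrete, here is a minimal Python sketch of a Bayesian update over two hypotheses, "same context" and "new context." It is an illustration only, not the model from the eLife paper: the function name `posterior_new_context`, the assumption that cues change independently, and every probability in it are invented for the example.

```python
def posterior_new_context(changed, total, prior_new=0.5,
                          p_change_same=0.2, p_change_new=0.8):
    """Posterior probability of a new context after `changed` of `total` cues differ.

    All parameters are illustrative assumptions, not values from the study:
    p_change_same: chance a cue looks different through ordinary variation alone.
    p_change_new:  chance a cue looks different if the context really is new.
    """
    unchanged = total - changed
    # Likelihood of this pattern of cue changes under each hypothesis
    # (the binomial coefficient is the same for both, so it cancels).
    like_new = p_change_new ** changed * (1 - p_change_new) ** unchanged
    like_same = p_change_same ** changed * (1 - p_change_same) ** unchanged
    # Bayes' rule: weight each likelihood by its prior and normalize.
    evidence = prior_new * like_new + (1 - prior_new) * like_same
    return prior_new * like_new / evidence


for changed in range(5):
    print(changed, "of 4 cues changed ->",
          round(posterior_new_context(changed, total=4), 3))
```

With these made-up numbers, the belief in a new context sits near zero when no cues change, at about one-half when two of four change, and near one when all four change. The middle of that range is where, on the article's account, partial remapping would be expected.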

In another case, the model offers a new prediction to resolve a remapping ambiguity that has arisen when scientists have incrementally “morphed” the shape of rodent enclosures. Multiple labs, for instance, found different results when they familiarized rats with square and round environments and then tried to measure how and whether they remap when placed in intermediate shapes, such as an octagon. Some labs saw complete remapping, while others observed only partial remapping. The new model predicts how that could be true: rats exposed to the intermediate environment after longer training would be more likely to fully remap than those exposed to the intermediate shape earlier in training, because with more experience they would be more sure of their original environments, and therefore more certain that the intermediate one was a real change.
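
The effect of training length can be sketched the same way. The Python below is a toy illustration under loud assumptions, not the authors' model: enclosure shape is reduced to a single number (0 for square, 1 for round, 0.5 for an octagon), observations are Gaussian with a known noise level, the three hypotheses start equally likely, and all names and constants are hypothetical.

```python
import math

# Illustrative constants (assumptions, not values from the study).
PRIOR_MEAN = 0.5   # before any experience, a context's shape could be anything
PRIOR_VAR = 1.0    # broad uncertainty about an unfamiliar context
NOISE_VAR = 0.04   # visit-to-visit variation within one context


def normal_pdf(x, mean, var):
    return math.exp(-(x - mean) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)


def predictive(n_visits, sample_mean):
    """Mean and variance of the next observation expected in a familiar context."""
    precision = 1 / PRIOR_VAR + n_visits / NOISE_VAR
    post_mean = (PRIOR_MEAN / PRIOR_VAR + n_visits * sample_mean / NOISE_VAR) / precision
    # Remaining uncertainty about the context's mean shrinks as visits accumulate.
    return post_mean, NOISE_VAR + 1 / precision


def p_new_context(x, n_visits):
    """Posterior that shape x is a new context, after n_visits to square and to round."""
    like_square = normal_pdf(x, *predictive(n_visits, 0.0))
    like_round = normal_pdf(x, *predictive(n_visits, 1.0))
    like_new = normal_pdf(x, PRIOR_MEAN, PRIOR_VAR + NOISE_VAR)
    # Equal prior weight on the three hypotheses, so the priors cancel.
    return like_new / (like_new + like_square + like_round)


for n in (1, 5, 20):
    print(n, "training visits -> P(new context | octagon) =",
          round(p_new_context(0.5, n), 3))
```

Under these assumptions the probability that the octagon is a new context rises from roughly 0.37 after one training visit to roughly 0.66 after twenty, which matches the direction of the prediction: more experience narrows what counts as normal variation in the familiar enclosures.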

The math of the model even includes a variable that can account for differences between individual animals. Sanders is looking at whether rethinking old results in this way could allow researchers to understand why different rodents respond so variably to similar experiments.

Ultimately, Sanders says, he hopes the study will help fellow remapping researchers adopt a new way of thinking about surprising results — by considering the challenge their experiments pose to their subjects.

“Animals are not given direct access to context identities, but have to infer them,” he says. “Probabilistic approaches capture the way that uncertainty plays a role when inference occurs. If we correctly characterize the problem the animal is facing, we can make sense of differing results in different situations because the differences should stem from a common cause: the way that hidden state inference works.”

The U.S. National Science Foundation funded the research.