Olympic skaters can launch, perform multiple aerial turns, and land gracefully, anticipating imperfections and reacting quickly to correct course. To make such elegant movements, the brain must have an internal model of the body to control, predict, and make almost-instantaneous adjustments to motor commands. So-called “internal models” are a fundamental concept in engineering and have long been suggested to underlie control of movement by the brain, but what about processes that occur in the absence of movement, such as contemplation, anticipation, and planning?

Using a novel combination of task design, data analysis, and modeling, MIT neuroscientist Mehrdad Jazayeri and colleagues now provide compelling evidence that the core elements of an internal model also control purely mental processes.

“During my thesis, I realized that I’m interested not so much in how our senses react to sensory inputs, but instead in how my internal model of the world helps me make sense of those inputs,” says Jazayeri, the Robert A. Swanson Career Development Professor of Life Sciences, a member of MIT’s McGovern Institute for Brain Research, and the senior author of the study.

Understanding the building blocks that control such mental processes could also help paint a clearer picture of how those processes are disrupted in mental disorders such as schizophrenia.

Internal models for mental processes

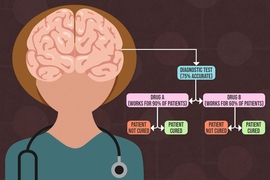

Scientists working on the motor system have long theorized that the brain overcomes noisy and slow signals using an accurate internal model of the body. This internal model serves three critical functions: it provides motor commands to control movement, simulates upcoming movement to overcome delays, and uses feedback to make real-time adjustments.

“The framework that we currently use to think about how the brain controls our actions is one that we have borrowed from robotics: We use controllers, simulators, and sensory measurements to control machines and train operators,” explains Reza Shadmehr, a professor at the Johns Hopkins School of Medicine who was not involved with the study. “That framework has largely influenced how we imagine our brain controlling our movements.”

Jazayeri and colleagues wondered whether the same framework might explain the control principles governing mental states in the absence of any movement.

“When we’re simply sitting, thoughts and images run through our heads and, fundamental to intellect, we can control them,” explains lead author Seth Egger, a former postdoc in the Jazayeri lab who is now at Duke University. “We wanted to find out what’s happening between our ears when we are engaged in thinking.”

Imagine, for example, a sign language interpreter keeping up with a fast speaker. To track speech accurately, the interpreter continuously anticipates where the speech is going, rapidly adjusting when the actual words deviate from the prediction. The interpreter could be using an internal model to anticipate upcoming words and using feedback to make adjustments on the fly.

1-2-3-Go

Hypothesizing about how the components of an internal model function in scenarios such as translation is one thing. Cleanly measuring and proving the existence of these elements is much more complicated, as the activities of the controller, simulator, and feedback are intertwined. To tackle this problem, Jazayeri and colleagues devised a clever task with primate models in which the controller, simulator, and feedback act at distinct times.

In this task, called “1-2-3-Go,” the animal sees three consecutive flashes (1, 2, and 3) that form a regular beat, and learns to make an eye movement (Go) when it anticipates the fourth flash should occur. During the task, researchers measured neural activity in a region of the frontal cortex they had previously linked to the timing of movement.

Jazayeri and colleagues had clear predictions about when the controller would act (between the third flash and “Go”) and when feedback would be engaged (with each flash of light). The key surprise came when researchers saw evidence for the simulator anticipating the third flash. This unexpected neural activity had dynamics that resembled those of the controller, but it was not associated with a response. In other words, the researchers uncovered a covert plan that functions as the simulator, thus revealing all three elements of an internal model for a mental process: the planning and anticipation of “Go” in the “1-2-3-Go” sequence.

“Jazayeri’s work is important because it demonstrates how to study mental simulation in animals,” explains Shadmehr, “and where in the brain that simulation is taking place.”

Having found how and where to measure an internal model in action, Jazayeri and colleagues now plan to ask whether these control strategies can explain how primates effortlessly generalize their knowledge from one behavioral context to another. For example, how does an interpreter rapidly adjust when someone with widely different speech habits takes the podium? This line of investigation promises to shed light on high-level mental capacities of the primate brain that simpler animals seem to lack, that go awry in mental disorders, and that designers of artificial intelligence systems so fondly seek.