A new activity offered during January’s Independent Activities Period (IAP) showcased fully autonomous 1:10-scale model cars navigating through MIT’s underground tunnels in a robot race. Rapid Autonomous Complex-Environment Competing Ackermann-steering Robot (RACECAR) vehicles featured a high-performance embedded computer, a rich sensor suite, an open-source robotics software framework, and student-developed autonomy algorithms that allowed the cars to navigate MIT’s tunnels as fast as possible. The winning team’s vehicle performed an almost flawless run at an average speed of 7.1 mph.

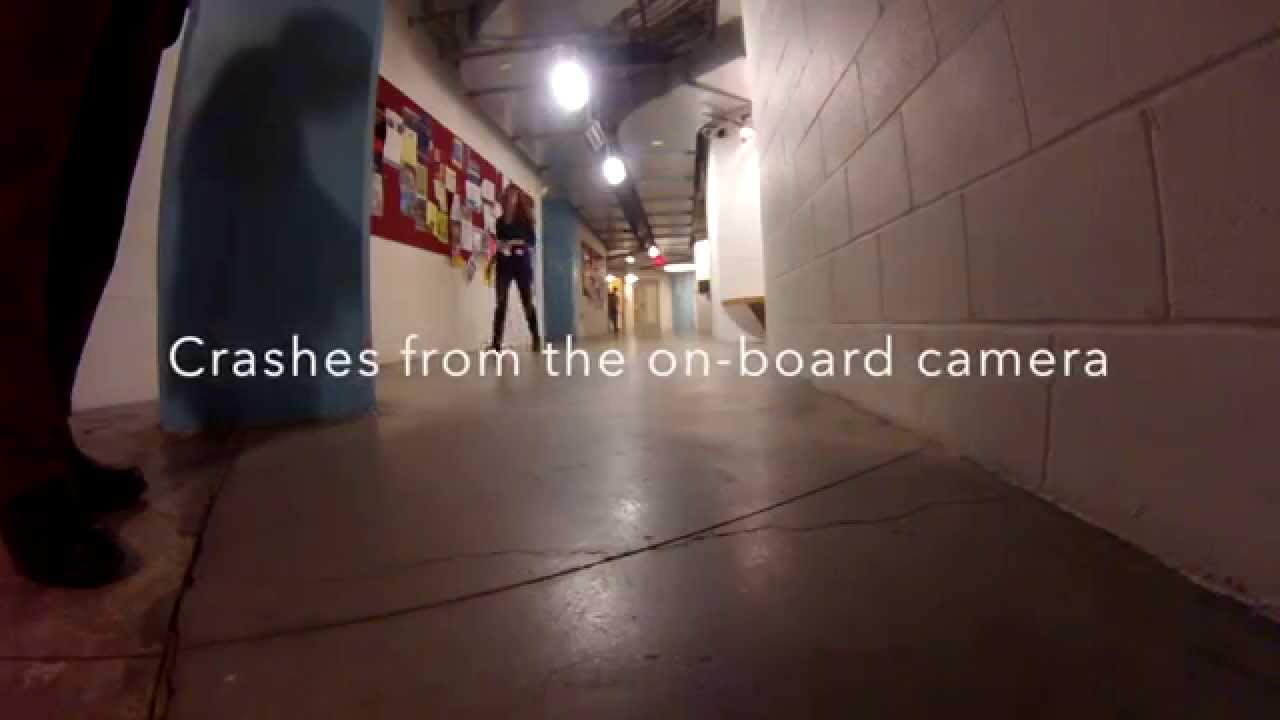

The class was divided into four student teams. Provided with an assembled robot and basic software infrastructure, each team was tasked with designing, implementing, and testing autonomy algorithms to guide the vehicle through MIT’s tunnels at high speeds while avoiding contact with walls, doorways, and other obstacles. With MIT Department of Facilities assistance to clear the environment, each team demonstrated its algorithms via a robot race in the tunnel network below the Stata Center.

The robot chassis was based on a 1:10-scale radio-controlled racecar modified to accept onboard control of its steering and throttle actuators. To perceive its motion and the local environment, the robot was outfitted with a heterogeneous set of sensors, including a scanning laser range finder, camera, inertial measurement unit, and visual odometer. Sensor data and autonomy algorithms were processed on board with an NVIDIA Jetson Tegra K1 embedded computer. The Tegra K1 processor features a 192-core general-purpose graphics processing unit. The MIT IAP activity was one of the first to integrate the emerging embedded supercomputers into an educational event.

The robot’s software leverages the Robot Operating System (ROS) framework to facilitate rapid development. ROS is a collection of open-source drivers, algorithms, tools, and libraries widely used by robotics researchers and industry. Students integrated existing software modules, such as drivers for reading sensor data, alongside their custom algorithms to rapidly compose a complete autonomous system.

A key challenge for the students was developing algorithms capable of processing the data from all sensors in real time and making quick decisions to accelerate, brake, or turn the car without losing control. Each team pursued its own solution, although most converged on reactive planning approaches that used the laser range finder to track the car’s position relative to the corridor walls.
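To give a sense of what a reactive approach of this kind can look like, here is a minimal Python sketch. It is not any team's actual code: the function names, gains, sector widths, and speed limits are all illustrative assumptions, and the scan is taken to be a plain list of ranges in meters with angles measured counterclockwise from straight ahead. The idea is to steer toward the side with more clearance and to slow down as the corridor ahead closes in.

```python
import math


def min_range_in_sector(ranges, angle_min, angle_increment, center_deg, half_width_deg):
    """Smallest valid range among beams within half_width_deg of center_deg, or None."""
    best = None
    for i, r in enumerate(ranges):
        if not math.isfinite(r) or r <= 0.0:
            continue  # skip dropouts and invalid returns
        beam_deg = math.degrees(angle_min + i * angle_increment)
        if abs(beam_deg - center_deg) <= half_width_deg:
            best = r if best is None else min(best, r)
    return best


def reactive_command(ranges, angle_min, angle_increment,
                     steer_gain=0.5, max_steer=0.34,
                     cruise_speed=3.0, stop_distance=0.5, slow_distance=3.0):
    """Return (steering angle in rad, speed in m/s) from one laser scan.

    All parameter values are hypothetical defaults chosen for illustration.
    Positive steering means turning left.
    """
    left = min_range_in_sector(ranges, angle_min, angle_increment, 90.0, 20.0)
    right = min_range_in_sector(ranges, angle_min, angle_increment, -90.0, 20.0)
    ahead = min_range_in_sector(ranges, angle_min, angle_increment, 0.0, 10.0)

    # Proportional steering: more room on the left means steer left, and vice versa.
    if left is None or right is None:
        steer = 0.0
    else:
        steer = max(-max_steer, min(max_steer, steer_gain * (left - right)))

    # Scale speed linearly between stop_distance and slow_distance ahead.
    if ahead is None or ahead >= slow_distance:
        speed = cruise_speed
    elif ahead <= stop_distance:
        speed = 0.0
    else:
        speed = cruise_speed * (ahead - stop_distance) / (slow_distance - stop_distance)
    return steer, speed
```

A controller like this keeps no map and no memory of past scans, which is what makes it "reactive": each decision depends only on the latest sensor reading, so it is cheap enough to run at the sensor's update rate.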

Unlike more traditional laboratory exercises in which students work on equipment in the lab during lab hours, the class featured an “inverted lab” experience in which students were given the cars so that they had access to them at all times; hence, they could practice their skills in hackathon-style small challenges against one another.

The class featured seven lectures on algorithmic robotics. Topics included the Robot Operating System; selected topics in algorithmic robotics, such as sensing, perception, control, and planning algorithms; and several case studies. The lectures were followed by a two-day hackathon in which most of the software development took place.

Three out of four teams successfully completed the 515-foot race course. The best-performing car completed the track in less than 50 seconds at an average speed of more than 7 mph, roughly equivalent to a human’s running speed.
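The reported figures can be cross-checked with a short unit conversion. The Python sketch below uses only the numbers quoted in this article (a 515-foot course, a time under 50 seconds, and the 7.1 mph average cited for the winning run); the function names are illustrative.

```python
FEET_PER_MILE = 5280
SECONDS_PER_HOUR = 3600


def average_speed_mph(distance_ft, time_s):
    """Average speed in miles per hour for a distance in feet and a time in seconds."""
    return (distance_ft / FEET_PER_MILE) / (time_s / SECONDS_PER_HOUR)


def run_time_s(distance_ft, speed_mph):
    """Time in seconds needed to cover a distance in feet at a speed in miles per hour."""
    feet_per_second = speed_mph * FEET_PER_MILE / SECONDS_PER_HOUR
    return distance_ft / feet_per_second


# Covering 515 feet in exactly 50 seconds is about 7.02 mph,
# so any time under 50 seconds implies an average above 7 mph.
print(round(average_speed_mph(515, 50), 2))

# At the 7.1 mph average cited for the winning run, the course takes about 49.5 seconds.
print(round(run_time_s(515, 7.1), 1))
```

The two figures agree: a 7.1 mph average over 515 feet corresponds to a run of roughly 49.5 seconds, just under the 50-second mark.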

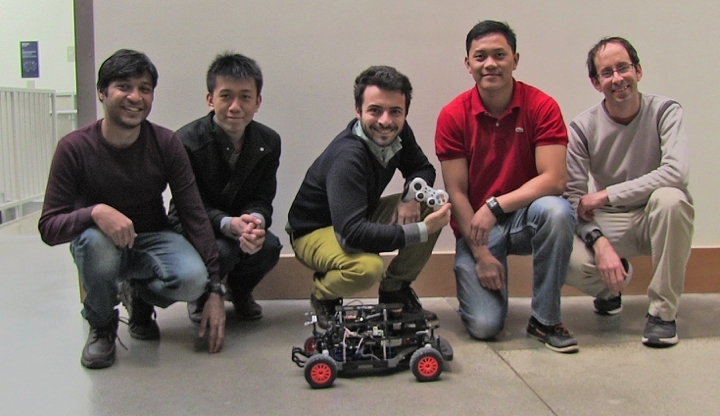

The class was jointly developed by the Department of Aeronautics and Astronautics (AeroAstro) and MIT Lincoln Laboratory. The instructors were Professor Sertac Karaman of AeroAstro and the Laboratory for Information and Decision Systems, along with Michael Boulet, Owen Guldner, and Michael Park of Lincoln Laboratory.

The instructors say that an important aspect of the class was enhancing embedded systems and robotics education at MIT. They are considering holding the class again in 2016, possibly expanding the competition to an autonomous vehicle’s exploration of a larger section of MIT’s 1.5-km tunnel network.