The design of aromas — the flavors of packaged food and drink and the scents of cleaning products, toiletries and other household items — is a multibillion-dollar business. The big flavor companies spend tens of millions of dollars every year on research and development, including a lot of consumer testing.

But making sense of taste-test results is difficult. Subjects’ preferences can vary so widely that no clear consensus may emerge. Collecting enough data about each subject would allow flavor companies to filter out some of the inconsistencies, but after about 40 flavor samples, subjects tend to suffer “smell fatigue,” and their discriminations become unreliable. So companies are stuck making decisions on the basis of too little data, much of it contradictory.

One of the biggest flavor companies in the world has turned to researchers in MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) for help. To analyze taste-test results, the CSAIL researchers are using genetic programming, in which mathematical models compete with each other to fit the available data and then cross-pollinate to produce models that are more accurate still.

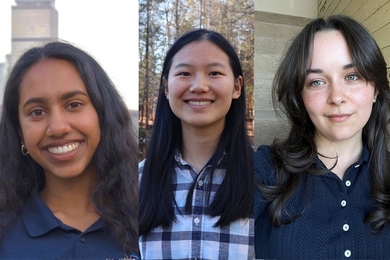

The Swiss flavor company Givaudan asked CSAIL principal research scientist Una-May O’Reilly, postdoc Kalyan Veeramachaneni and the University of Antwerp’s Ekaterina Vladislavleva to help interpret the results of tests in which 69 subjects evaluated 36 different combinations of seven basic flavors, assigning each a score according to its olfactory appeal.

For each subject, O’Reilly and her colleagues randomly generate mathematical functions that predict scores according to the concentrations of different flavors. Each function is assessed according to two criteria: accuracy and simplicity. A function that, for example, predicts a subject’s preferences fairly accurately using a single factor — say, concentration of butter — could prove more useful than one that yields a slightly more accurate prediction but requires a complicated mathematical manipulation of all seven variables.

After all the functions have been assessed, those that provide poor predictions are winnowed out. Elements of the survivors are randomly recombined to produce a new generation of functions; those are then evaluated for accuracy and simplicity. The whole process is repeated about 30 times, until it converges on a set of functions that accord well with the preferences of a single subject.
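The loop described above can be sketched in a few dozen lines of Python. This is a minimal illustration, not the researchers' implementation. It makes several assumptions the article does not state: functions are represented as small expression trees, accuracy and simplicity are folded into one score using a hypothetical `size_penalty` weight, the poorest three-quarters of each generation are discarded, and the taste-test data is synthetic.

```python
import operator
import random

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}
N_FLAVORS = 7  # seven basic flavors, as in the study

def random_tree(rng, depth=2):
    """Grow a random expression over flavor concentrations x[0..6]."""
    if depth == 0 or rng.random() < 0.3:
        if rng.random() < 0.7:
            return ("x", rng.randrange(N_FLAVORS))
        return ("const", rng.uniform(-1.0, 1.0))
    op = rng.choice(sorted(OPS))
    return (op, random_tree(rng, depth - 1), random_tree(rng, depth - 1))

def evaluate(tree, x):
    """Predicted score for one sample of concentrations x."""
    if tree[0] == "x":
        return x[tree[1]]
    if tree[0] == "const":
        return tree[1]
    return OPS[tree[0]](evaluate(tree[1], x), evaluate(tree[2], x))

def size(tree):
    """Node count, used here as the measure of (lack of) simplicity."""
    return 1 if tree[0] not in OPS else 1 + size(tree[1]) + size(tree[2])

def fitness(tree, samples, size_penalty=0.01):
    """Lower is better: mean squared error plus an assumed size penalty."""
    mse = sum((evaluate(tree, x) - y) ** 2 for x, y in samples) / len(samples)
    return mse + size_penalty * size(tree)

def paths(tree, path=()):
    """Yield the position of every subtree, root included."""
    yield path
    if tree[0] in OPS:
        yield from paths(tree[1], path + (1,))
        yield from paths(tree[2], path + (2,))

def get_at(tree, path):
    for i in path:
        tree = tree[i]
    return tree

def replace_at(tree, path, new):
    if not path:
        return new
    parts = list(tree)
    parts[path[0]] = replace_at(tree[path[0]], path[1:], new)
    return tuple(parts)

def crossover(a, b, rng):
    """Copy a, swapping one random subtree for a random subtree of b."""
    return replace_at(a, rng.choice(list(paths(a))),
                      get_at(b, rng.choice(list(paths(b)))))

def evolve(samples, generations=30, pop_size=100, seed=0, max_size=31):
    rng = random.Random(seed)
    pop = [random_tree(rng) for _ in range(pop_size)]
    for _ in range(generations):
        pop.sort(key=lambda t: fitness(t, samples))
        survivors = pop[: pop_size // 4]  # winnow out poor predictors
        children = []
        while len(survivors) + len(children) < pop_size:
            child = crossover(rng.choice(survivors), rng.choice(survivors), rng)
            if size(child) <= max_size:  # keep functions from bloating
                children.append(child)
        pop = survivors + children
    return min(pop, key=lambda t: fitness(t, samples))

def make_synthetic_samples(rng, n_samples=36):
    """Made-up data for one imaginary subject; not from the study."""
    samples = []
    for _ in range(n_samples):
        x = [rng.random() for _ in range(N_FLAVORS)]
        score = x[1] + 0.5 * x[4] * x[4] + rng.gauss(0, 0.05)
        samples.append((x, score))
    return samples

if __name__ == "__main__":
    data = make_synthetic_samples(random.Random(1))
    best = evolve(data)
    print(best, fitness(best, data))
```

Because the survivors are carried over unchanged, the best score can never get worse from one generation to the next. A weighted sum is only one way to balance the two criteria; treating accuracy and simplicity as separate objectives is another.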

Because O’Reilly and her colleagues’ method produces profiles of individual test subjects’ tastes, it can sort them into distinct groups. It could be, for instance, that test subjects tend to have strong preferences for either cinnamon or nutmeg but not both. By marketing one product to cinnamon lovers and another to nutmeg lovers, a company could do much better than by marketing one product to both. “For every one of these 36 flavors, someone hated it and someone liked it,” O’Reilly says. “If you try to identify a flavor that the whole panel likes, you end up settling for a little bit less.”
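A toy calculation shows why segmenting the panel can beat a single compromise product. The scores below are invented for illustration, and grouping each subject by his or her top-scoring flavor is an assumed stand-in for whatever grouping method the researchers actually use.

```python
from statistics import mean

# Hypothetical predicted scores (0 to 10) from per-subject models.
predicted = {
    "subject_a": {"cinnamon": 9, "nutmeg": 2, "blend": 6},
    "subject_b": {"cinnamon": 8, "nutmeg": 3, "blend": 6},
    "subject_c": {"cinnamon": 2, "nutmeg": 9, "blend": 6},
    "subject_d": {"cinnamon": 3, "nutmeg": 8, "blend": 5},
}

def best_single_product(scores):
    """Flavor with the highest average score across the whole panel."""
    flavors = next(iter(scores.values())).keys()
    return max(flavors, key=lambda f: mean(s[f] for s in scores.values()))

def group_by_favorite(scores):
    """Assign each subject to the flavor his or her model scores highest."""
    groups = {}
    for subject, s in scores.items():
        groups.setdefault(max(s, key=s.get), []).append(subject)
    return groups

single = best_single_product(predicted)
single_mean = mean(s[single] for s in predicted.values())
segmented_mean = mean(max(s.values()) for s in predicted.values())

if __name__ == "__main__":
    print(single, single_mean)
    print(group_by_favorite(predicted), segmented_mean)
```

With these made-up numbers the panel-wide winner is the blend, which nobody ranks first, while serving each group its own favorite raises the average score.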

O’Reilly and her colleagues haven’t had an opportunity to determine empirically whether their models correctly predict subjects’ responses to new flavors. So to establish their models’ accuracy, they instead built another model. First, they developed a set of mathematical functions that stand in for subjects’ true taste preferences. Then they showed that, given the limitations of particular test designs, their algorithms could still recover those preferences. Although they developed the model purely to validate their approach, O’Reilly says, flavor researchers were intrigued by the possibility of using it to develop more accurate and efficient test protocols.

“People have been playing with these [evolutionary] techniques for decades,” says Lee Spector, a professor of computer science at Hampshire College and editor-in-chief of the journal Genetic Programming and Evolvable Machines, where the MIT researchers’ latest paper appears. “One of the reasons that they haven’t made a big splash until recently is that people haven’t really figured out, I think, where they can pay off big.” Taste preference, Spector says, “is a pretty brilliant area in which to apply the evolutionary methods — and it looks as though they’re working, also, so that’s exciting.”
