CAMBRIDGE, Mass.--Like a high-tech version of a child's interactive "touch and feel" book, this computer interface lets you feel a virtual object to learn its shape, and whether it's rough as sandpaper, hard as rock or hot as fire. What use is that, you might ask? Plenty, if you're in the business of creating new ways for humans and computers to interact.

One of the stickiest problems in developing advanced human-computer interfaces is finding a way to simulate touch. Without it, virtual reality isn't very real.

Now a group of researchers at MIT's Artificial Intelligence Laboratory has found a way to communicate the tactile sense of a virtual object -- its shape, texture, temperature, weight and rigidity -- and let you change those characteristics through a device called the PHANToM haptic interface.

For instance, you could deform a box's shape by poking it with your finger, and actually feel the side of the box give way. Or you could throw a virtual ball against a virtual wall and feel the impact when you catch the rebound.

"In the same way that a video monitor displays visual or graphic information to your eyes, the haptic 'display' lets you feel the physical information with your finger," said Dr. J. Kenneth Salisbury, a principal research scientist in MIT's Department of Mechanical Engineering and head of haptics research at the AI Lab. "It's very unlike video in that you can modify a scene by touching it."

The original PHANToM device was developed a few years ago by Thomas Massie, then an undergraduate, and Dr. Salisbury; inspiration for the device grew from a collaboration between Dr. Salisbury and Dr. Mandayam Srinivasan of the Research Laboratory of Electronics. Since then, MIT's haptics researchers have continued to create enhancements, such as the ability to communicate a virtual object's temperature, texture and elasticity.

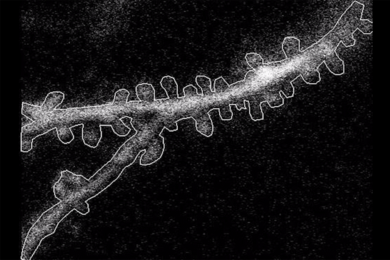

The PHANToM haptic interface is a small, desktop device that looks a bit like a desk lamp. Instead of a bulb on the end of the arm, it has a stylus grip or thimble for the user's fingertip. When connected to a computer, the device works sort of like a tactile mouse, except in 3-D. Three small motors give force feedback to the user by exerting pressure on the grip or thimble.

Although haptic devices predated PHANToM, those models primarily had been used to control remote robots that handled hazardous materials, said Dr. Salisbury. They cost up to $200,000 each and required a team of experts to develop the interface and software to adapt them for an application.

"The beauty of the PHANToM is people can use it within minutes of getting it," he said. "They can plug it in and get started. I like to compare it to the PC revolution. Once computers were enormous and prohibitively expensive. Now PCs are everywhere."

The PHANToM interface's novelty lies in its small size, relatively low cost (about $20,000) and its simplification of tactile information. Rather than displaying information from many different points, this haptic device provides high-fidelity feedback to simulate touching at a single point.
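To make the single-point idea concrete, here is a minimal sketch of how software might compute the force for one contact point pressing into a flat virtual wall. It is not the PHANToM's actual software: the function name `wall_force`, its parameters and the simple spring law are all assumptions chosen for illustration.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def wall_force(tip: Vec3, wall_point: Vec3, wall_normal: Vec3,
               stiffness: float) -> Vec3:
    """Return the force (newtons) to apply at the fingertip for a flat wall.

    Hypothetical helper, not a real device API. `wall_normal` must be a
    unit vector pointing out of the wall into free space; `stiffness`
    is in newtons per metre and positions are in metres.
    """
    # Signed distance from the wall surface along the normal.
    # A negative value means the tip has sunk into the wall.
    distance = sum((t - p) * n
                   for t, p, n in zip(tip, wall_point, wall_normal))
    if distance >= 0.0:
        # Free space: the motors exert no force.
        return (0.0, 0.0, 0.0)
    # Spring law: push back along the normal in proportion to depth.
    depth = -distance
    fx, fy, fz = (stiffness * depth * n for n in wall_normal)
    return (fx, fy, fz)
```

Under these assumptions, a fingertip 2 millimeters inside a wall with a stiffness of 1,000 newtons per meter would be pushed back out with a force of about 2 newtons. A real device would repeat a calculation like this many times per second so the wall feels solid rather than spongy.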

"Imagine closing your eyes, holding a pen and touching everything in your office. You could actually tell a lot about those objects from that single point of contact. You'd recognize your computer keyboard, the monitor, the telephone, desktop and so on," said Dr. Salisbury.

The PHANToM haptic interface is currently being manufactured by SensAble Technologies, Inc. in Cambridge. It has been sold in more than 17 countries to organizations such as Hewlett-Packard, GE, Toyota, Volkswagen, LEGO, Western Mining, Pennsylvania State University Medical School and Brigham and Women's Hospital, which are using it for applications ranging from medical training to industrial design.

Haptic devices are used in surgical training, allowing students to suture virtual vessels before they work on real tissue. Visually impaired people can also benefit from the interface, according to Dr. Salisbury, who said that mathematicians can feel the 3-D graphic equivalent of an equation and blind children can play specially designed computer games using the device.

Current enhancements at MIT include a thermal application developed by graduate student Mark Ottensmeyer. A thermo-electric cell under the user's finger actually heats up or cools down to the exact temperature of the virtual wall it touches. "The coolest thing is watching people's reaction when they feel the temperature change," said Mr. Ottensmeyer. He said that within the year, he hopes to raise a virtual object's temperature through the transference of heat from a user's fingertip.

Donald Green, also a graduate student in the AI Lab, has designed algorithms to permit the system to mimic the texture of sandpaper. When the user drags the stylus grip through the air, the haptic device actually makes it feel and sound as if the stylus is scraping sandpaper. Another enhancement lets you feel the 3-D sculpture you're creating on screen.
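The article does not describe how Mr. Green's algorithms work. As a rough illustration of one way a texture can be rendered at a single point, the sketch below treats the surface as a field of tiny sinusoidal bumps and pushes the stylus sideways in proportion to the local slope, so that dragging across it produces a rapid tug-and-release. The function name `texture_force`, the sinusoidal profile and the default bump sizes are all assumptions, not the MIT method.

```python
import math


def texture_force(x: float, normal_force: float,
                  amplitude: float = 0.0001,
                  wavelength: float = 0.001) -> float:
    """Sideways force (newtons) felt at position x (metres) on a bumpy surface.

    Hypothetical sketch: the surface height is modelled as
    h(x) = amplitude * sin(2*pi*x / wavelength), and the lateral force
    opposes the uphill direction in proportion to how hard the user
    presses (`normal_force`, in newtons).
    """
    # Slope of the height profile at x (dimensionless rise over run).
    k = 2.0 * math.pi / wavelength
    slope = amplitude * k * math.cos(k * x)
    # Climbing a bump (positive slope) pushes the stylus backward;
    # descending one (negative slope) pulls it forward.
    return -normal_force * slope
```

With the default values, the sideways force peaks at a little under two-thirds of the pressing force and changes sign every half millimeter, which is one plausible source of a gritty feel. Real sandpaper is irregular, so a more convincing version would likely add randomness to the bump heights and spacing.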

Haptics research elsewhere includes an enhancement for fabric rendering, which means that, in theory, researchers could create an infant's "Pat the Bunny" program with a virtual fuzzy fabric swatch.

"It's difficult to predict which of the many applications areas will dominate, but in the growing haptics community, there's palpable excitement," said Dr. Salisbury.

Funding for MIT's haptics research has been provided in part by the Office of Naval Research, DARPA, NASA, the National Institutes of Health and others.