Enabling computers to process visual information in much the same way that people do is the goal of Dr. Edward Adelson, who is presenting a report on his work to the American Association for the Advancement of Science later this month.

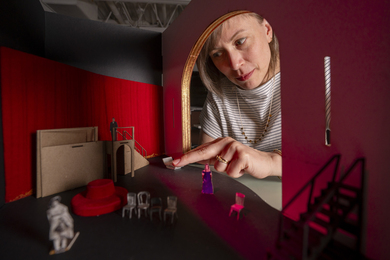

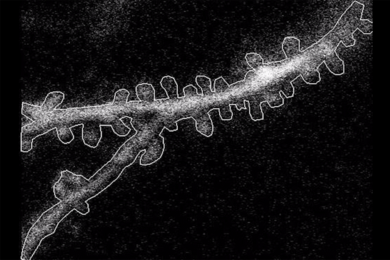

Dr. Adelson, associate professor of vision science in the Department of Brain and Cognitive Sciences and the Media Lab, is blending research in brain physiology, human perception and computer vision to learn more about how the human visual system works and how to apply those processes to digital images. In one application, he and his colleagues transform a moving video sequence into a set of overlapping layers. For example, in a sequence shot from a moving car, a tree moves in front of a house; the computer automatically decomposes the sequence into separate layers with independent motions. The video sequence can later be resynthesized from a description of the layers and their motions, so only that description needs to be sent. This description of the scene is more efficient and useful than the usual digital representations.
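To make the idea of resynthesis concrete, here is a minimal sketch of how a frame might be rebuilt from layers and their motions. It is an illustration only, not Dr. Adelson's software: the `Layer` class, the `render_frame` function, the use of `None` for transparent pixels and the restriction to whole-pixel sideways motion are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Pixel = Optional[int]  # None marks a transparent pixel (assumed convention)


@dataclass
class Layer:
    image: List[List[Pixel]]   # intensity map for this layer
    velocity: Tuple[int, int]  # (dx, dy) in pixels per frame (assumed: pure translation)


def render_frame(layers: List[Layer], t: int, width: int, height: int,
                 background: int = 0) -> List[List[int]]:
    """Rebuild frame t by painting the layers from back to front."""
    frame = [[background] * width for _ in range(height)]
    for layer in layers:  # later layers are nearer the camera and cover earlier ones
        dx, dy = layer.velocity
        offset_x, offset_y = dx * t, dy * t
        for y, row in enumerate(layer.image):
            for x, value in enumerate(row):
                if value is None:
                    continue  # transparent: whatever lies behind shows through
                fx, fy = x + offset_x, y + offset_y
                if 0 <= fx < width and 0 <= fy < height:
                    frame[fy][fx] = value
    return frame


# A one-row "scene": a static house (intensity 5) and a tree (intensity 9)
# that slides one pixel to the right in every frame.
house = Layer(image=[[5, 5, 5, 5, 5, 5]], velocity=(0, 0))
tree = Layer(image=[[9]], velocity=(1, 0))
layers = [house, tree]  # back to front

for t in range(3):
    print(t, render_frame(layers, t, width=6, height=1))
```

In this toy version, the whole sequence is described by two small images and two velocity pairs rather than by every pixel of every frame, which is the sense in which a layered description can be more compact.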

The technique is inspired by the processing that takes place in mid-level human vision, Dr. Adelson said. It uses information about motion, texture, surfaces and depth, but does not depend on high-level processes such as object recognition.

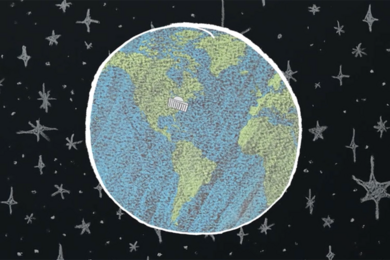

Dr. Adelson's work, which is funded by the Media Lab's Television of Tomorrow program, has potentially broad implications for the efficiency of video transmission and storage, and for image editing and manipulation. Standard digital video systems require data rates on the order of two to eight megabits per second, even after compression with state-of-the-art encoding systems such as MPEG (Moving Picture Experts Group).

To further increase the efficiency, Dr. Adelson said, "we're trying to squeeze bits out while still transmitting an image that's visually faithful as far as the human eye is concerned." With further development, the layered representation may allow another factor of 10 in compression. As a bonus, the images are represented in a more meaningful format that is useful for image editing and indexing.
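As a rough illustration of what a further factor of 10 would mean, the short calculation below applies it to the two-to-eight-megabit range quoted for standard digital video. The factor is the hoped-for gain mentioned above, not a measured result, and the function names and the one-hour storage figure are assumptions added for the example.

```python
MPEG_RATES_MBPS = (2.0, 8.0)  # range quoted for standard digital video
EXTRA_FACTOR = 10             # hoped-for additional compression, not a measurement


def layered_rate(mpeg_rate_mbps: float, factor: float = EXTRA_FACTOR) -> float:
    """Data rate in megabits per second if the extra factor were achieved."""
    return mpeg_rate_mbps / factor


def megabytes_per_hour(rate_mbps: float) -> float:
    """Storage for one hour of video, using 8 bits per byte and 10**6 bytes per megabyte."""
    return rate_mbps * 3600 / 8


for rate in MPEG_RATES_MBPS:
    reduced = layered_rate(rate)
    print(f"{rate:.1f} Mbit/s -> {reduced:.1f} Mbit/s; "
          f"{megabytes_per_hour(rate):.0f} MB/hour -> {megabytes_per_hour(reduced):.0f} MB/hour")
```

Under these assumptions, the required rate would fall to between 0.2 and 0.8 megabits per second.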

A version of this article appeared in MIT Tech Talk on February 15, 1995.