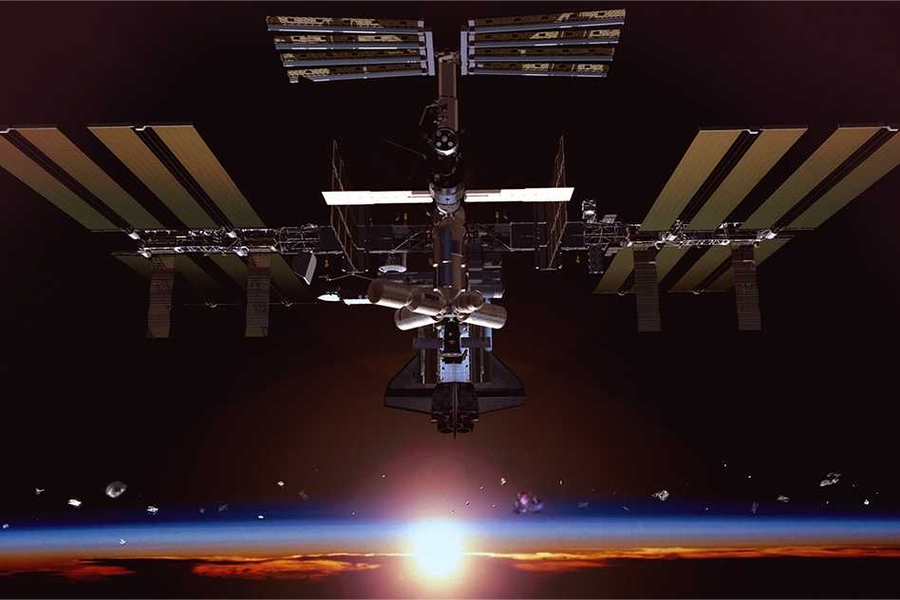

Objects in space tend to spin, and they spin in a way that’s totally different from the way they spin on Earth. Understanding how objects are spinning, where their centers of mass are, and how their mass is distributed is crucial to any number of actual or potential space missions, from cleaning up debris in the geosynchronous orbit favored by communications satellites to landing a demolition crew on a comet.

In a forthcoming issue of the Journal of Field Robotics, MIT researchers will describe a new algorithm for gauging the rotation of objects in zero gravity using only visual information. And at the International Conference on Intelligent Robots and Systems this month, they will report the results of a set of experiments in which they tested the algorithm aboard the International Space Station.

On all but one measure, their algorithm was very accurate, even when it ran in real time on the microprocessor of a single, volleyball-size experimental satellite. On the remaining measure, which indicates the distribution of the object’s mass, the algorithm didn’t fare quite as well when running in real time — although its estimate may still be adequate for many purposes. But it was much more accurate when it had slightly longer to run on a more powerful computer.

Space trash

“There are satellites that are basically dead, that are in the ‘geostationary graveyard,’ a few hundred kilometers from the normal geostationary orbit,” says Alvar Saenz-Otero, a principal research scientist in MIT’s Department of Aeronautics and Astronautics. “With over 6,000 satellites operating in space right now, people are thinking about recycling. Can we get to that satellite, observe how it’s spinning, and learn its dynamic behavior so that we can dock to it?”

Moreover, “there’s a lot of space trash these days,” Saenz-Otero adds. “There are thousands of pieces of broken satellites in space. If you were to send a supermassive spacecraft up there, yes, you could collect all of those, but it would cost lots of money. But if you send a small spacecraft, and you try to dock to a small, tumbling thing, you also are going to start tumbling. So you need to observe that thing that you know nothing about so you can grab it and control it.”

Joining Saenz-Otero on the paper are lead author Brent Tweddle, who was an MIT graduate student in aeronautics and astronautics when the work was done and is now at NASA’s Jet Propulsion Laboratory; his fellow grad student Tim Setterfield; AeroAstro Professor David Miller; and John Leonard, a professor of mechanical and ocean engineering.

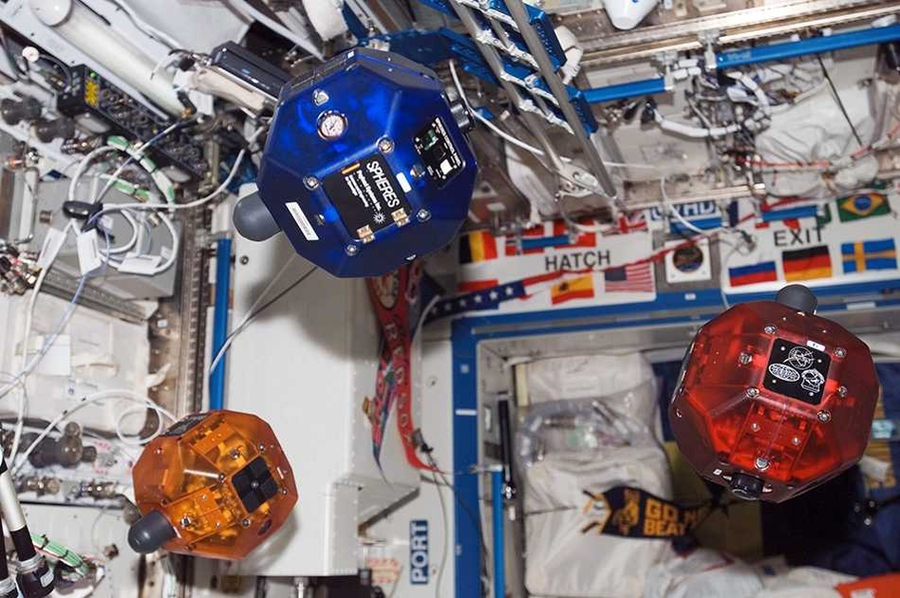

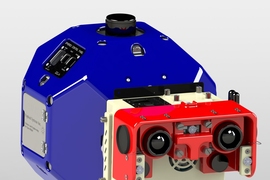

The researchers tested their algorithm using two small satellites deployed to the space station through MIT’s SPHERES project, which envisions that herds of coordinated satellites the size of volleyballs would assist human crews on future space missions. One SPHERES satellite spun in place while another photographed it with a stereo camera.

Cart and horse

One approach to determining the dynamic characteristics of a spinning object, Tweddle explains, would be to first build a visual model of it and then base estimates of its position, orientation, linear and angular velocity, and inertial properties on analysis of the model. But the MIT researchers’ algorithm instead begins estimating all of those characteristics at the same time.

“If you’re just building a map, it’ll optimize things based on just what you see, and put no constraints on how you move,” Tweddle explains. “Your trajectory will likely be very jumpy when you finish that optimization. The spin rate’s going to jump around, and the angular velocity is not going to be smooth or constant. When you add in the dynamics, that actually constrains the motion to follow a nice, smooth, flowing trajectory.”
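The effect Tweddle describes can be illustrated with a deliberately simplified, one-dimensional sketch. It is not the researchers' algorithm, which jointly estimates full three-dimensional pose, velocity, and inertial properties; it only assumes a single rotation angle observed with noise and a constant spin rate. All names and numbers here (`true_rate`, the noise level, the frame interval) are hypothetical.

```python
import random

def finite_difference_rates(times, angles):
    """Spin rate estimated frame to frame, with no motion model."""
    return [
        (angles[k + 1] - angles[k]) / (times[k + 1] - times[k])
        for k in range(len(times) - 1)
    ]

def constant_rate_fit(times, angles):
    """Least-squares slope of angle vs. time, i.e. assume a constant spin rate."""
    n = len(times)
    t_mean = sum(times) / n
    a_mean = sum(angles) / n
    num = sum((t - t_mean) * (a - a_mean) for t, a in zip(times, angles))
    den = sum((t - t_mean) ** 2 for t in times)
    return num / den

rng = random.Random(0)              # fixed seed so the run is repeatable
true_rate = 0.5                     # rad/s, hypothetical
times = [0.1 * k for k in range(50)]
angles = [true_rate * t + rng.gauss(0.0, 0.02) for t in times]  # noisy "measurements"

fd = finite_difference_rates(times, angles)
fit = constant_rate_fit(times, angles)
fd_spread = max(fd) - min(fd)       # how much the unconstrained estimate jumps around

print(f"frame-to-frame estimates span {fd_spread:.2f} rad/s")
print(f"constant-rate fit: {fit:.3f} rad/s (true value {true_rate})")
```

The frame-to-frame estimates scatter widely because each one amplifies measurement noise, while the fit that assumes smooth motion stays close to the true rate. That is the toy analogue of adding dynamics to the optimization.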

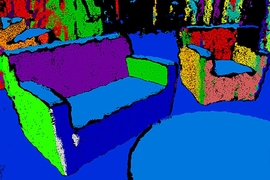

To build up all its estimates simultaneously, the algorithm needs to adopt a probabilistic approach. Its first guess about, say, angular velocity won’t be perfectly accurate, but its relationship to the correct value can be characterized by a probability distribution. The standard way to model probabilities is as a Gaussian distribution, the bell curve familiar from, for example, the graph of the range of heights in a population.

“Gaussian distributions have a lot of probability in the middle and very little probability out at the tails, and they go from positive infinity to negative infinity,” Tweddle says. “That doesn’t really map well with estimating something like the mass of an object, because there’s a zero probability that the mass of the object is going to be negative.” The same is true of inertial properties, Tweddle says, which are mathematically more complicated but are, in some respects, analogously constrained to positive values.
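A quick calculation shows the mismatch. The sketch below assumes a hypothetical mass estimate with a mean of 1.0 and a standard deviation of 0.5 (illustrative numbers, not values from the experiments) and computes how much probability a Gaussian with those parameters places on negative masses.

```python
import math

def gaussian_cdf(x, mean, std):
    """Probability that a Gaussian random variable falls below x."""
    return 0.5 * (1.0 + math.erf((x - mean) / (std * math.sqrt(2.0))))

# Hypothetical estimate of a positive quantity such as mass.
mean_mass = 1.0
std_mass = 0.5

p_negative = gaussian_cdf(0.0, mean_mass, std_mass)
print(f"probability assigned to negative mass: {p_negative:.4f}")
```

Zero lies two standard deviations below the mean here, so the Gaussian assigns a little over 2 percent of its probability to physically impossible values. The wider the uncertainty relative to the mean, the worse the mismatch gets.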

Rational solution

What Tweddle discovered — “through trial and error,” he says — is that the natural log of the ratio between moments of inertia around the different rotational axes of the object could, in fact, be modeled by a Gaussian distribution. So that’s what the MIT algorithm estimates.
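The appeal of a log-ratio parameterization can be seen in a short sketch. The details here are assumptions made for illustration and are not taken from the paper: the two ratios chosen (`k1 = ln(Ix/Iy)` and `k2 = ln(Iy/Iz)`), the normalization `Iz = 1`, and the means and spreads of the Gaussians are all hypothetical.

```python
import math
import random

def inertia_from_log_ratios(k1, k2):
    """Recover principal moments of inertia, up to scale, from log ratios.

    Assumed convention (hypothetical): k1 = ln(Ix/Iy), k2 = ln(Iy/Iz),
    with Iz fixed at 1 because ratios alone do not set the overall scale.
    """
    iz = 1.0
    iy = iz * math.exp(k2)
    ix = iy * math.exp(k1)
    return ix, iy, iz

rng = random.Random(1)  # fixed seed so the run is repeatable

# Draw the log ratios from Gaussians; any real number is a valid log ratio.
samples = [
    inertia_from_log_ratios(rng.gauss(0.3, 1.0), rng.gauss(-0.2, 1.0))
    for _ in range(1000)
]

# Exponentiating maps every sample back to strictly positive moments.
all_positive = all(moment > 0.0 for sample in samples for moment in sample)
print("all sampled moments positive:", all_positive)
```

Because the exponential of any real number is positive, a Gaussian over the log ratios never produces a negative moment of inertia, however wide the distribution is.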

“That’s a novel modeling approach that makes this type of framework work a lot better,” Tweddle says. “Now that I look at it, I think, ‘Why didn’t I think of that in the first place?’”

“The rationale there is that we may need to, or want to, go up and service satellites that have been up there a long time for which those properties [such as center of mass and inertia] aren’t well known,” says Larry Matthies, supervisor of the Computer Vision Group at the Jet Propulsion Laboratory, “either because the original design data is hard to get our hands on, or not available, or — this may be less plausible — the numbers have changed on orbit from the original design, or maybe it’s actually somebody else’s satellite that we want to go up and mess around with.”

Moreover, Matthies says, even in cases in which design data are available, satellites may have mobile appendages whose position significantly affects their moments of inertia. “Maybe there are articulations on the satellite, and how those properties are affected by the various booms and things has to be considered,” he says. “If there’s some uncertainty in that geometry, again, you would need this kind of information.”

The work was funded by both NASA and the U.S. Defense Advanced Research Projects Agency (DARPA).