Consider the following scenario: A scout surveys a high-rise building that’s been crippled by an earthquake, trapping workers inside. After looking for a point of entry, the scout carefully navigates through a small opening. An officer radios in, “Go look down that corridor and tell me what you see.” The scout steers through smoke and rubble, avoiding obstacles and finding two trapped people, reporting their location via live video. A SWAT team is then sent to lead the workers safely out of the building.

Despite its heroics, though, the scout is impervious to thanks. It just sets its sights on the next mission, like any robot would do.

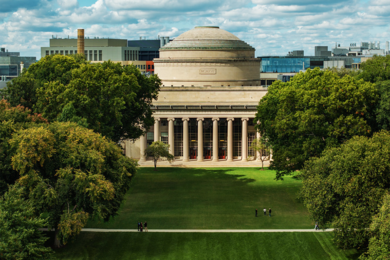

In the not-too-distant future, such robotics-driven missions will be a routine part of disaster response, predicts Nicholas Roy, an MIT associate professor of aeronautics and astronautics. From Roy’s perspective, robots are ideal for dangerous and covert tasks, such as navigating nuclear disasters or spying on enemy camps. They can be small and resilient — but more importantly, they can save valuable manpower.

The key hurdle to such a scenario is robotic intelligence: Flying through unfamiliar territory while avoiding obstacles is an incredibly complex computational task. Understanding verbal commands in natural language is even trickier.

Both challenges are major objectives in Roy’s research — and with both, he aims to design machine-learning systems that can navigate the noise and uncertainty of the real world. He and a team of students in the Robust Robotics Group, in MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL), are designing robotic systems that “do more things intelligently by themselves,” as he puts it.

For instance, the team is building micro-aerial vehicles (MAVs), about the size of a small briefcase, that navigate independently, without the help of a global positioning system (GPS). Most drones depend on GPS to get around, which limits the areas they can cover. In contrast, Roy and his students are outfitting quadrotors — MAVs propelled by four mini-chopper blades — with sensors and sensor processing, to orient themselves without relying on GPS data.

“You can’t fly indoors or quickly between buildings, or under forest canopies stealthily if you rely on GPS,” Roy says. “But if you put sensors onboard, like laser range finders and cameras, then the vehicle can sense the environment, it can avoid obstacles, it can track its own position relative to landmarks it can see, and it can just do more stuff.”

The group is also building “social robots” that understand natural language. Roy and his students are mathematically modeling the way humans speak to one another, using the models to build robots people can easily interact with. So far, the team has programmed several vehicles to process and respond to natural language, including a robotic forklift that follows commands such as, “Pick up the tire pallet off the truck and set it down.”

Hitting the ground flying

Roy’s first experience with robotics was as an undergraduate at McGill University in Montreal, Canada. During his junior year, the Vancouver native worked for a summer at the university’s Centre for Intelligent Machines, on what he calls “a pretty crazy project.” At the time, in the mid-1990s, the sensors available to track a robot’s position were fairly limited. Often, scientists used wheel odometry — the number of wheel rotations — to estimate a robot’s distance traveled and relative position.

However, the accuracy of this method varied with the surfaces the robot traversed. So Roy and McGill computer scientist Gregory Dudek set about characterizing various surfaces — such as wood, carpet and cement — based on their sounds. The experience inspired Roy to work toward adapting robotics to real-life scenarios.
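As a rough illustration of the wheel odometry described above, the sketch below converts wheel rotation counts into a distance traveled and an updated position. It assumes a hypothetical two-wheeled (differential-drive) robot; the function name and the `wheel_radius` and `wheel_base` parameters are illustrative and not taken from Roy and Dudek's project. It also assumes the wheels never slip, which is precisely the assumption that breaks down on different surfaces.

```python
import math


def odometry_step(x, y, heading, left_rotations, right_rotations,
                  wheel_radius, wheel_base):
    """Dead-reckoning pose update for a hypothetical two-wheeled robot.

    Assumes no wheel slip: each rotation moves the wheel exactly one
    circumference along the ground.
    """
    circumference = 2.0 * math.pi * wheel_radius
    d_left = left_rotations * circumference    # distance rolled by left wheel
    d_right = right_rotations * circumference  # distance rolled by right wheel

    d_center = (d_left + d_right) / 2.0        # distance moved by robot center
    d_heading = (d_right - d_left) / wheel_base  # change in heading (radians)

    # Move along the average heading over the step (midpoint approximation).
    mid_heading = heading + d_heading / 2.0
    x += d_center * math.cos(mid_heading)
    y += d_center * math.sin(mid_heading)
    return x, y, heading + d_heading


# One full rotation of both wheels drives the robot straight ahead
# by one wheel circumference.
pose = odometry_step(0.0, 0.0, 0.0, 1.0, 1.0, wheel_radius=0.1, wheel_base=0.5)
```

Because each step builds on the previous estimate, any slip on carpet or cement accumulates over time, which is why knowing the surface type could help correct the estimate.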

“My sense was, by working with real data and real robots in the real world, you could get a much clearer sense about what’s hard about intelligence,” Roy says. “I still think that’s true.”

After graduating with a bachelor’s degree in physics and a master’s in computer science, Roy headed south to pursue a PhD at Carnegie Mellon University. There, he worked on two major autonomous robot projects: MINERVA, a talking, interactive museum tour guide; and Pearl, a home health aide for the elderly. Both projects required the robots to interact with humans; MINERVA also needed to navigate a noisy, crowded museum. Roy’s team equipped the robots with a suite of sensors and processing software, enabling them to map their environments and communicate with humans.

When Roy finished his PhD, he took the knowledge he’d gained in ground robotics to the skies. In 2003, he joined MIT’s Department of Aeronautics and Astronautics as an assistant professor. The first project he tackled was a seemingly simple one: to design a robot that can estimate its position and fly through an open window.

“For a ground robot, this is trivial,” Roy says. “You could reasonably have it as a homework problem at the undergraduate level. But for an air vehicle, there are many things that make it hard.”

It took Roy and his students, Abraham Bachrach, Ruijie He and Sam Prentice, two and a half years to accomplish the task. In 2008, the team demonstrated that its quadrotor, outfitted with sensors and processors, was able to map an unfamiliar environment and navigate through a cardboard cutout. In 2009, Roy entered the vehicle in the International Aerial Robotics Competition (IARC), where it had to navigate an intricate system of hallways, dead ends and openings to locate an LED control panel. The MIT team successfully completed the mission that year, becoming the first team in the 19-year history of the IARC to do so on its first attempt. Roy and his students won top honors along with $10,000 in prize money.

Roy is now experimenting with 3-D sensing, equipping quadrotors with stereo cameras to sense and build 3-D maps of an environment. He’s also making inroads on a new challenge: designing intelligent fixed-wing vehicles that can fly over larger distances, such as to track weather patterns or animal migrations.

However, Roy says the advantage of speed is also a challenge: While a quadrotor can hover in place to estimate its location, a small plane cannot. The problem, while significant, is among the many challenges Roy is eager to tackle.

“You feel like you’re enjoying the risk process as much as anything else,” Roy says. “And that’s really important to me, to have fun with the things I’m doing.”
