When the devastating earthquake centered near Sendai, Japan, struck in March, Kenneth Oye knew he was in a safe place: a parking lot in Tokyo, with a delegation representing the U.S.-Japan Council, a nonprofit group devoted to strengthening relations between the countries. After all, open areas are safe, and besides, Japan’s earthquake-safety codes are among the world’s most stringent.

On the other hand, when word got out that the nuclear reactors near Fukushima were suffering problems, Oye became concerned. Japan’s nuclear-power industry has a significant history of troubles, such as an accident and management-ordered cover-up at one plant in 1999; Oye, who has studied the subject, says there have been instances of a “lack of integrity and forthrightness” in the country when it comes to nuclear power.

How can the regulatory system in one country be so proactive about one type of risk and so ineffective about another? In the case of Japan, Oye suggests, it is quite literally a matter of vision. In a country with regular earthquakes, the public can see when building codes are lacking. But with nuclear power, problems usually remain out of public view, reducing pressure on the country’s regulators.

“Sometimes you need visible manifestations of failure and visible manifestations of improvement, and this is a place where it’s clear,” Oye says of Japan’s mixed record on public safety.

As an associate professor in MIT’s Department of Political Science and the Engineering Systems Division, Oye has become an expert in the way governments assess the potential risks posed by new technologies. And since 2004, as a founder of MIT’s Program on Emerging Technologies (PoET), Oye has been working to create an ongoing forum through which policymakers, scientists and other scholars can discuss the best ways of regulating technologies such as synthetic biology and ubiquitous computing.

“These issues are not simply going to vanish if we don’t talk about them,” Oye says.

Planned adaptation: Preparing for change

Oye’s own intellectual trajectory started well outside the study of technology. He received his undergraduate degree in economics and political science from Swarthmore College and his PhD in political science from Harvard University, then spent years focusing on Cold War and post-Cold War U.S. foreign policy. By the mid-1990s, however, Oye started focusing more on international technology policies, especially in the area of energy. He even helped draft a report at the time, presented to Japanese officials, suggesting improvements in Japan’s nuclear-power system.

More recently, Oye has become a champion of what he calls “planned adaptation” in regulation. Because the technologies in any given industry, along with our knowledge of their risks and benefits, can change rapidly, he argues that government officials should design regulatory systems to incorporate advances in knowledge.

This is not a common practice, as Oye’s research has shown. In one recent paper published in Technological Forecasting & Social Change, Oye and two co-authors examined 32 federal regulatory programs in the areas of health, environment and technological safety where adaptive regulation was formally required. In only 16 of the cases was there enough evidence to draw conclusions about the programs; in just five of those cases, the programs systematically updated their regulations to account for new evidence.

As it happens, some of these safety programs, such as the National Transportation Safety Board’s investigations of air crashes, also involve mishaps that can be highly visible. Oye thinks the long-term decline in U.S. air fatalities derives in part from regulatory changes following some high-profile air disasters of the late 1970s. Still, he acknowledges, there are many factors involved in the adoption of a solid regulation scheme, including the willingness and ability of a given industry to adapt.

“None of us is naïve enough to say the improvement came entirely from these reforms,” Oye says of the practices of the Federal Aviation Administration (FAA). “But it is fair to say the FAA pressure [on industry] came before the results changed.”

A bug in the system

Oye believes that oversight regimes that update themselves have a better chance of consistently dealing with new risks. One precept of PoET is that technology is highly unpredictable — often to our benefit, but at the cost of making it harder to anticipate problems, too.

“Even if you use the best methods to project the implications of technologies, you’re still going to get it wrong frequently,” Oye says. For that matter, he adds, “technologists themselves are not particularly good at projecting the implications of their technologies, and smart technologists often decline to offer such projections.”

PoET, which was launched by a five-year grant from the National Science Foundation, regularly seeks to link technologists, industry representatives and regulators in workshops where all parties can learn about the risks of cutting-edge technologies. In January 2011, for instance, PoET helped organize one such event at the Woodrow Wilson International Center for Scholars, a part of the Smithsonian Institution.

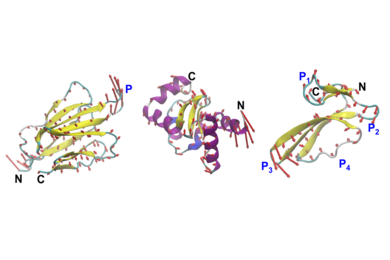

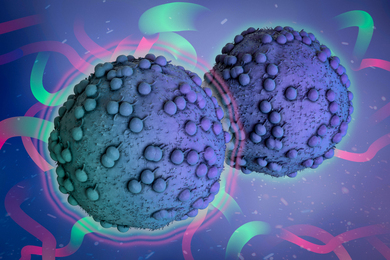

In this case, the workshop’s focus was on synthetic biology, with an emphasis on engineered organisms. Participants examined two versions of a bug designed to serve as an arsenic detector in groundwater in South Asia: one using a standard E. coli strain, and the other using re-engineered “rE. coli” modified by biologists including George Church of Harvard and Peter Carr of MIT to limit horizontal gene flow, the transfer of genes among species. “Everyone understood that the basic idea [of the genetic engineering] is to make the bug more exotic, to limit the likelihood of gene flow and make it safer,” Oye says. “But the weirder the bug is, the more stringent the regulatory hurdles. The mismatch between regulatory templates and management of the bug was obvious.”

As with many areas of technology, synthetic biology will prompt more discussion in the near future. And PoET seems likely to maintain an active role in this public conversation.

“A program like PoET can provide a very rare opportunity for people from political science, history and the physical sciences to work together,” says Gautam Mukunda PhD ’10, an assistant professor at Harvard Business School who participated in the program as a graduate student. “Political decisions have to be made in environments that are deeply uncertain. … That kind of discussion is going to become more and more important as technology takes up a larger space in our politics.”

For his part, Oye places a premium on avoiding the kind of silence that surrounded Japanese nuclear power in past decades. “Do people sometimes hype the dangers and risks of technology?” he asks. “Of course. But you shouldn’t be afraid to raise concerns. The cooperative ethos of the technologists we work with is sensible. Why not reach out, and try to do things right?”
