In the 1950s and ’60s — when MIT’s Warren McCulloch and Walter Pitts were building networks of artificial neurons, John McCarthy and Marvin Minsky were helping to create the discipline of artificial intelligence and Noam Chomsky was revolutionizing the study of linguistics — hopes were high that tools emerging from the new science of computation would soon unravel the mysteries of human thought.

As the computational complexity of even the most common human cognitive tasks became clear, however, researchers trimmed their sails. Today, “artificial intelligence,” or AI, generally refers to the type of technology that helps focus point-and-shoot cameras or lets people verbally navigate airline reservation systems.

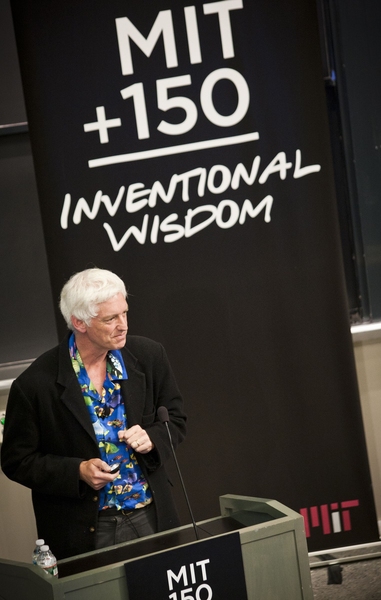

The central theme of “Brains, Minds and Machines” — the last of a series of symposia celebrating MIT’s 150th anniversary — is that it’s time for artificial-intelligence research, cognitive science and neuroscience to get ambitious again. The symposium was, in part, a launch party for MIT’s Intelligence Initiative, or I2, a new program spanning all three disciplines and aiming, as its web site puts it, to “answer the cosmic question of just how intelligence works.”

The conference kicked off Tuesday, May 3, with an evening session titled “The Golden Age,” featuring a panel of pioneers in the study of the mind. Sydney Brenner, who shared the 2002 Nobel Prize in physiology or medicine for discoveries concerning the genetic regulation of organ development and programmed cell death, cautioned that genes are not simple blueprints for building organisms, but rather participants in a dynamic process that reflects many different stages of our evolutionary history. A given gene’s role may be precisely to inhibit the activity of some other gene that had previously been selected for, said Brenner, a senior distinguished fellow at the Salk Institute for Biological Studies.

Minsky, a professor emeritus at the MIT Media Lab, was the only panelist who seemed content to reminisce about the 1950s and ’60s — the “golden age” of the panel’s title. He recalled that in the early 1960s, when MIT’s AI Lab was getting off the ground, he offered to send some of his graduate students to Brenner’s lab to help automate mapping of the C. elegans roundworm’s nervous system — a task that ended up taking Brenner 20 years. Minsky said Brenner refused, saying, “‘All of my students will realize that your subject is more exciting — and easier.’ Do you remember that?” Minsky asked. Brenner grinned and nodded.

Among the panel’s other highlights was Chomsky’s contention that, because humans are able to instantly infer the syntactic relationships between words that are far apart from each other in long sentences, the brain must represent sentence structure hierarchically rather than linearly. Emilio Bizzi, an Institute Professor and co-founder of the McGovern Institute for Brain Research, described his lab’s discovery that the bewildering number of neural connections between the spinal cord and the muscles of the trunk and limbs are grouped into a finite set of control modules; different movements, he said, are the result of activating different modules in different orders. That discovery raises as many questions as it answers, but Bizzi said he’s confident that the “many labs that are pursuing [this] in machines” all but ensure progress in this area.

Revisiting robotics

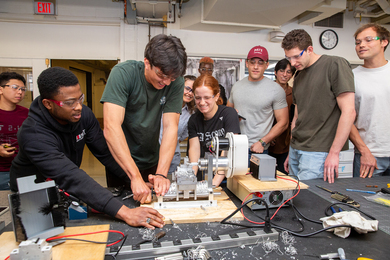

At Wednesday’s first session, “Vision and Action,” panelists described recent advances in robotics and discussed the obstacles still facing the field.

Rodney Brooks, the Panasonic Professor Emeritus of Robotics at MIT and founder of iRobot, said developing robots that can function in the real world and interact with their surroundings has been a long, difficult process. “Perception is effortless for people, but in robots, it’s still largely unsolved,” he said.

As Brooks stood on the stage of Kresge Auditorium, he reached into his pocket and pulled out a set of keys, declaring he was not at all confident he could design a robot to do the same simple task. In fact, it would be far more difficult than designing a robot-controlled plane that could fly from Boston to Los Angeles, he said: The set of conditions that need to be taken into account to lift keys from a pocket changes every few milliseconds, while flying usually involves long periods with no change in conditions.

Most of the early successes in robotics have come in designing robots to perform relatively simple tasks such as vacuuming a room, a task now performed by millions of iRobot’s Roomba robots. The company’s machines are also being used to measure radiation levels at Japan’s crippled Fukushima nuclear reactor, Brooks said.

Brooks recently retired from MIT to work full time at his new venture, Heartland, which is developing a new generation of industrial robots that he hopes will help revive manufacturing in the United States.

Panelist Takeo Kanade, a professor at the Robotics Institute of Carnegie Mellon University, described the driverless cars he and his students have built, making use of computerized vision.

As Kanade put it, the key to achieving computer vision is developing a system that can process and describe a scene, which necessitates identifying its individual components. One of the biggest obstacles is the difficulty — for machines — of recognizing the context in which an object appears.

The importance of teamwork

At a panel titled “Social Cognition and Collective Intelligence,” scientists delved into the phenomenon of humans pooling their intelligence, sometimes aided by computers. Moderator Thomas Malone, director of MIT’s Center for Collective Intelligence, noted in his introduction that “intelligence is not just something that happens inside individual brains. It also arises within groups of individuals.”

For thousands of years, groups such as families, countries, armies and companies have been behaving in ways that seem intelligent. More recently, the Internet has expanded collective intelligence even further, with the development of sites such as Wikipedia and Google that make it easy to consolidate individual contributions.

Humans and computers working together can form a collective far more intelligent than any single person or computer, Malone said.

“As the world becomes more and more highly connected, through all kinds of electronic devices, it will be increasingly useful to think of all humans and computers on the planet as a single global brain,” he said.

The 25-year itch

On Wednesday evening, a panel addressed the question, “Why is it time to try again?” Moderator Tomaso Poggio, the Eugene McDermott Professor in the Brain Sciences and Human Behavior and director of the Center for Biological and Computational Learning, suggested that, like the influenza virus, an infectious interest in artificial intelligence captures the scientific community about every 25 years. In the 1960s, Marvin Minsky’s work started the first wave; then around 1985, interest in machine learning and neural networks took off. Now, the time is right for another such wave, he said, in part because of the massive computing power now available.

MIT President Susan Hockfield, a member of the panel, said she was honored to participate, even though, as she noted, she left her career as a neuroscientist behind when she came to MIT six and a half years ago. She agreed with Poggio that now is the time to “push neuroscience toward a new understanding of intelligence,” adding that because of its interdisciplinary approach, “MIT is the place where we can make real inroads into some of these problems.”

Robert Desimone, the Doris and Don Berkey Professor of Neuroscience and director of the McGovern Institute for Brain Research, described some of the new tools now available to neuroscientists to trace anatomical brain connections and monitor brain activity through electrical recording or imaging. He also mentioned the budding field of optogenetics, which allows researchers to control neurons with light.

These tools don’t make discoveries on their own, Desimone added. “What it takes is putting very powerful tools into the hands of very clever people,” he said.

Biologist and Institute Professor Phillip Sharp talked about the importance of genomics in learning about how the human brain works. In the 10 years since the first draft of the human genome was completed, he pointed out, scientists have already identified genetic markers for psychotic diseases such as schizophrenia.

Co-moderator Josh Tenenbaum, an associate professor of computational cognitive science, pointed out the limitations of the highly specialized artificial intelligence systems that have been built so far. “If you take a well-defined task and throw enough resources at it, you can build a system that achieves human-level, even expert-level performance at that task,” he said. However, there’s no such thing as a robot that can learn languages, play chess, sing and dance, negotiate a job offer and interact in social relationships — that is, no robot that can do everything a human can do.
