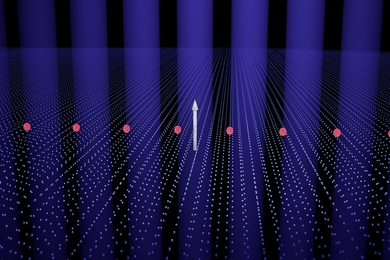

Computers can usually out-compute the human brain, but some tasks, such as visual object recognition, are easy for the brain yet very challenging for computers. To explore this phenomenon, neuroscientists have long used rapid categorization tasks, in which subjects indicate whether an object from a specific class (such as an animal) is present in an image.

Now, MIT researchers report that a computer model designed to mimic the way the brain processes visual information performs as well as humans do on rapid categorization tasks. The model even tends to make errors similar to those humans make, possibly because it so closely follows the organization of the brain's visual system.

The work, which appears in the online early edition of the Proceedings of the National Academy of Sciences the week of April 2, could lead to better artificial vision systems and augmented sensory prostheses.

"We created a model that takes into account a host of quantitative anatomical and physiological data about the visual cortex and tries to simulate what happens in the first 100 milliseconds or so after we see an object," explained senior author Tomaso Poggio, the Eugene McDermott Professor of Brain and Cognitive Sciences and a member of MIT's McGovern Institute for Brain Research.

"This is the first time a model has been able to reproduce human behavior on that kind of task," said Poggio. His co-authors are Aude Oliva, a cognitive neuroscientist in the MIT Department of Brain and Cognitive Sciences, and Thomas Serre, a postdoctoral associate at the McGovern Institute.

The work supports a long-held hypothesis that rapid categorization happens without any feedback from cognitive or other areas of the brain. In other words, rapid or immediate object recognition occurs in one feed-forward sweep through the ventral stream of the visual cortex.

The results further indicate that the model can help neuroscientists make predictions and drive new experiments to explore brain mechanisms involved in human visual perception, cognition and behavior.

"We have not solved vision yet," Poggio cautioned, "but this model of immediate recognition may provide the skeleton of a theory of vision."

For cognitive neuroscientists, these results add to the converging evidence for the feed-forward hypothesis of rapid categorization.

"There could be other mechanisms involved, but this is a big step forward in understanding how humans see," said Oliva. "For me, it's putting light in the black box and gives direction to design new experiments, for instance to explore perception in clutter."

Earlier this year the Poggio team demonstrated that this biologically inspired computer model can also learn to recognize objects from real-world examples and identify relevant objects in complex scenes.

This research was supported by grants from the National Institutes of Health, Defense Advanced Research Projects Agency, Office of Naval Research and National Science Foundation.

A version of this article appeared in MIT Tech Talk on April 4, 2007.