A powerful, massively parallel computer being developed in the Artificial Intelligence Laboratory has the potential to greatly increase the speed-to-cost ratio for solving problems, and its designers hope it will fuel a major shift in how such computers are conceived of and built.

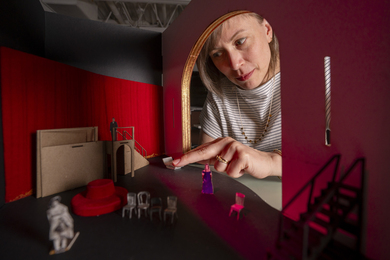

The "J-Machine," produced by William J. Dally, associate professor of electrical engineering and computer science, and several of his graduate students, currently incorporates 1,024 nodes, each consisting of a computer chip along with one megabyte of memory. These nodes, or message-driven processors (MDPs), are packed onto 16 boards with 64 MDPs apiece; the boards are stacked together so that each node can communicate with its four neighbors on the same board and with the two nodes directly above and below it, explained Andrew Chang, a research staff member who helped with the system design. Chip design is the work of graduate students Richard Lethin, John Keen and Stuart Fiske, former graduate student Peter Nuth and research scientist Michael Noakes.
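The node layout described above can be sketched as a three-dimensional grid. This is only an illustration: the article gives 16 boards of 64 MDPs, but the 8-by-8 arrangement on each board, the absence of wraparound links at the edges, and the names `neighbors` and `Coord` are assumptions made for the example.

```python
from typing import List, Tuple

# Assumed layout: the article states 16 boards of 64 MDPs each; the
# 8 x 8 arrangement per board is an assumption for illustration.
BOARD_WIDTH = 8
BOARD_HEIGHT = 8
NUM_BOARDS = 16

Coord = Tuple[int, int, int]  # (x, y, board index)


def neighbors(node: Coord) -> List[Coord]:
    """Return a node's directly connected neighbors.

    Assumes no wraparound: candidates past an edge of the grid are dropped.
    """
    x, y, z = node
    candidates = [
        (x - 1, y, z), (x + 1, y, z),  # same board, left and right
        (x, y - 1, z), (x, y + 1, z),  # same board, front and back
        (x, y, z - 1), (x, y, z + 1),  # boards below and above
    ]
    return [
        (cx, cy, cz)
        for cx, cy, cz in candidates
        if 0 <= cx < BOARD_WIDTH
        and 0 <= cy < BOARD_HEIGHT
        and 0 <= cz < NUM_BOARDS
    ]


total_nodes = BOARD_WIDTH * BOARD_HEIGHT * NUM_BOARDS  # 1,024 nodes
```

Under these assumptions an interior node such as `(3, 3, 5)` has the six neighbors the article describes, while a corner node such as `(0, 0, 0)` has only three.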

The computer also has 16 hard disk drives of 400 megabytes each. The MDP boards are mounted at a 45-degree angle to achieve a compromise between easy accessibility and small footprint, giving the J-Machine an overall appearance of a tilted gray washing machine.

The purpose of the research project is to study ways of building a computer that can solve a problem of a given size faster by increasing the number of processors while holding memory size constant, rather than solving a larger problem in the same amount of time, which is how computer advances are traditionally measured. "Our goal is to change the way people think about parallel computers," Professor Dally said.

Central to the philosophy behind the J-Machine is the idea of fine-grained hardware partitioning, meaning that the amount of memory per processor is relatively small. Many ordinary desktop computers have 16 megabytes of memory, or RAM, used by a single processor, but the J-Machine has one megabyte per processor for a total of 1,024 megabytes (one gigabyte) of memory. This is comparable to having one worker responsible for finding and using one file folder of information rather than a whole drawer, Mr. Lethin explained. With a higher proportion of processors, more tasks or parts of a problem can be worked on simultaneously without any loss of speed. "You can read and write to that memory a lot quicker," he said.
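The arithmetic behind the grain-size comparison can be checked in a few lines. The figures come from the article; the variable names are illustrative only.

```python
NODES = 1024                   # processors in the J-Machine
MB_PER_NODE = 1                # memory attached to each processor
DESKTOP_MB_PER_PROCESSOR = 16  # the article's "ordinary desktop" figure

total_mb = NODES * MB_PER_NODE                         # 1024 MB
total_gb = total_mb / 1024                             # 1.0 GB
grain_ratio = DESKTOP_MB_PER_PROCESSOR / MB_PER_NODE   # 16.0

# Processors that would share the same gigabyte at the desktop ratio.
desktop_style_processors = total_mb // DESKTOP_MB_PER_PROCESSOR  # 64
```

In other words, the same gigabyte of memory is served by 1,024 processors in the J-Machine, where a design using the desktop ratio would have 64.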

Over the years, the processor's share of a computer's cost has been dropping. In 1967, in a computer that could execute one million instructions per second (Mips) with one megabyte of memory, half the cost was for the processor. That proportion had fallen to 11 percent of the cost by 1979 and is now less than one percent, Professor Dally noted. Conventional parallel computers boost performance by staying with the current ratio and increasing both the number of processors and the amount of memory, but the J-Machine designers increased the proportion of processors to boost Mips capability. (In fact, the "J" stands for jellybean because, like the candy, the computer's chips "are sort of cheap and plentiful," Mr. Lethin said.) The marginal cost of adding more processing power is very low. This gives the potential for much higher performance than same-cost conventional parallel or sequential computers. "You get a much better cost performance by going to a finer grain size," Professor Dally said.

This concept has been difficult to export to industry, he added; "it's such a big change from how people do business now that it's not been widely adapted." Not all companies are reluctant, however; Cray Research is developing a product based on the J-Machine research, which is being sponsored by the Advanced Research Projects Agency.

Also assisting with the project is Intel. The company manufactured the MDPs (which are based on its own 25-MHz 486 chip found in many home computers) from a design by the MIT group. Each chip has 1.1 million transistors and so many interconnections that the fine lines are a solid blur even on a wall-size drawing made by Intel's computer-aided design equipment.

Another innovative feature of the J-Machine is its internal communications. Unlike in other computers, network hardware is integrated directly into each MDP instead of being a separate component. The J-Machine also uses a transmission mechanism created by Professor Dally called wormhole routing, which speeds messages that must pass through several nodes. With wormhole routing, a node can start passing along a message to the next node before it has finished receiving it from its predecessor. As a result, the computer needs only three instructions to send a four-word message between processors, whereas Intel's fastest parallel computer needs 600 instructions to perform the same task, Professor Dally said.
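A rough way to see why forwarding a message before it has fully arrived helps is to compare two simplified latency models. This is a sketch under stated assumptions, not a description of the J-Machine's actual timing: it assumes one unit of the message crosses one link per cycle, ignores contention and setup costs, and uses the term "flit" (a unit of a message) and the function names below purely for illustration.

```python
def store_and_forward_cycles(hops: int, flits: int) -> int:
    """Each node receives the entire message before sending it on,
    so every hop costs the full message length."""
    return hops * flits


def wormhole_cycles(hops: int, flits: int) -> int:
    """The first flit advances one hop per cycle and the remaining
    flits follow right behind it, one per cycle, like a pipeline."""
    return hops + flits - 1


# Hypothetical example: a four-unit message crossing ten links.
saf = store_and_forward_cycles(hops=10, flits=4)  # 40 cycles
wh = wormhole_cycles(hops=10, flits=4)            # 13 cycles
```

In this model the two approaches cost the same over a single hop, and the gap widens as the number of intermediate nodes grows, which matches the article's point that wormhole routing speeds messages that must pass through several nodes.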

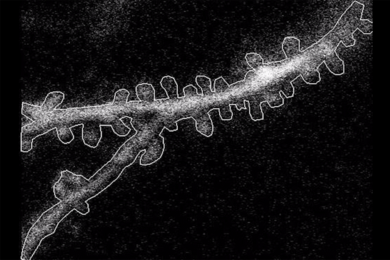

Communication between MDPs on separate boards is made possible by conductive rubber strips compressed between the boards. The material has embedded in it a series of fine silicon lines 2/1000 of an inch apart through which messages can travel. Although this material is not new, the MIT scientists changed its aspect ratio (the ratio of a segment's length to its height) for use in the J-Machine. It takes an average of 1.5 microseconds to send a message between nodes, compared to upwards of 1,000 microseconds between nodes in Ethernet-linked computers found in many offices, Mr. Lethin said.

With such fast and plentiful hardware routes and intersections, avoiding Manhattan-like traffic jams in software execution can be a problem. "If you're programming, it's a hard issue, trying to coordinate it and not having collisions and having each [node] knowing what the others are doing," he said. Other graduate students in Professor Dally's group are working on another chip that will be able to do adaptive routing, or perceiving electronic clogs and almost instantly detouring around them.

The J-Machine's software, like its hardware, is largely custom-made. Its programs are in Concurrent Smalltalk (a language invented by Professor Dally and implemented by Waldemar Horwat, who recently received his PhD at MIT), and in Message-Driven C, which was developed by scientists at Caltech.

Other institutions are using J-Machines for research in computer science and other fields. Researchers at Caltech with whom Professor Dally's group is collaborating are using a computer with 512 nodes and 24 hard disk drives for software development; they are also using it to analyze magnetic resonance imaging and fluid dynamics data. Argonne National Laboratory has a machine with the same configuration that is being used for work on genetic sequence matching and climate modeling, while Northwestern University uses one for studying molecular dynamics. The J-Machine is available to any member of the MIT community who is working on a problem that requires a great deal of computation.

A version of this article appeared in the July 20, 1994 issue of MIT Tech Talk (Volume 39, Number 1).