At the top of many automation wish lists is a particularly time-consuming task: chores.

The moonshot of many roboticists is cooking up the proper hardware and software combination so that a machine can learn “generalist” policies (the rules and strategies that guide robot behavior) that work everywhere, under all conditions. Realistically, though, if you have a home robot, you probably don’t care much about it working for your neighbors. With that in mind, MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) researchers set out to find a way to easily train robust robot policies for very specific environments.

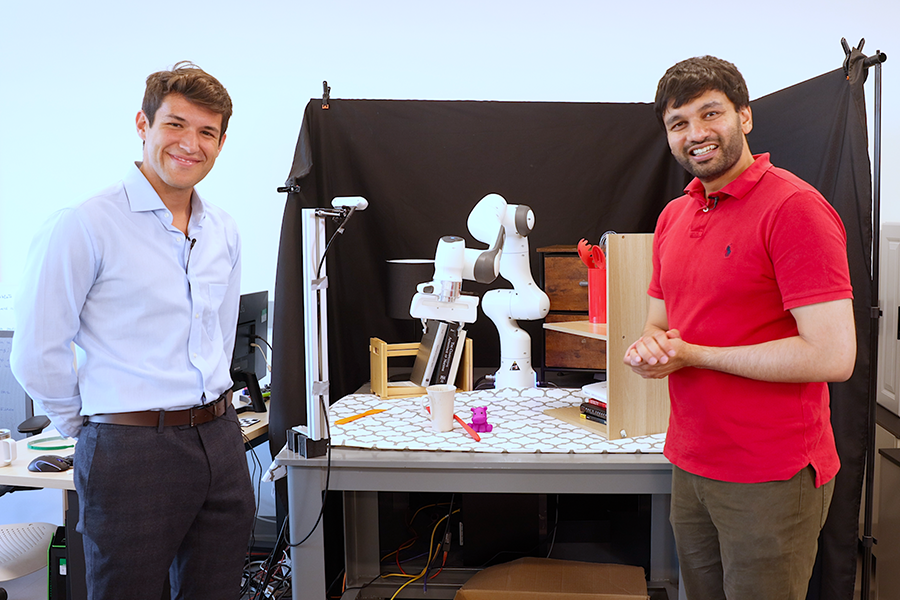

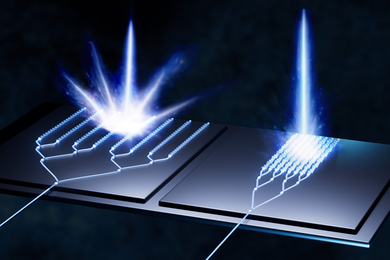

“We aim for robots to perform exceptionally well under disturbances, distractions, varying lighting conditions, and changes in object poses, all within a single environment,” says Marcel Torne Villasevil, MIT CSAIL research assistant in the Improbable AI Lab and lead author on a recent paper about the work. “We propose a method to create digital twins on the fly using the latest advances in computer vision. With just their phones, anyone can capture a digital replica of the real world, and the robots can train in a simulated environment much faster than the real world, thanks to GPU parallelization. Our approach eliminates the need for extensive reward engineering by leveraging a few real-world demonstrations to jump-start the training process.”

Taking your robot home

RialTo, of course, is a little more complicated than a simple wave of a phone and (boom!) a home bot at your service. The process begins with using your device to scan the target environment with tools like NeRFStudio, ARCode, or Polycam. Once the scene is reconstructed, users can upload it to RialTo’s interface to make detailed adjustments, add necessary joints to objects in the scene, and more.

The refined scene is then exported into the simulator. There, the goal is to develop a policy, such as one for grabbing a cup on a counter, from real-world actions and observations. These real-world demonstrations are replicated in simulation, providing valuable data for reinforcement learning. “This helps in creating a strong policy that works well in both the simulation and the real world. An enhanced algorithm using reinforcement learning helps guide this process, to ensure the policy is effective when applied outside of the simulator,” says Torne.
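To make the demonstrations-then-simulation idea concrete, here is a deliberately tiny Python sketch. It is not RialTo’s implementation: the 1-D reaching task, the hypothetical `DEMOS` list, and every function name are illustrative assumptions. A policy is first fit to a few demonstrations by least squares (imitation), then refined in a simulated environment with randomized poses and random disturbances. Simple random-search hill climbing stands in for the reinforcement-learning algorithm, and, unlike RialTo, the sketch uses the demonstrations only to initialize the policy.

```python
import random

# Hypothetical demonstrations: (error to target, action the human took).
DEMOS = [(0.5, 0.15), (-0.4, -0.12), (0.2, 0.06)]


def fit_gain_from_demos(demos):
    """Imitation step: least-squares gain k so that action ≈ k * error."""
    num = sum(err * act for err, act in demos)
    den = sum(err * err for err, _ in demos)
    return num / den


def rollout(k, rng, steps=20, disturbance=0.05):
    """One simulated 1-D reach episode; returns the final distance to target."""
    pos = rng.uniform(-1.0, 1.0)      # randomized start pose
    target = rng.uniform(-1.0, 1.0)   # randomized object pose
    for _ in range(steps):
        action = max(-0.2, min(0.2, k * (target - pos)))  # actuator limit
        pos += action + rng.gauss(0.0, disturbance)        # random push
    return abs(target - pos)


def success_rate(k, seed=1234, episodes=200, tol=0.05):
    """Fraction of episodes ending within tol. A fixed seed gives every
    candidate policy the same randomized scenes (common random numbers)."""
    rng = random.Random(seed)
    return sum(rollout(k, rng) < tol for _ in range(episodes)) / episodes


def finetune_in_sim(k, iters=30, step=0.1, seed=0):
    """Random-search hill climbing, standing in for the RL stage."""
    rng = random.Random(seed)
    best = success_rate(k)
    for _ in range(iters):
        candidate = k + rng.gauss(0.0, step)
        score = success_rate(candidate)
        if score >= best:  # keep changes that do at least as well
            k, best = candidate, score
    return k, best


if __name__ == "__main__":
    k0 = fit_gain_from_demos(DEMOS)
    print("gain from demos:", round(k0, 3), "success:", success_rate(k0))
    k1, rate = finetune_in_sim(k0)
    print("gain after sim fine-tuning:", round(k1, 3), "success:", rate)
```

Because every candidate is scored on the same seeded batch of simulated episodes, the search only accepts changes that do at least as well on those scenes. A real system would instead run many such episodes in parallel on a GPU-accelerated simulator, which is the speedup Torne describes.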

Testing showed that RialTo created strong policies for a variety of tasks, whether in controlled lab settings or more unpredictable real-world environments, improving 67 percent over imitation learning with the same number of demonstrations. The tasks involved opening a toaster, placing a book on a shelf, putting a plate on a rack, placing a mug on a shelf, opening a drawer, and opening a cabinet. For each task, the researchers tested the system’s performance under three increasing levels of difficulty: randomizing object poses, adding visual distractors, and applying physical disturbances during task execution. When paired with real-world data, the system outperformed traditional imitation-learning methods, especially in situations with many visual distractions or physical disruptions.
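The evaluation protocol can also be sketched as a small harness. This is a hypothetical illustration, not the researchers’ code: it assumes the three difficulty levels are cumulative (each adds a perturbation on top of the previous one), models visual distractors as noise on the perceived target and physical disturbances as random pushes, and uses a toy 1-D reach with a fixed proportional controller in place of a learned policy. A real harness would repeat this for each of the six tasks.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Condition:
    name: str
    randomize_pose: bool
    visual_distractors: bool
    physical_disturbance: bool


# Assumption: each level keeps every perturbation from the level before it.
LEVELS = [
    Condition("randomized poses", True, False, False),
    Condition("+ visual distractors", True, True, False),
    Condition("+ physical disturbances", True, True, True),
]


def run_episode(cond, rng, gain=0.8, steps=20, tol=0.05):
    """Toy 1-D reach standing in for one real task rollout."""
    target = rng.uniform(-1.0, 1.0) if cond.randomize_pose else 0.5
    pos = 0.0
    for _ in range(steps):
        # Hypothetical model: distractors corrupt the perceived target.
        seen = target + (rng.gauss(0.0, 0.03) if cond.visual_distractors else 0.0)
        # Hypothetical model: disturbances push the robot off course.
        push = rng.gauss(0.0, 0.05) if cond.physical_disturbance else 0.0
        pos += gain * (seen - pos) + push
    return abs(target - pos) < tol


def evaluate(episodes=200, seed=0):
    """Success rate per difficulty level, from easiest to hardest."""
    rng = random.Random(seed)
    return {
        cond.name: sum(run_episode(cond, rng) for _ in range(episodes)) / episodes
        for cond in LEVELS
    }


if __name__ == "__main__":
    for level, rate in evaluate().items():
        print(f"{level:<25} {rate:.2f}")
```

With no noise, the controller shrinks the error by a factor of five each step, so the first level always succeeds and scores 1.0; the other two levels show how the same fixed policy degrades as perturbations accumulate.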

“These experiments show that if we care about being very robust to one particular environment, the best idea is to leverage digital twins instead of trying to obtain robustness with large-scale data collection in diverse environments,” says Pulkit Agrawal, director of Improbable AI Lab, MIT electrical engineering and computer science (EECS) associate professor, MIT CSAIL principal investigator, and senior author on the work.

As for limitations, training a policy with RialTo currently takes up to three days. To speed this up, the team points to improving the underlying algorithms and using foundation models. Training in simulation also has its limitations: it is currently difficult to achieve effortless sim-to-real transfer or to simulate deformable objects and liquids.

The next level

So what’s next for RialTo’s journey? Building on previous efforts, the scientists are working on preserving robustness against various disturbances while improving the model’s adaptability to new environments. “Our next endeavor is this approach to using pre-trained models, accelerating the learning process, minimizing human input, and achieving broader generalization capabilities,” says Torne.

“We’re incredibly enthusiastic about our 'on-the-fly' robot programming concept, where robots can autonomously scan their environment and learn how to solve specific tasks in simulation. While our current method has limitations — such as requiring a few initial demonstrations by a human and significant compute time for training these policies (up to three days) — we see it as a significant step towards achieving 'on-the-fly' robot learning and deployment,” says Torne. “This approach moves us closer to a future where robots won’t need a preexisting policy that covers every scenario. Instead, they can rapidly learn new tasks without extensive real-world interaction. In my view, this advancement could expedite the practical application of robotics far sooner than relying solely on a universal, all-encompassing policy.”

“To deploy robots in the real world, researchers have traditionally relied on methods such as imitation learning from expert data, which can be expensive, or reinforcement learning, which can be unsafe,” says Zoey Chen, a computer science PhD student at the University of Washington who wasn’t involved in the paper. “RialTo directly addresses both the safety constraints of real-world RL [reinforcement learning], and efficient data constraints for data-driven learning methods, with its novel real-to-sim-to-real pipeline. This novel pipeline not only ensures safe and robust training in simulation before real-world deployment, but also significantly improves the efficiency of data collection. RialTo has the potential to significantly scale up robot learning and allows robots to adapt to complex real-world scenarios much more effectively.”

“Simulation has shown impressive capabilities on real robots by providing inexpensive, possibly infinite data for policy learning,” adds Marius Memmel, a computer science PhD student at the University of Washington who wasn’t involved in the work. “However, these methods are limited to a few specific scenarios, and constructing the corresponding simulations is expensive and laborious. RialTo provides an easy-to-use tool to reconstruct real-world environments in minutes instead of hours. Furthermore, it makes extensive use of collected demonstrations during policy learning, minimizing the burden on the operator and reducing the sim2real gap. RialTo demonstrates robustness to object poses and disturbances, showing incredible real-world performance without requiring extensive simulator construction and data collection.”

Torne wrote this paper alongside senior authors Abhishek Gupta, assistant professor at the University of Washington, and Agrawal. Four other CSAIL members are also credited: EECS PhD student Anthony Simeonov SM ’22, research assistant Zechu Li, undergraduate student April Chan, and Tao Chen PhD ’24. Improbable AI Lab and WEIRD Lab members also contributed valuable feedback and support in developing this project.

This work was supported, in part, by the Sony Research Award, the U.S. government, and Hyundai Motor Co., with assistance from the WEIRD (Washington Embodied Intelligence and Robotics Development) Lab. The researchers presented their work at the Robotics: Science and Systems (RSS) conference earlier this month.