Imagine you’re walking in your dimly lit hallway. You’ve donned a pair of glasses that augment your reality. But the new object in your environment — a sleeping dragon the size of a cat — looks disappointingly flat and cartoonish.

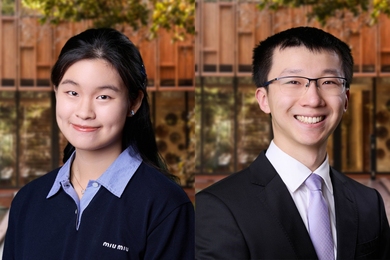

“You can tell it’s really fake, because the lighting [on it] doesn’t match your environment,” explains Elisa Young. A senior in MIT's Department of Electrical Engineering and Computer Science (EECS), Young is researching how to make simulated objects reflect the light in a user’s environment so that they look more like real-world objects.

“I have such love for visual things in combination with computer science,” says Young, who is eager to blur the boundary between virtual and physical reality. She smiles at the thought. “It’s really cool to be like, ‘Oh, I’m living in a movie.’”

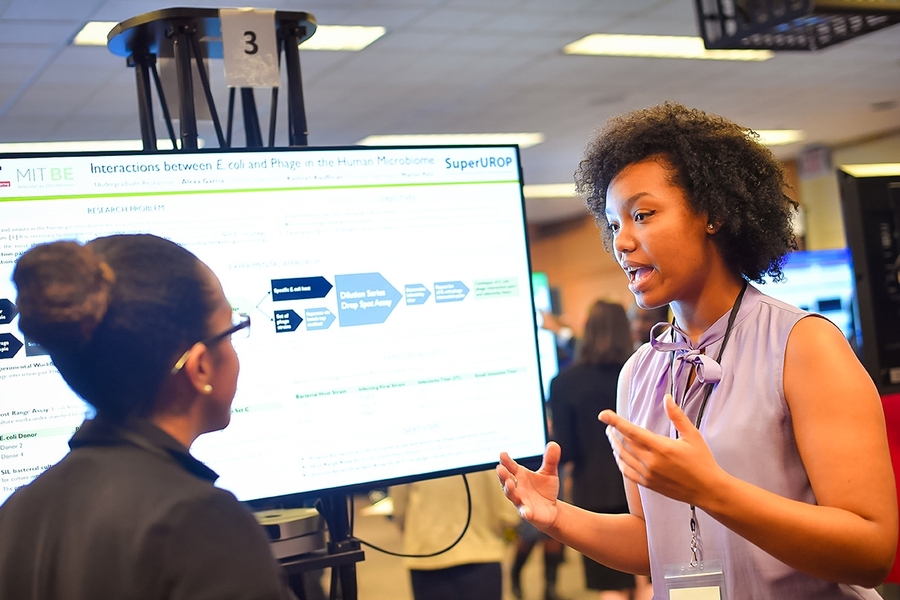

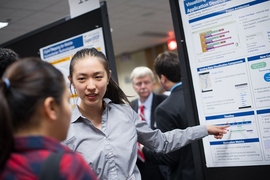

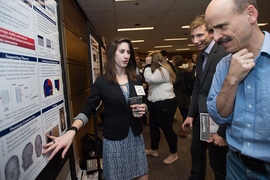

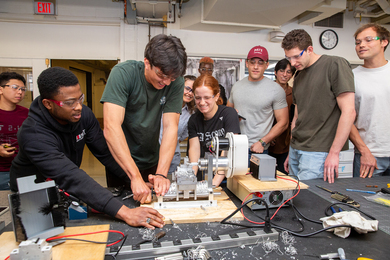

Young was among 151 students who presented their work on Thursday, Dec. 8, at the SuperUROP Research Review. Students in the School of Engineering's SuperUROP program, an advanced form of the Undergraduate Research Opportunities Program (UROP), undertake yearlong research projects; at the review, they shared their first term’s progress with faculty and graduate student mentors.

The relevance of the students’ work — research to enable faster commutes, use robots to help people, optimize buildings, and bolster defenses against massive cyber attacks — made an impression. “It’s amazing to see them tackling these tough problems,” said Anantha Chandrakasan, head of EECS. Chandrakasan created the program in 2012 to provide students with an immersive, graduate-level research experience.

Cooper Sloan wants to make catching the bus easier using machine learning, a set of techniques that allow computers to pick out subtle patterns in data. Using the last five years of GPS data from Boston buses, Sloan is unraveling the dynamics of the bus system, which has some quirks. “Slow buses tend to get slower and fast buses tend to get faster, which results in clumping,” says the EECS senior. Training a neural network, a type of machine-learning model, on this data should enable software to better predict bus arrival times: something commuters are waiting for.
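The clumping Sloan describes can be illustrated with a toy model. This is not his neural-network approach, only a sketch of why the feedback loop happens. It assumes a loop route served by two buses, where each bus's dwell time at a stop is proportional to the time since the previous bus served that stop (a longer gap means more waiting riders to board). The function name and the `dwell_per_minute` parameter are hypothetical.

```python
def simulate_headway(initial_gap: float, cycle: float,
                     dwell_per_minute: float, stops: int) -> list[float]:
    """Toy two-bus loop: return the gap (minutes) from the leading bus to the
    following bus after each stop. Assumption: dwell time at a stop is
    proportional to the time since the previous bus served it."""
    gaps = [initial_gap]
    gap = initial_gap
    for _ in range(stops):
        # The leader last saw the follower pass `cycle - gap` minutes ago,
        # so it boards that many minutes' worth of waiting riders.
        leader_dwell = dwell_per_minute * (cycle - gap)
        # The follower only boards riders who arrived in the last `gap` minutes.
        follower_dwell = dwell_per_minute * gap
        # A late follower dwells longer and falls further behind; an early one catches up.
        gap += follower_dwell - leader_dwell
        # Clamp to [0, cycle]; either end means the two buses are running together.
        gap = min(max(gap, 0.0), cycle)
        gaps.append(gap)
    return gaps

balanced = simulate_headway(30.0, 60.0, 0.05, 10)
nudged = simulate_headway(28.0, 60.0, 0.05, 40)
print(balanced[-1])  # 30.0: even spacing is an equilibrium
print(nudged[-1])    # 0.0: a 2-minute imbalance grows until the buses bunch
```

In this model the deviation from even spacing is multiplied by (1 + 2 × `dwell_per_minute`) at every stop, so any small disturbance grows rather than dies out, matching the “slow get slower, fast get faster” pattern.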

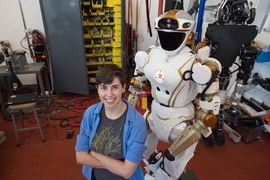

Machines could also better assist people right in their homes, including senior citizens who have trouble walking. To that end, Alex LaGrassa is training robots to understand human speech. Right now, robots can easily act on pre-programmed information, she explains, such as "Grab the Blue Ribbon muffin mix." Yet in the flurry of a real kitchen, a person might say "grab the blue box," using a description rather than a proper name. An EECS junior, LaGrassa is programming a robot to understand natural speech and actively search for objects that meet the speaker’s criteria. “It has that extra step of figuring out ‘What is this person talking about?’” she says. Having robots bridge that linguistic divide between human and machine would be helpful, says LaGrassa, “because, you know, requiring people to know how to program is problematic.”

Brenda Stern, a senior in civil and environmental engineering, is looking to design buildings with a smaller carbon footprint. Most people think of the energy consumed during construction or once a building is up and running. But Stern focuses on the energy that went into creating the building materials, like the concrete or steel in columns, slabs, and framework. All of these materials carry embodied carbon: the carbon emissions associated with their production. “I’m finding ways to minimize these materials to create more sustainable structures,” she says.

Valerie Sarge is working on hardware, trying to do more with less data. An EECS junior, Sarge is researching how to transform low-resolution images into high-resolution facsimiles. Using field-programmable gate arrays (FPGAs), she can implement a neural network directly in hardware to extrapolate the missing information, mimicking the way a human would fill in the gaps: “We quickly hallucinate the information that your brain expects to see.” That means you could download a low-resolution video onto your phone and watch it in high definition.

The goal is to push the current limits of computing power by moving some of the computational demand from software into hardware. If scientists can achieve that, Sarge says, “every type of research, every type of analysis, every type of study that requires computing power will become easier.”

Another SuperUROP student is preparing hardware for space. Madeleine Waller, an EECS senior, is running tests to make the Transiting Exoplanet Survey Satellite (TESS), scheduled for launch in December 2017, more resilient.

The satellite will contend with meddlesome cosmic rays, which can flip bits in its computer chips and create errors in the data. Waller is testing the satellite’s FPGA by intentionally injecting errors into a model data stream. “We’re trying to trigger all the watchdog protocols in the system,” she says. Ensuring that those protocols work would give researchers a better chance of confidently detecting exoplanets.
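Waller's actual test setup isn't described here, so the following is only a software analogy of fault injection. It simulates a cosmic-ray bit flip by inverting one random bit in a data frame, then checks whether a CRC-32 checksum catches the corruption. The checksum is an assumed stand-in for the satellite's real watchdog checks, and the function names and frame contents are hypothetical.

```python
import random
import zlib

def flip_random_bit(data: bytes, rng: random.Random) -> tuple[bytes, int]:
    """Return a copy of `data` with one randomly chosen bit inverted,
    mimicking a cosmic-ray upset, plus the index of the flipped bit."""
    bit_index = rng.randrange(len(data) * 8)
    corrupted = bytearray(data)
    corrupted[bit_index // 8] ^= 1 << (bit_index % 8)
    return bytes(corrupted), bit_index

def run_injection_campaign(frame: bytes, trials: int, seed: int = 0) -> int:
    """Inject one bit flip per trial and count how many the CRC-32 check detects.
    CRC-32 is a hypothetical stand-in for the real on-board error checks."""
    rng = random.Random(seed)  # seeded so a campaign can be replayed exactly
    reference_crc = zlib.crc32(frame)
    detected = 0
    for _ in range(trials):
        corrupted, _ = flip_random_bit(frame, rng)
        if zlib.crc32(corrupted) != reference_crc:
            detected += 1  # the check "fired" for this injected fault
    return detected

frame = b"model science data frame"
print(run_injection_campaign(frame, 1000))  # 1000
```

CRC-32 is guaranteed to detect any single-bit error, so every trial here is caught. A more thorough campaign could also inject multi-bit errors, which a checksum is not guaranteed to detect.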

But while some threats to systems are random, others are maliciously calculated. In October, Hyunjoon Song, a senior in EECS, saw news that millions of infected bots had attacked Akamai, a network server company. The attack used an army of “internet of things” devices, such as digital cameras and video recorders. Collectively dubbed the Mirai botnet, the bots battered Akamai with 620 gigabits per second of traffic in an attempt to overload the company's servers, in what's known as a distributed denial of service (DDoS) attack. “This was one of the most powerful DDoS attacks ever,” he says. “And the problem is that there are billions more of these devices that are just as vulnerable. The Mirai botnet is just the beginning.”

Song is studying the Mirai source code and interacting with the botnet to understand its architecture and sift for vulnerabilities, in the hope of preventing more serious attacks.

“The students show incredible enthusiasm for their work,” Chandrakasan says. “I’m eager to see how their projects develop over the spring semester.”