Conservationists physically tag animals in the wild to better follow them over time. But tagging can be intrusive for many species, and difficult to accomplish in larger populations. As an alternative, scientists have photographed animals in their natural environments and catalogued the images, along with information such as individuals’ dimensions and geographic locations.

However, as images accumulate, picking out individuals from among thousands of pictures can be a monumental task. Sai Ravela, a principal research scientist in MIT’s Department of Earth, Atmospheric and Planetary Sciences, estimates that manually sifting through a catalog of 10,000 images can take one person 15 years.

“It’s an enormous amount of time,” Ravela says. “You’re reaching the edges of what people want to do with their lives.”

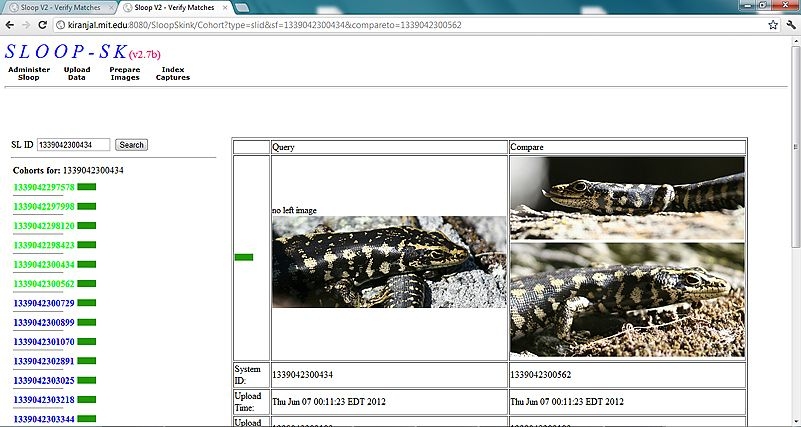

Now Ravela and his colleagues at MIT have developed computer software that automates much of the image-matching process. The system, which they’ve named SLOOP, sifts through thousands of images, using pattern-recognition algorithms to analyze features in each image, such as an animal’s arrangement of stripes or spots. The system then identifies, on average, the 20 most likely matches for an individual.

From there, the researchers turned to crowdsourcing, asking online users to pick the most similar pair. Based on such feedback, the system reorders the list, paring it down to fewer images likely to depict the same individual.
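As a rough illustration of that reordering step, the sketch below tallies which candidate image online users picked most often and moves those to the front of a shortened list. The function name `rerank_with_votes`, the `keep` parameter and the vote-counting rule are assumptions made for this example; the article does not describe how SLOOP actually weighs user feedback.

```python
from collections import Counter

def rerank_with_votes(candidates, votes, keep=5):
    """Reorder an algorithmically ranked list using crowd votes (hypothetical rule).

    candidates: image IDs in the order the matching algorithms ranked them.
    votes: image IDs that users picked as the most similar to the query.
    keep: how many images to retain after reordering.
    """
    tally = Counter(votes)
    original_rank = {image_id: i for i, image_id in enumerate(candidates)}
    # Most votes first; ties fall back to the algorithm's original order.
    reordered = sorted(
        candidates,
        key=lambda image_id: (-tally[image_id], original_rank[image_id]),
    )
    return reordered[:keep]

# Made-up example: four candidates, three user votes.
shortlist = rerank_with_votes(["a", "b", "c", "d"], ["c", "c", "b"], keep=2)
print(shortlist)
```

Here the algorithm's ranking is kept only as a tie-breaker, so an image with no votes can still stay on the list if `keep` is large enough.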

“It’s sort of like Google, in that you type in a search term and you get back potential matches, but ultimately you’re the judge of what’s the best match,” Ravela says. “It makes biologists’ lives a lot easier than having to go through an entire catalog.”

Ravela, along with undergraduates James Duyck and Chelsea Finn, is applying the system to various endangered and threatened species, including geckos, whale sharks and skinks. The group will present details of the system at the Mexican Conference on Pattern Recognition in June.

Beyond facial recognition

In recent years, pattern-recognition algorithms have mostly been developed for facial recognition, matching individuals by features such as eyes, noses and mouths. But Ravela says these algorithms are not sophisticated enough to parse out the enormous complexity in animal patterning.

“To distinguish an individual, like a salamander Bob from a salamander Jill, is tough,” Ravela says. “On the whole, the variability we see within species really tests our assumptions of what makes a good pattern-recognition algorithm.”

His group has developed multiple algorithms to identify matching patterns, including several that adjust for changes in an animal’s lighting, orientation and geometry, and other algorithms that overlay images, comparing the positioning of spots or stripes. The researchers use combinations of algorithms to match images, depending on a species’s particular features.

A user can upload an image to the system, along with any accompanying information such as an individual’s weight, size and location. Depending on the type of animal being catalogued, the user performs a few simple additional steps, such as marking reference points like a lizard’s eyes, nose and shoulder. The system then takes over, using algorithms to adjust the image and rank its similarity to the rest of the catalog’s images.
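The ranking step can be pictured with a minimal sketch: score a new image against every catalog entry and return the closest few. Everything here is an assumption for illustration. Each image is reduced to a short, made-up feature vector, and cosine similarity stands in for SLOOP's own matching algorithms, which the article does not specify. The default of 20 results echoes the average shortlist size mentioned earlier.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat an empty pattern as matching nothing
    return dot / (norm_a * norm_b)

def rank_catalog(query, catalog, top_k=20):
    """Return up to top_k catalog image IDs, most similar to the query first."""
    scored = [
        (cosine_similarity(query, features), image_id)
        for image_id, features in catalog.items()
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [image_id for _, image_id in scored[:top_k]]

# Hypothetical catalog: image IDs mapped to made-up feature vectors.
catalog = {
    "skink_001": [1.0, 0.0, 0.5],
    "skink_002": [0.9, 0.1, 0.6],
    "skink_003": [0.0, 1.0, 0.0],
}
print(rank_catalog([1.0, 0.0, 0.5], catalog, top_k=2))
```

A biologist would then review only the returned shortlist rather than the whole catalog.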

Animal tracking

The image-matching system is currently used by New Zealand’s Department of Conservation to track threatened populations of skinks — small lizards that, thanks to a recovery program, have multiplied in recent years. The species’s rebound, while a good sign, poses a more difficult tracking problem for conservationists as the population grows — a problem that SLOOP is helping to solve.

“We had reached the point where two-thirds of our monitoring effort was spent in front of the computer screen, and only one-third in the field directly monitoring the lizards,” says Andy Hutcheon, program manager for the Grand and Otago Skink Recovery Plan in New Zealand. “That’s a lot of eyestrain.”

Using the system, Hutcheon and his colleagues have quickly sorted out individuals from among more than 26,000 existing images. So far, they have found 15 cases in which human error incorrectly identified an individual as two distinct lizards.

Ultimately, SLOOP may help scientists answer broader questions about animal behavior, such as species’ breeding habits and migration patterns. For example, Hutcheon says the image-matching system has picked out at least six individuals among the entire population that have migrated between study sites, in some cases traveling up to several miles.

“Many New Zealand natives are characterized by lack of detailed data,” Hutcheon says. “New application of technology that can help us to understand their numbers and life history can only help with their conservation.”

Crowd conservation

Going a step further, Ravela’s team looked at the potential for crowdsourcing to speed up the image-matching process.

As an experiment, the researchers posted thousands of images of salamanders, in groups of four, on Amazon’s Mechanical Turk, an online crowdsourcing marketplace. The researchers asked users to rank the images in order of similarity.

To “rate” a user’s ability, the system was programmed to know the answer to three of every four images. If a user correctly ranked these known images, the system accepted the user’s fourth answer. As incentive to participate, the researchers offered users a modest monetary reward for correct answers.
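That screening rule can be sketched in a few lines: a user's answer for the one image the system cannot check is kept only if the three checkable answers are all correct. The function name `accept_unknown_answer` and the dictionary layout are hypothetical, chosen for this example; the article does not describe the researchers' actual implementation.

```python
def accept_unknown_answer(responses, known):
    """Screen one user's task with known-answer items (hypothetical sketch).

    responses: image ID -> the user's answer, for all images in the group.
    known: image ID -> the correct answer, for the images the system can check.
    Returns (image_id, answer) for the unchecked image if every known answer
    matches, otherwise None.
    """
    unknown_ids = [image_id for image_id in responses if image_id not in known]
    if len(unknown_ids) != 1:
        raise ValueError("expected exactly one image without a known answer")
    for image_id, expected in known.items():
        if responses.get(image_id) != expected:
            return None  # failed a check, so the unknown answer is discarded
    return unknown_ids[0], responses[unknown_ids[0]]

# Made-up example: three known rankings and one the system cannot verify.
known = {"img_a": 1, "img_b": 2, "img_c": 3}
good_user = {"img_a": 1, "img_b": 2, "img_c": 3, "img_d": 4}
poor_user = {"img_a": 2, "img_b": 1, "img_c": 3, "img_d": 4}
print(accept_unknown_answer(good_user, known))
print(accept_unknown_answer(poor_user, known))
```

Requiring all three known answers to match is the strictest reading of the rule; a looser scheme could instead track each user's running accuracy.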

“We found about a third of the people who came were really good pattern-matchers,” Ravela says. “One guy had a 99.96 percent performance, and stayed for 3,000 comparisons.”

By combining computer-vision algorithms with crowdsourcing, the team is able to quickly identify image matches among thousands of photos with 97 percent accuracy.

The researchers are now working to further automate the system. For example, Ravela’s students are developing algorithms that will automatically separate and outline an animal from an image background. The problem is a difficult one, as the system would have to distinguish between, for instance, a lizard’s leg and a nearby twig. To solve these problems, Finn is developing matching algorithms using feature geometry, while Duyck is combining multiple pattern-matching algorithms to improve image-ranking.

“These are incredibly hard problems,” Ravela says. “On the other hand, doing these things manually is extraordinarily time-consuming. Is there a sweet spot between the two that allows us to solve real problems? That’s what SLOOP is trying to do.”

This work is supported by the National Science Foundation.