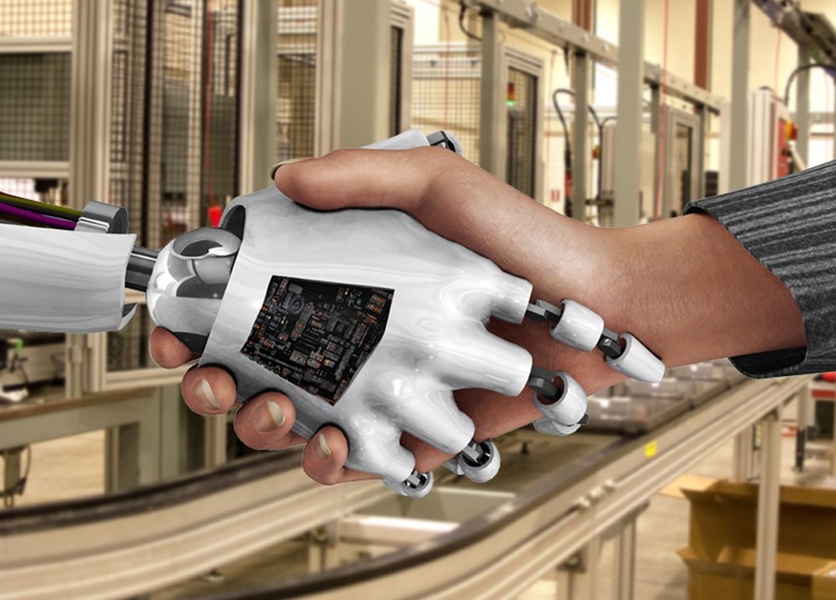

Spending a day in someone else’s shoes can help us to learn what makes them tick. Now the same approach is being used to develop a better understanding between humans and robots, to enable them to work together as a team.

Robots are increasingly being used in the manufacturing industry to perform tasks that bring them into closer contact with humans. But while a great deal of work is being done to ensure robots and humans can operate safely side by side, more effort is needed to make robots smart enough to work effectively with people, says Julie Shah, an assistant professor of aeronautics and astronautics at MIT and head of the Interactive Robotics Group in the Computer Science and Artificial Intelligence Laboratory (CSAIL).

“People aren’t robots, they don’t do things the same way every single time,” Shah says. “And so there is a mismatch between the way we program robots to perform tasks in exactly the same way each time and what we need them to do if they are going to work in concert with people.”

Most existing research into making robots better team players is based on the concept of interactive reward, in which a human trainer gives a positive or negative response each time a robot performs a task.

However, human studies carried out by the military have shown that simply telling people they have done well or badly at a task is a very inefficient method of encouraging them to work well as a team.

So Shah and PhD student Stefanos Nikolaidis began to investigate whether techniques that have been shown to work well in training people could also be applied to mixed teams of humans and robots. One such technique, known as cross-training, sees team members swap roles with each other on given days. “This allows people to form a better idea of how their role affects their partner and how their partner’s role affects them,” Shah says.

In a paper to be presented at the International Conference on Human-Robot Interaction in Tokyo in March, Shah and Nikolaidis will report the results of experiments they carried out with a mixed group of humans and robots, demonstrating that cross-training is an extremely effective team-building tool.

To let robots take part in the cross-training experiments, the pair first had to design a new algorithm that enables the devices to learn from their role-swapping experiences. They modified existing reinforcement-learning algorithms so that the robots could take in not only information from positive and negative rewards, but also information gained through demonstration. In this way, by watching their human counterparts switch roles to carry out their work, the robots were able to learn how the humans wanted them to perform the same task.
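To make the idea of learning from both rewards and demonstrations concrete, here is a minimal Python sketch. It is not the algorithm Shah and Nikolaidis developed, whose details are not given here. It simply assumes a table of action values nudged by "good robot" or "bad robot" feedback, plus a count of which actions the human demonstrated in each situation. The class name, the state and action labels, and the `learning_rate` and `demo_bonus` parameters are all hypothetical.

```python
from collections import defaultdict


class RewardAndDemoLearner:
    """Toy learner that combines reward feedback with demonstrations.

    Illustrative only: the names and the update rule are assumptions,
    not the method described in the researchers' paper.
    """

    def __init__(self, actions, learning_rate=0.5, demo_bonus=1.0):
        self.actions = list(actions)
        self.learning_rate = learning_rate
        self.demo_bonus = demo_bonus
        # (state, action) -> running estimate of the trainer's feedback
        self.value = defaultdict(float)
        # (state, action) -> number of times the human demonstrated it
        self.demo_count = defaultdict(int)

    def observe_reward(self, state, action, reward):
        """Move the value estimate part of the way toward the new reward."""
        key = (state, action)
        self.value[key] += self.learning_rate * (reward - self.value[key])

    def observe_demonstration(self, state, action):
        """Record that the human chose this action while playing the robot's role."""
        self.demo_count[(state, action)] += 1

    def score(self, state, action):
        """Reward estimate plus a bonus proportional to how often it was demonstrated."""
        total = sum(self.demo_count[(state, a)] for a in self.actions)
        fraction = self.demo_count[(state, action)] / total if total else 0.0
        return self.value[(state, action)] + self.demo_bonus * fraction

    def choose(self, state):
        """Pick the highest-scoring action for this state."""
        return max(self.actions, key=lambda a: self.score(state, a))


# Hypothetical session: one negative reward, then two demonstrations.
learner = RewardAndDemoLearner(["hold_part", "fetch_tool"])
learner.observe_reward("screw_placed", "fetch_tool", -1.0)
learner.observe_demonstration("screw_placed", "hold_part")
learner.observe_demonstration("screw_placed", "hold_part")
choice = learner.choose("screw_placed")
print(choice)
```

In this toy session the demonstrations point the learner directly at the preferred action, whereas the reward alone only tells it which action to avoid.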

Each human-robot team then carried out a simulated task in a virtual environment, with half of the teams using the conventional interactive reward approach, and half using the cross-training technique of switching roles halfway through the session. Once the teams had completed this virtual training session, they were asked to carry out the task in the real world, but this time sticking to their own designated roles.

Shah and Nikolaidis found that the period in which human and robot were working at the same time — known as concurrent motion — increased by 71 percent in teams that had taken part in cross-training, compared to the interactive reward teams. They also found that the amount of time the humans spent doing nothing — while waiting for the robot to complete a stage of the task, for example — decreased by 41 percent.
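As a rough illustration of how these two measures could be computed from a timed recording of a task, the Python sketch below takes the intervals during which each teammate was moving. The interval values are made up for the example, and the function names and the assumption that each teammate's own intervals never overlap are ours, not the researchers'.

```python
def overlap_seconds(intervals_a, intervals_b):
    """Total time during which both teammates are moving.

    Each argument is a list of (start, end) pairs in seconds. Intervals
    within one list are assumed not to overlap each other.
    """
    total = 0.0
    for a_start, a_end in intervals_a:
        for b_start, b_end in intervals_b:
            total += max(0.0, min(a_end, b_end) - max(a_start, b_start))
    return total


def idle_seconds(intervals, task_start, task_end):
    """Time within the task window during which this teammate is not moving."""
    busy = sum(end - start for start, end in intervals)
    return (task_end - task_start) - busy


# Hypothetical timings for a 12-second task.
human_moving = [(0.0, 4.0), (6.0, 10.0)]
robot_moving = [(3.0, 7.0), (9.0, 12.0)]

concurrent = overlap_seconds(human_moving, robot_moving)
human_idle = idle_seconds(human_moving, 0.0, 12.0)
print(concurrent, human_idle)
```

The reported percentages would then correspond to comparing such totals between the cross-training teams and the interactive reward teams.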

What’s more, when the pair studied the robots themselves, they found that the learning algorithms recorded a much lower level of uncertainty about what their human teammate was likely to do next — a measure known as the entropy level — if they had been through cross-training.
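Entropy in this sense can be read as Shannon entropy over the robot's predictions of the human's next action. The Python sketch below is a generic illustration under that assumption. The two probability lists are invented for the example and are not data from the study.

```python
import math


def entropy_bits(probabilities):
    """Shannon entropy, in bits, of a discrete probability distribution."""
    if not math.isclose(sum(probabilities), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Terms with zero probability contribute nothing to the sum.
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


# Hypothetical predictions over four possible next actions by the human.
uncertain = [0.25, 0.25, 0.25, 0.25]  # robot has no idea what comes next
confident = [0.85, 0.05, 0.05, 0.05]  # robot strongly expects one action

print(entropy_bits(uncertain))
print(entropy_bits(confident))
```

A robot that spreads its prediction evenly over four actions has the maximum entropy of 2 bits, and the more sharply it expects one particular action, the lower the value falls.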

Finally, when responding to a questionnaire after the experiment, human participants in cross-training were far more likely to say the robot had carried out the task according to their preferences than those in the reward-only group, and reported greater levels of trust in their robotic teammate. “This is the first evidence that human-robot teamwork is improved when a human and robot train together by switching roles, in a manner similar to effective human team training practices,” Nikolaidis says.

Shah believes this improvement in team performance could be due to the greater involvement of both parties in the cross-training process. “When the person trains the robot through reward it is one-way: The person says ‘good robot’ or the person says ‘bad robot,’ and it’s a very one-way passage of information,” Shah says. “But when you switch roles the person is better able to adapt to the robot’s capabilities and learn what it is likely to do, and so we think that it is adaptation on the person’s side that results in a better team performance.”

The work shows that strategies that are successful in improving interaction among humans can often do the same for humans and robots, says Kerstin Dautenhahn, a professor of artificial intelligence at the University of Hertfordshire in the U.K. “People easily attribute human characteristics to a robot and treat it socially, so it is not entirely surprising that this transfer from the human-human domain to the human-robot domain not only made the teamwork more efficient, but also enhanced the experience for the participants, in terms of trusting the robot,” Dautenhahn says.
