To make moral judgments about other people, we often need to infer their intentions — an ability known as “theory of mind.” For example, if one hunter shoots another while on a hunting trip, we need to know what the shooter was thinking: Was he secretly jealous, or did he mistake his fellow hunter for an animal?

MIT neuroscientists have now shown they can influence those judgments by interfering with activity in a specific brain region — a finding that helps reveal how the brain constructs morality.

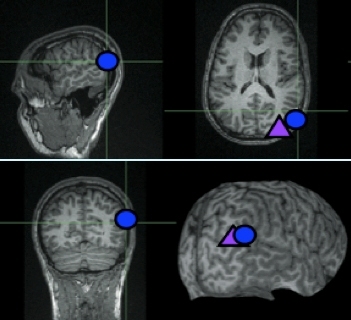

Previous studies have shown that a brain region known as the right temporo-parietal junction (TPJ) is highly active when we think about other people’s intentions, thoughts and beliefs. In the new study, the researchers disrupted activity in the right TPJ by inducing a current in the brain using a magnetic field applied to the scalp. They found that the subjects’ ability to make moral judgments that require an understanding of other people’s intentions — for example, a failed murder attempt — was impaired.

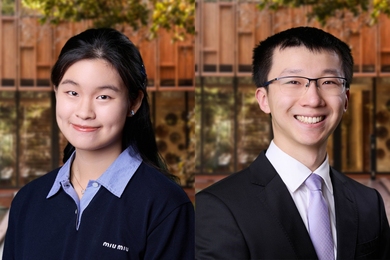

The researchers, led by Rebecca Saxe, MIT assistant professor of brain and cognitive sciences, report their findings in the Proceedings of the National Academy of Sciences the week of March 29. Funding for the research came from the National Center for Research Resources, the MIND Institute, the Athinoula A. Martinos Center for Biomedical Imaging, the Simons Foundation and the David and Lucille Packard Foundation.

The study offers “striking evidence” that the right TPJ, located at the brain’s surface above and behind the right ear, is critical for making moral judgments, says Liane Young, lead author of the paper. It’s also startling, since under normal circumstances people are very confident and consistent in these kinds of moral judgments, says Young, a postdoctoral associate in MIT’s Department of Brain and Cognitive Sciences.

“You think of morality as being a really high-level behavior,” she says. “To be able to apply (a magnetic field) to a specific brain region and change people’s moral judgments is really astonishing.”

Thinking of others

Saxe first identified the right TPJ’s role in theory of mind a decade ago — a discovery that was the subject of her MIT PhD thesis in 2003. Since then, she has used functional magnetic resonance imaging (fMRI) to show that the right TPJ is active when people are asked to make judgments that require thinking about other people’s intentions.

In the new study, the researchers wanted to go beyond fMRI experiments to observe what would happen if they could actually disrupt activity in the right TPJ. Their success marks a major step forward for the field of moral neuroscience, says Walter Sinnott-Armstrong, professor of philosophy at Duke University.

“Recent fMRI studies of moral judgment find fascinating correlations, but Young et al. usher in a new era by moving beyond correlation to causation,” says Sinnott-Armstrong, who was not involved in this research.

The researchers used a noninvasive technique known as transcranial magnetic stimulation (TMS) to selectively interfere with brain activity in the right TPJ. A magnetic field applied to a small area of the skull creates weak electric currents that impede nearby brain cells’ ability to fire normally, but the effect is only temporary.

In one experiment, volunteers were exposed to TMS for 25 minutes before taking a test in which they read a series of scenarios and made moral judgments of characters’ actions on a scale of one (absolutely forbidden) to seven (absolutely permissible).

In a second experiment, TMS was applied in 500-millisecond bursts at the moment when the subject was asked to make a moral judgment. For example, subjects were asked to judge how permissible it is for a man to let his girlfriend walk across a bridge he knows to be unsafe, even if she ends up making it across safely. In such cases, a judgment based solely on the outcome would hold the perpetrator morally blameless, even though it appears he intended to do harm.

In both experiments, the researchers found that when the right TPJ was disrupted, subjects were more likely to judge failed attempts to harm as morally permissible. The researchers interpret this as evidence that TMS interfered with subjects’ ability to interpret others’ intentions, forcing them to rely more on outcome information to make their judgments.

“It doesn’t completely reverse people’s moral judgments, it just biases them,” says Saxe.

When subjects instead received TMS to a nearby control region outside the right TPJ, their judgments were nearly identical to those of people who received no TMS at all.

While understanding other people’s intentions is critical to judging them, it is just one piece of the puzzle. We also take into account the person’s desires, previous record and any external constraints, guided by our own concepts of loyalty, fairness and integrity, says Saxe.

“Our moral judgments are not the result of a single process, even though they feel like one uniform thing,” she says. “It’s actually a hodgepodge of competing and conflicting judgments, all of which get jumbled into what we call moral judgment.”

Saxe’s lab is now studying the role of theory of mind in judging situations where the attempted harm was not a physical threat. The researchers are also studying the role of the right TPJ in judgments of people who are morally lucky or unlucky. For example, a drunk driver who hits and kills a pedestrian is unlucky compared with an equally drunk driver who makes it home safely, yet the unlucky driver tends to be judged more morally blameworthy.