Augmented reality is an emerging discipline that uses handheld devices to superimpose digital data on the real world: If, say, you’re in Paris and point your phone at the Eiffel Tower, the tower’s image would pop up on-screen, along with, perhaps, information about its history or the hours that it’s open. With a TV enhancement called Surround Vision, however, researchers at MIT’s Media Lab are bringing the same technology into the living room.

Video: Melanie Gonick

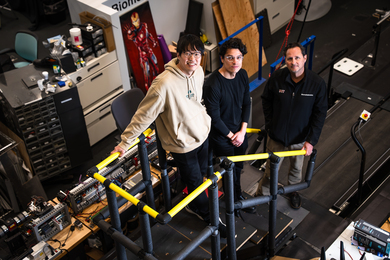

Santiago Alfaro, a graduate student in the lab of Media Lab research scientist Michael Bove, had spent a year investigating the use of cell-phone cameras as digital interfaces when he had an intriguing thought. The soundtracks of contemporary movies, TV shows and video games are often conceived in two dimensions rather than just one: Surround sound technology can allow an audience to, in effect, hear what’s happening off screen. “If you’re watching TV and you hear a helicopter in your surround sound,” Alfaro says, “wouldn’t it be cool to just turn around and be able to see that helicopter as it goes into the screen?”

Surround Vision is intended to work with standard, Internet-connected handheld devices. If a viewer wanted to see what was happening off the left edge of the television screen, she could simply point her cell phone in that direction, and an image would pop up on its screen. The technology could also allow a guest at a Super Bowl party, for instance, to consult several different camera angles on a particular play, without affecting what the other guests see on the TV screen.

To demonstrate the idea, Alfaro shot video footage of the street in front of the Media Lab from three angles simultaneously. A television set replays the footage from the center camera. If a viewer points a motion-sensitive handheld device directly at the TV, the same footage appears on the device’s screen. But if the viewer swings the device either right or left, it switches to one of the other perspectives. The viewer can, for instance, watch a bus approach on the small screen before it appears on the large screen.
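The angle switching described above can be sketched as a mapping from the handheld's compass heading to one of the three camera feeds. This is a minimal sketch, not the Media Lab's code. The feed names, the idea of recording the TV's heading as a reference, and the 20-degree switching threshold are all hypothetical choices made for illustration.

```python
def angle_difference(heading_deg: float, reference_deg: float) -> float:
    """Signed angle from reference to heading, wrapped into the -180..180 range."""
    return (heading_deg - reference_deg + 180.0) % 360.0 - 180.0


def select_feed(heading_deg: float, tv_heading_deg: float,
                threshold_deg: float = 20.0) -> str:
    """Pick which camera's footage the handheld should show.

    heading_deg:    current compass heading of the handheld (clockwise from north).
    tv_heading_deg: heading recorded while the viewer pointed at the TV (assumed).
    threshold_deg:  hypothetical swing needed to leave the center view.
    """
    offset = angle_difference(heading_deg, tv_heading_deg)
    if offset <= -threshold_deg:
        return "left"    # swung counterclockwise, toward the viewer's left
    if offset >= threshold_deg:
        return "right"   # swung clockwise, toward the viewer's right
    return "center"      # roughly aimed at the TV: mirror the TV's camera
```

Wrapping the difference matters because compass headings jump from 359 to 0 degrees. A TV due north of the viewer would otherwise make a small leftward swing look like a 350-degree turn. A production system would probably also add hysteresis, so that the picture doesn't flicker between feeds when the viewer hovers near a threshold.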

Alfaro and Bove envision that, if the system were commercialized, the video playing on the handheld device would stream over the Internet: TV service providers wouldn’t have to modify their broadcasts or their set-top boxes. The handheld would simply use its built-in camera to determine its orientation toward the TV and the selected channel; for both purposes, it might take its cues from the logos that most stations display in the lower right corner of the TV screen. Viewers who opted not to use the enhanced programming need never know they were missing anything. Those who did use it wouldn’t need anything other than a smart phone and a standard Wi-Fi connection.
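If the handheld's camera can locate a station logo in its frame, a pinhole-camera model gives the horizontal bearing of the TV relative to the direction the camera is facing. The sketch below assumes that a separate, hypothetical logo detector has already reported the logo's pixel column and that the camera's horizontal field of view is known. Neither detail comes from the article, and the function name is illustrative.

```python
import math


def bearing_to_logo(logo_x_px: float, image_width_px: int,
                    horizontal_fov_deg: float) -> float:
    """Horizontal angle (degrees) from the camera's optical axis to the logo.

    Positive means the logo lies to the right of where the camera is aimed;
    negative means it lies to the left. Assumes an ideal pinhole camera:
    no lens distortion, principal point at the image center.
    """
    cx = image_width_px / 2.0
    # Focal length in pixels, derived from the field of view.
    fx = cx / math.tan(math.radians(horizontal_fov_deg) / 2.0)
    return math.degrees(math.atan2(logo_x_px - cx, fx))
```

Because the logo sits in a corner of the screen rather than at its center, a real system would likely correct for that offset, for example by using the logo's apparent size to estimate the screen's extent. This sketch ignores that correction.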

Many existing handheld devices have built-in motion detectors called accelerometers. But switching between viewing angles with the Surround Vision system seems to involve motion too subtle for accelerometers to register. To get his prototype up and running, Alfaro had to attach a magnetometer (a compass) to an existing handheld device and write software that combined its readings with data from the device’s other sensors. But Alfaro and Bove say that devices now on the market, including the most recent version of the iPhone, have magnetometers built in. The researchers have answered most of the technical questions about the system; now their chief concern is determining how best to use it.
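One common way to combine magnetometer data with accelerometer data is a tilt-compensated compass. The accelerometer supplies the direction of up, which lets the magnetic reading be resolved into a heading even when the device is not held flat. The article does not say how Alfaro's software fused its sensors, so this is a swapped-in textbook technique rather than his method. The function name and the default pointing axis are hypothetical.

```python
import math

Vec3 = tuple[float, float, float]


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def normalize(v: Vec3) -> Vec3:
    n = math.sqrt(dot(v, v))
    if n == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return (v[0] / n, v[1] / n, v[2] / n)


def tilt_compensated_heading(accel: Vec3, mag: Vec3,
                             pointing: Vec3 = (0.0, 1.0, 0.0)) -> float:
    """Heading of `pointing`, in degrees clockwise from magnetic north.

    accel:    accelerometer reading with the device roughly at rest; it points
              up (opposite gravity), expressed in the device's own axes.
    mag:      magnetometer reading in the same device axes.
    pointing: device axis aimed at the screen (hypothetical default).
    The result is undefined if `pointing` is close to vertical.
    """
    up = normalize(accel)
    # The field points north and, away from the equator, partly downward;
    # crossing it with up cancels the vertical part and leaves east.
    east = normalize(cross(mag, up))
    # up x east = north; both are unit length and orthogonal, so no renormalizing.
    north = cross(up, east)
    # Dotting with two horizontal axes discards the vertical part of `pointing`,
    # so tilting the device up or down leaves the heading unchanged.
    return math.degrees(math.atan2(dot(pointing, east), dot(pointing, north))) % 360.0
```

The result is relative to magnetic north, not true north. That is enough for this purpose, because only the change in heading relative to the TV matters.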

To that end, they plan a series of user studies in the spring and summer, which will employ content developed in conjunction with a number of partners. Bove won’t name names, but, he says, “We’re looking at sports; we’re looking at children’s programming, both live action and cartoons; we’re looking at, let’s say, ordinary entertainment programs, as well as programs shot in a studio like talk shows. And we hope to have examples of several of these fairly soon. There are also one or two other things that defy categorization right now, that you sort of have to see in order to understand what they are.”

Since sports broadcasts and other live television shows already feature footage taken from multiple camera angles, they’re a natural fit for the system. Many children’s shows already encourage a kind of audience participation that Surround Vision could enhance, Bove says, and viewers of the criminal-forensics shows now popular could use it to, say, see what the protagonists are looking at through a microscope.

Of course, today’s TV shows frequently feature short scenes with quick cuts between different camera angles, which would allow little time for exploration of the virtual environment. But “the system as it stands should not be a constant feature,” Alfaro says. “We can’t ask a user to constantly hold a device up at arm’s length: They will quickly tire and not use it at all.” Alfaro suggests that as TV directors grew to appreciate the system’s strengths, they would likely modify their styles to take advantage of them.

One partner likely to participate in the user studies is Boston’s public-television station WGBH, which has a long history with Bove’s lab. “We always learn from working with Mike and his group,” says Annie Valva, WGBH’s director of technology and interactive multimedia. Whether or not an experimental technology leads directly to new applications, it allows the station’s staff to “look at content in the archives from a different perspective, because there’s another emerging platform to make it available,” Valva says. “The broadcast is a very limited medium, and oftentimes what we put on is what makes the best story, and what fits in the programming schedule. But with a technology like Surround Vision, it helps us leverage more value over things that we shoot and create but don’t happen to get to air.”

If the user studies conducted by Bove’s group converge on some applications that resonate with viewers, however, Surround Vision could prove to be something more than a spur to the imagination. “In the Media Lab, and even my group, there’s a combination of far-off-in-the-future stuff and very, very near-term stuff, and this is an example of the latter,” Bove says. “This could be in your home next year if a network decided to do it.”

Using ordinary cell phones, a Media Lab system would let television programs spill off the TV screen and into the living room.

Caption:

In the same way that surround sound lets TV viewers hear what’s happening just off-screen, a new system from the Media Lab gives them the option of watching what’s happening, too, on the screen of a handheld device.

Credits:

Image: Melanie Gonick