NEW TECHNIQUE FOR IMAGING STEEL

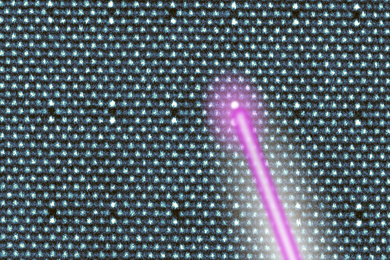

MIT researchers have developed a technology that may improve the productivity of the aluminum and steel industries.

Most aluminum and steel is produced through continuous and/or semicontinuous casting, in which molten metal is drawn from a large container and simultaneously cooled and pulled out of the liquid to form a solid strand of material up to 24 inches thick. The point at which liquid metal becomes solid (the solidification front) is a critical area for production. The shape, location and stability of the front affect such things as cracking of the solidified metal.

The ability to track the front in real time could therefore have a significant economic impact. It could, for example, permit as much as a 10 percent increase in casting productivity.

Enter the technology invented by Professor Jung-Hoon Chun and Dr. Nannaji Saka of the Department of Mechanical Engineering, and Dr. Richard Lanza of the Department of Nuclear Engineering. The tomographic-imaging technique, patented this year, uses high-energy X-rays to find the front in a process similar to that for CAT scans. Ordinary X-ray sources cannot penetrate the thick strands of steel, so a compact linear accelerator is used to produce the X-rays.

Funding for the work is provided by the NSF, the Idaho National Engineering Laboratory, Alcoa, Reynolds Metals, US Steel, Inland Steel and Ipsco. Other support comes from the American Iron and Steel Institute and Analogic.

(Source: Know Nukes, the Department of Nuclear Engineering newsletter)

COMPUTER 'WIZARD' INTERACTS WITH PEOPLE

Gandalf is a computer character capable of face-to-face interaction with people in real time, perceiving their gestures, speech and gaze. He was developed by a recent MIT graduate working with Professor Justine Cassell, head of the MIT Media Lab's Gesture and Narrative Language group.

The aim of the research is to enable people to interact with computers the same way they interact with other humans. "When we look closely at how people communicate, we find them using not only the spoken word, but also intonation, pauses, and facial and body gestures," Professor Cassell said. "If computers could understand these non-verbal communications, they could adapt to us, instead of making us adapt to them." Currently, to interact with Gandalf the user must wear a body-tracking suit, an eye tracker and a microphone. Eventually this equipment will become unnecessary as computer-vision systems become able to perceive the user's visual and auditory behavior.

Gandalf can now answer questions about the planets of the solar system. Future work includes adding more complex natural language understanding and generation, and an increased ability to follow dialogue in real time. The work is funded by sponsors of the MIT Media Lab. For more information, go to the Web page at

(Source: Ellen Hoffman, Frames, a publication of the MIT Media Lab)

A version of this article appeared in MIT Tech Talk on November 6, 1996.