When it comes to programming video-game characters to act realistically in response to ever-changing environments, there is only so much current artificial intelligence (AI) can do.

Even skilled AI programmers can devote years to a single game. They have to consider all the events that might occur and map out characters’ possible reactions: In a first-person shooter game, for example, the AI-controlled character needs to know how to collect ammunition, seek its target, get within firing range and then escape.

What if making certain kinds of AI didn’t have to be that laborious? What if an algorithm, extrapolating from a few decisions made by players, could figure things out for itself — and even reuse those lessons from one game to the next?

And what if all this could be done by someone with no AI training at all?

“Robotany,” a game prototype from the Singapore-MIT GAMBIT Game Lab, wants to answer those questions.

Set in a garden, the game features small, robot-like creatures that take care of plants. The player manipulates graphs of the robots’ three sensory inputs — three overlapping AIs — and these manipulations teach the AIs how to direct characters in new situations.

“The scheme behind Robotany requires that we ask the user to describe what the AI should do in just a few example situations, and our algorithm deduces the rest,” says the game’s product owner, GAMBIT Game Lab’s Andrew Grant. “In essence, when faced with something the user hasn’t described, the algorithm finds a similar situation that the user did specify, and goes with that.”
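Grant’s description resembles a nearest-neighbor lookup, and a rough sketch can make the idea concrete. The Python below is an illustration only, not Robotany’s actual code: the function name `nearest_action`, the three sensor names, the action labels and the use of straight-line (Euclidean) distance to measure similarity are all assumptions made for this example.

```python
import math

def nearest_action(examples, situation):
    """Return the action attached to the example closest to `situation`.

    `examples` is a list of (sensor_readings, action) pairs supplied by
    the player. Similarity is measured here with Euclidean distance,
    which is an assumption made for this sketch.
    """
    best_action, best_dist = None, math.inf
    for readings, action in examples:
        dist = math.dist(readings, situation)
        if dist < best_dist:
            best_action, best_dist = action, dist
    return best_action

# Hypothetical sensor readings (light, moisture, crowding), each from 0 to 1,
# paired with hypothetical actions the player chose for those situations.
examples = [
    ((0.9, 0.1, 0.2), "water_plant"),
    ((0.2, 0.8, 0.1), "move_to_light"),
    ((0.5, 0.5, 0.9), "spread_out"),
]

# A situation the player never described: the closest example decides.
print(nearest_action(examples, (0.8, 0.2, 0.3)))
```

With only three examples, every new situation still gets an answer, which is the point Grant makes: the player describes a handful of cases and the lookup covers the rest.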

The game was developed as part of GAMBIT’s eight-week summer program, which brings together young artists, programmers and project managers from U.S. and Singaporean institutes.

Robotany’s 11-person team pushed game research in a unique direction by taking advantage of the human brain’s ability to identify patterns.

“With our approach,” Grant says, “we can drastically reduce the number of examples we need to make an interesting AI, well before you’d traditionally get anything good.”

Game director Jason Begy adds, “The player can effectively give the characters some instructions and then walk away indefinitely while the game runs.”

Other AI developers are enthusiastic about this new approach.

“Robotany represents a great new direction for game AI,” says Damian Isla, who was the artificial intelligence lead at Bungie Studios, makers of the “Halo” franchise. “The AIs’ brains are grown organically with help from the player, rather than painstakingly rebuilt from scratch each time by an expert programmer.”

MIT Media Lab researcher and GAMBIT summer program alumnus Jeff Orkin says solving this kind of challenge would be “one of the holy grails of AI research,” since the video game industry spends an incredible amount of time and money micromanaging the decisions that characters make. “It would be a boon to the game industry, as long as the system still provided designers with an acceptable degree of control,” he says.

The Robotany team, honored as a finalist in the student competition at the upcoming Independent Games Festival, China, also included producer Shawn Conrad of MIT; artists Hannah Lawler of the Rhode Island School of Design, Benjamin Khan of Singapore’s Nanyang Technological University and Hing Chui of the Rhode Island School of Design; quality assurance lead Michelle Teo of Ngee Ann Polytechnic; designer Patrick Rodriguez of MIT; programmers Biju Joseph Jacob of Nanyang Technological University and Daniel Ho of the National University of Singapore; and audio designer Ashwin Ashley Menon of Republic Polytechnic.

Resources

Video trailer

http://www.youtube.com/watch?v=K8zSY5kMscI

Poster (PDF)

http://gambit.mit.edu/images/a4_robotany.pdf

Poster thumbnail

http://gambit.mit.edu/images/a4_robotany_tmb.jpg

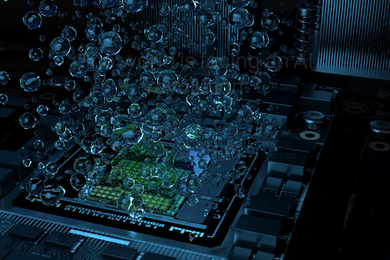

Gameplay image

http://gambit.mit.edu/images/robotany5.jpg