Neuroscientists at MIT and Harvard have made the surprising discovery that the brain sees some faces as male when they appear in one area of a person’s field of view, but female when they appear in a different location.

The findings challenge a longstanding tenet of neuroscience — that how the brain sees an object should not depend on where the object is located relative to the observer, says Arash Afraz, a postdoctoral associate at MIT’s McGovern Institute for Brain Research and lead author of a new paper on the work.

“It’s the kind of thing you would not predict — that you would look at two identical faces and think they look different,” says Afraz. He and two colleagues from Harvard, Patrick Cavanagh and Maryam Vaziri Pashkam, described their findings in the Nov. 24 online edition of the journal Current Biology.

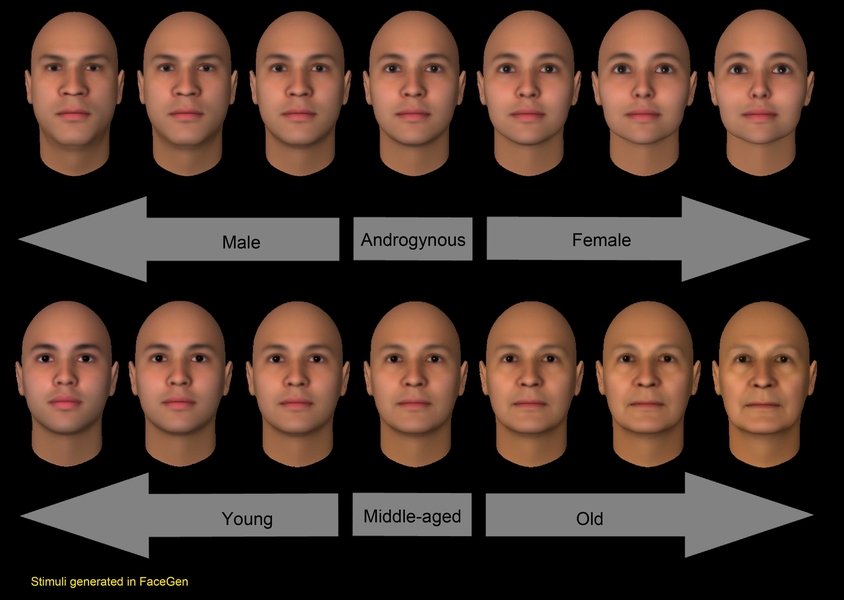

In the real world, the brain’s inconsistency in assigning gender to faces isn’t noticeable, because there are so many other clues: hair and clothing, for example. But when people view computer-generated faces stripped of all other gender-identifying features, a pattern of biases tied to the location of the face emerges.

The researchers showed subjects a random series of faces, ranging along a spectrum from very male to very female, and asked them to classify each face by gender. For the more androgynous faces, subjects classified the same face as male or female depending on where it appeared.

Study participants were told to keep their gaze fixed on the center of the screen while faces were flashed elsewhere on the screen for 50 milliseconds each. Assuming that the subjects sat about 22 inches from the monitor, the faces appeared to be about three-quarters of an inch tall.
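Vision scientists usually describe stimulus size as visual angle rather than physical size, using θ = 2·atan(s / 2d) for an object of size s viewed from distance d. A minimal sketch of that conversion, applied to the article's approximate figures (0.75 inch at 22 inches), gives roughly 2 degrees. This is our own arithmetic on the numbers above, not a value reported by the study.

```python
import math

def visual_angle_deg(size: float, distance: float) -> float:
    """Visual angle (in degrees) subtended by an object of `size` seen from
    `distance`; both arguments must use the same unit (inches here)."""
    return math.degrees(2 * math.atan(size / (2 * distance)))

# Approximate figures from the text: faces ~0.75 in tall, viewed from ~22 in.
angle = visual_angle_deg(0.75, 22.0)
print(f"{angle:.2f} degrees")  # prints "1.95 degrees"
```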

The patterns of male and female biases differed from person to person. Some people judged androgynous faces as female every time they appeared in the upper right corner, for example, while others judged faces in that same location as male. Subjects also showed biases when judging the age of faces, but in each individual the pattern of age bias was independent of the pattern of gender bias.

Sample size

Afraz believes this inconsistency in identifying gender comes down to undersampling, a problem familiar from statistical tools such as opinion polls. For example, if you surveyed 1,000 Bostonians, asking whether they were Democrats or Republicans, you would probably get a fairly accurate picture of the split in the city as a whole, because the sample is large. However, if you took a much smaller sample, perhaps five people who live across the street from you, you might get 100 percent Democrats or 100 percent Republicans. “You wouldn’t have any consistency, because your sample is too small,” says Afraz.
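The polling analogy is easy to check with a quick simulation. The sketch below assumes a hypothetical city in which 60 percent of residents are Democrats (an illustrative number, not a real Boston figure), runs many simulated polls of 5 people and of 1,000 people, and compares how widely the estimates scatter. It also computes the chance that all five respondents in a tiny poll give the same answer.

```python
import random
import statistics

def poll_estimates(p_true, sample_size, n_polls, rng):
    """Run `n_polls` simulated polls and return the fraction of respondents
    answering 'Democrat' in each one."""
    estimates = []
    for _ in range(n_polls):
        hits = sum(rng.random() < p_true for _ in range(sample_size))
        estimates.append(hits / sample_size)
    return estimates

def prob_unanimous(p_true, sample_size):
    """Probability that every respondent in one poll gives the same answer."""
    return p_true ** sample_size + (1 - p_true) ** sample_size

rng = random.Random(0)
P_TRUE = 0.6  # hypothetical city-wide share of Democrats

for n in (5, 1000):
    est = poll_estimates(P_TRUE, n, n_polls=200, rng=rng)
    print(f"n={n:4d}: mean={statistics.mean(est):.3f}, stdev={statistics.stdev(est):.3f}")

print(f"P(all 5 agree) = {prob_unanimous(P_TRUE, 5):.3f}")  # 0.6**5 + 0.4**5 = 0.088
```

Both poll sizes average out near 0.6, but the five-person estimates scatter roughly √(1000/5) ≈ 14 times more widely, as the standard-error formula √(p(1−p)/n) predicts, and nearly one tiny poll in eleven comes back unanimous.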

He believes the same thing happens in the brain. In the visual cortex, where images are processed, cells are grouped by which part of the visual scene they analyze. Within each of those groups, there is probably a relatively small number of neurons devoted to interpreting the gender of faces. The smaller the image, the fewer of those cells are activated, so the judgment rests on just a handful of them: in one part of the visual cortex, cells that respond to female faces may happen to dominate, while in another part, cells that respond to male faces may dominate.

“It’s all a matter of undersampling,” says Afraz.
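Afraz's undersampling idea can be illustrated with a toy model. This is our own sketch, not the model from the paper: it assumes that each location in the visual field has its own small pool of gender-tuned cells, that each cell is equally likely to prefer male or female faces, and that an androgynous face is labelled by whichever preference holds the majority at that location. The names `pool_votes`, `bias_map` and `mean_imbalance` and the pool sizes are hypothetical.

```python
import random

def pool_votes(neurons, rng):
    """Count how many of `neurons` randomly tuned cells prefer female faces.
    Assumption: each cell prefers male or female with probability 0.5."""
    return sum(rng.random() < 0.5 for _ in range(neurons))

def bias_map(n_locations, neurons_per_location, rng):
    """Label an androgynous face at each location by the majority preference
    of that location's cell pool (an odd pool size avoids ties)."""
    labels = []
    for _ in range(n_locations):
        female = pool_votes(neurons_per_location, rng)
        labels.append("F" if 2 * female > neurons_per_location else "M")
    return labels

def mean_imbalance(neurons_per_location, n_locations, rng):
    """Average net preference |female - male| / total across locations."""
    total = 0.0
    for _ in range(n_locations):
        female = pool_votes(neurons_per_location, rng)
        total += abs(2 * female - neurons_per_location) / neurons_per_location
    return total / n_locations

# Two hypothetical observers: different random seeds stand in for different brains.
for subject in ("A", "B"):
    print(subject, bias_map(n_locations=8, neurons_per_location=9, rng=random.Random(subject)))

# Small pools give a strong, arbitrary net bias; large pools average it away.
rng = random.Random(0)
for pool in (9, 1001):
    print(pool, round(mean_imbalance(pool, n_locations=200, rng=rng), 3))
```

With pools of 9 cells, the net preference at a typical location is around 27 percent and its direction varies arbitrarily from location to location and from observer to observer; with pools of 1,001 cells it shrinks to a few percent. In this model, drawing a separate set of pools for age would yield a map unrelated to the gender map, but whether real neurons behave this way is the open question Tarr raises below.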

Michael Tarr, codirector of the Center for the Neural Basis of Cognition at Carnegie Mellon University, says the findings add to the growing evidence that the brain is not always consistent in how it perceives objects under different circumstances. He adds that the study leaves unanswered the question of why each person develops different bias patterns. “Is it just noise within the system, or is some other kind of learning occurring that they haven’t figured out yet?” asks Tarr, who was not involved in the research. “That’s really the fascinating question.”

Afraz and his colleagues looked for correlations between each subject’s bias pattern and other traits such as gender, height and handedness, but found no connections.

He is now doing follow-up studies in the lab of James DiCarlo, associate professor of neuroscience at MIT, including an investigation of whether brain cells can be recalibrated to respond to faces differently.
