Whether a machine or human is on the receiving end of the sound of a word, there are some things that have to happen for the word to be understood, said MIT speech expert Kenneth N. Stevens, one of the plenary speakers at "The Speech Chain," the 13th annual Health Sciences and Technology forum held at MIT last Thursday.

Also at the forum, more than 50 MD and PhD scholars hosted a poster session on their work, which ranges from developing handheld computers for better patient care to using ultrasound as a noninvasive cancer therapy.

To understand words, both humans and machines need an intact auditory system, a knowledge of language and a lexicon in which to search for the meaning of the word, said Dr. Stevens, the Clarence J. Lebel Professor of Electrical Engineering and Computer Science. He also is principal investigator in the Speech Communication Group of the Research Laboratory of Electronics and a faculty member in the Harvard-MIT Division of Health Sciences and Technology, which sponsored the forum.

One thing that may aid machines of the future in better "understanding" human speech is an acoustic analysis system that varies for consonants and vowels, Professor Stevens said. This would help the machine pick up the same kind of cues people use to determine which letter they just heard, especially similar-sounding letters such as B, D and G. Right now, he said, speech recognition systems use the same method of analysis for all sounds.

Professor Stevens, who yesterday received a National Medal of Science from President Clinton in Washington, DC, first became interested in speech communication when he came to the Acoustics Laboratory at MIT as a graduate student.

He was influenced by Gunnar Fant, a visitor at the laboratory, as well as by a number of other acousticians, linguists, psychologists and engineers. His doctoral thesis research on the perception of speech-like sounds was the beginning of a long-term interest in speech perception, including perceptual cues for vowels and consonants.

Professor Stevens's background in acoustics led to the study of how sounds were generated in the vocal tract. Interaction with MIT linguists Morris Halle and Jay Keyser gave him an appreciation for the underlying discrete nature of speech events, and he became interested in the fact that some of this discreteness is represented rather directly in the acoustic speech stream.

This relation between the discrete linguistic representation of speech and its articulatory and acoustic manifestation has motivated his research in recent years. It also has led to more application-oriented research on speech synthesis, on models for lexical access in running speech, and on the application of knowledge of articulatory-acoustic relations in the study of speech disorders.

ACOUSTIC CUES

People rely on cues such as where the tongue body hits the roof of the mouth in forming a letter to help figure out what sounds and words they are hearing. For instance, the tongue is released from the roof of the mouth more slowly for a "G" sound than for a "D" sound, Professor Stevens said.

The entire shape and movements of the mouth change for vowels and for consonants, he said. The mouth is open for a vowel and narrowed for a consonant, and the way we say vowels or consonants changes depending on the string of words in the sentence.

An analysis of sound waves shows that there are no boundaries between the speech sounds in a sentence; the waves look like one continuous stream. Depending on the order of the words, vowels tend to get shortened and dropped. Even individual letters sound different based on where they fall within words. The "S" of school, for instance, is subtly influenced by the coming "oo" sound. "Various articulatory movements start overlapping and influencing each other," he said.

Sometimes consonants sandwiched between certain other sounds almost disappear, such as the "T" in "gently." But each feature of each word segment is represented, no matter how faintly.

The variability of human speech seems endless, but Professor Stevens said it is not random. Humans are working with an "analog system with a bandwidth of only a few hertz," he said, but speakers can introduce all kinds of variability into their speech. We need to perceive as many as 10 or 15 features of the sounds we hear to identify a single word, he said.

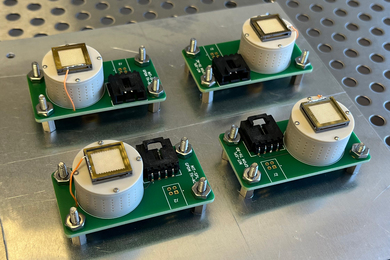

Also at the plenary session, HST faculty member Donald K. Eddington, associate professor of otology and laryngology at Harvard Medical School and principal research scientist at MIT's Research Laboratory of Electronics, spoke about the cochlear implant, a neural prosthesis for the deaf. Cochlear implants have made major strides in recent years toward providing a significant level of hearing for those who have lost hearing sometime after childhood.

A version of this article appeared in MIT Tech Talk on March 15, 2000.