This article is reprinted with permission from the September 1999 issue of Electrical Engineering and Computer Science, the department's newsletter.

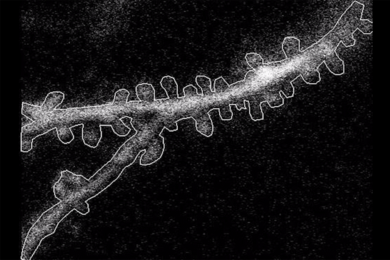

The inner ear is sensitive to sounds that vibrate the eardrum by less than the radius of a hydrogen atom, though the mechanisms by which this happens are not fully understood. Now, Associate Professor Dennis M. Freeman and colleagues in the Research Laboratory of Electronics' Auditory Physiology Research group have devised methods to "see" the motions of inner ear cells that barely blur high-resolution images from an optical microscope.

The key to the approach is the marriage of the computer with video microscopy. To gain insight into the signal-processing functions of the ear, Professor Freeman has devised techniques to make slow-motion, three-dimensional movies of sensory cells and their neighbors during sound stimulation. The movies resolve not only the motions of cells but also individual motions of the 50 to 100 microscopic sensory hairs that protrude from each sensory cell.

Analyzing these movies with algorithms from machine vision yields quantitative measurements accurate to a billionth of a meter. These measurements allow direct tests of how the million moving parts in each of our ears cooperate to provide our remarkable sense of hearing.

Many factors contributed to Professor Freeman's investigations of auditory physiology. "My family has a history of hearing problems, and I have a slight hearing problem myself," said Dr. Freeman, the W.M. Keck Career Development Associate Professor in Biomedical Engineering in the Department of Electrical Engineering and Computer Science.

He started out working with Dr. Lou Braida, the Henry Ellis Warren Professor of Electrical Engineering, on making a better hearing aid. The signal processing worked exactly as planned, but it didn't seem to help people with hearing loss. Frustrated by the setback, Professor Freeman decided to take a month off to study how the ear worked.

"I wanted to work on figuring out how the ear processed sound, with the goal of providing information that would help engineer better hearing aids. It turned out that the answers to my questions were hard to find, and it became a career in itself to understand the physiology.

"I really wanted to understand the neural code for sound, but that was already known to be quite complicated. Evidence suggested that motions of cells might be important," Professor Freeman said.

At this point, his focus shifted to physiological modeling of the hydrodynamics of sensory cells in the inner ear. He analyzed mathematical models to determine how the mechanically sensitive parts of the sensory hair cells should move. However, after the models were analyzed, there were no data available to test the theory. So the next step was to figure out how to get experimental data.

To measure motions as small as nanometers, Professor Freeman and his colleagues use video microscopy. The development of the charge-coupled device (CCD) camera combined high image quality with video, but motions in the ear are much smaller than the pixels of a camera. Working on an entirely different class of problems, Berthold Horn and his colleagues at the Artificial Intelligence Lab developed powerful algorithms to determine motions from video images, algorithms that can reliably measure motions much smaller than a pixel.
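
To give a feel for how a motion much smaller than a pixel can be recovered from images, here is a minimal one-dimensional sketch. It is not the algorithm used by Professor Horn's or Professor Freeman's groups; it assumes a simple gradient-based least-squares estimate (brightness is taken to be unchanged by the motion), and the names `estimate_shift` and `blob` are made up for this illustration.

```python
import math

def estimate_shift(frame_a, frame_b):
    """Estimate how far frame_b is displaced from frame_a, in pixels.

    Assumes the displacement is small and brightness is conserved, so that
    frame_b[i] - frame_a[i] is roughly -shift * (spatial gradient at i).
    """
    num = 0.0
    den = 0.0
    for i in range(1, len(frame_a) - 1):
        # Spatial gradient: central difference, averaged over both frames.
        gx = ((frame_a[i + 1] - frame_a[i - 1]) +
              (frame_b[i + 1] - frame_b[i - 1])) / 4.0
        # Temporal difference between the two frames at this pixel.
        gt = frame_b[i] - frame_a[i]
        num += gx * gt
        den += gx * gx
    if den == 0.0:
        raise ValueError("image has no spatial contrast")
    # Least-squares solution of gt = -shift * gx over all pixels.
    return -num / den

def blob(n_pixels, center, width):
    """Synthetic smooth bright spot sampled on a pixel grid (illustrative)."""
    return [math.exp(-((i - center) / width) ** 2) for i in range(n_pixels)]

true_shift = 0.05  # one twentieth of a pixel (arbitrary test value)
frame_a = blob(64, 32.0, 6.0)
frame_b = blob(64, 32.0 + true_shift, 6.0)
estimated = estimate_shift(frame_a, frame_b)
print(f"true shift {true_shift} px, estimated {estimated:.4f} px")
```

The estimate works because every pixel along a brightness edge changes slightly when the object moves, and combining all of those small changes pins down a displacement far smaller than the pixel spacing. Real images add noise and other complications that this sketch ignores.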

Only one problem remained. The interesting motions of sensory cells in the inner ear occur at audio frequencies, faster than the fastest commercial cameras can capture. To overcome this problem, Professor Freeman uses stroboscopic illumination to slow the apparent motion. The result is slow-motion, three-dimensional movies of inner ear motions.
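
The idea behind stroboscopic slowing can be sketched numerically. The example below is only an illustration under stated assumptions, not a description of the laboratory's apparatus: the motion is taken to be a pure sinusoid, the light is assumed to flash once every `cycles_between_flashes` stimulus cycles plus a small phase step, and the function name and all parameter values are hypothetical.

```python
import math

def strobe_samples(stim_hz, cycles_between_flashes, phases_per_cycle,
                   n_flashes, amplitude=1.0):
    """Positions of a sinusoidally moving object at each strobe flash.

    Each flash arrives a whole number of stimulus cycles after the previous
    one, plus 1/phases_per_cycle of a cycle, so successive flashes catch the
    motion at successively later phases.
    """
    period = 1.0 / stim_hz
    flash_interval = period * (cycles_between_flashes + 1.0 / phases_per_cycle)
    times = [k * flash_interval for k in range(n_flashes)]
    positions = [amplitude * math.sin(2.0 * math.pi * stim_hz * t)
                 for t in times]
    return flash_interval, positions

# Assumed example values: a 1 kHz motion sampled at 8 phases per cycle.
stim_hz = 1000.0
phases = 8
flash_interval, positions = strobe_samples(stim_hz, 100, phases, 16)

flash_rate_hz = 1.0 / flash_interval
apparent_hz = 1.0 / (phases * flash_interval)
print(f"flash rate {flash_rate_hz:.2f} Hz, apparent motion {apparent_hz:.3f} Hz")
for k, x in enumerate(positions[:phases]):
    print(f"flash {k}: position {x:+.3f}")
```

With these assumed values the flashes occur roughly ten times per second, a rate an ordinary camera can follow, yet the sequence of flashed positions steps through one full cycle of the 1 kHz motion every eight flashes, so the motion appears slowed by a large factor.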

He and his group have demonstrated motion measurements with much greater precision than had previously been thought possible. Using methods similar to those of their auditory research, they have extended their work into the realm of microelectromechanical systems (MEMS), including micro-fabricated silicon structures that measure acceleration and angular velocity.

THE FUTURE

The invention of the transistor made it possible to build much smaller electronic devices than before. The development of very large scale integration (VLSI) is perhaps even more significant: it is inconceivable that one could fabricate a modern computer with discrete components. MEMS are allowing the large-scale integration of not just electronic, but also mechanical, optical and fluidic devices.

"What if we could make a machine with a million moving parts? Will VLSI have the same enormous effect on mechanics, optics, and fluidics that it had on electronics?" Professor Freeman said. "I suspect yes. After all, the inner ear is a biological VLSI micromechanical system. Why shouldn't we use the same ideas in making artificial systems? And our toolbox is getting bigger. It is inevitable that we will learn to assemble biological parts into artificial machines."

Today, molecular biologists can determine the structure of an ion channel and its exact sequence of amino acids. Then they can manipulate and change its structure, and figure out the relation between a molecule's structure and function. This biological endeavor looks a lot more like engineering than did any aspect of biology just 10 years ago.

Professor Freeman's research is sponsored by the NIH (the hearing project) and DARPA (the MEMS project).

A version of this article appeared in MIT Tech Talk on September 22, 1999.