MIT engineers and colleagues from Brigham and Women's Hospital, Harvard Medical School and TASC Inc. are developing the equivalent of X-ray vision for surgeons, giving them the ability to see inside a patient before the first cut.

With the new system, an outgrowth of defense-related research on computer vision, a surgeon can tell the exact location of structures such as critical blood vessels and tumors. Knowing precisely where to cut lets the surgeon make an operation less invasive, which in turn should reduce trauma to the patient and shorten hospital stays.

The system, which provides a 3D map of a patient's internal anatomy superimposed like a transparency over live video of the patient, is already being used in pre-operative planning for brain surgery at Brigham and Women's Hospital. Soon the developers hope to bring the full system into the operating room to aid surgeons during an actual procedure.

The researchers believe that their system could also be useful for other surgeries, like sinus surgery, where surgeons have a limited view of where their instruments are working. Another application in development is using the system to track the growth of tumors or multiple-sclerosis (MS) lesions.

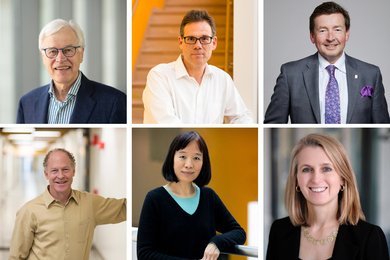

The MIT researchers involved in the work are Professors Eric L. Grimson and Tomas Lozano-Perez of the Department of Electrical Engineering and Computer Science (EECS) and graduate student Gil J. Ettinger of EECS and TASC (the MIT researchers are also affiliated with the Artificial Intelligence Laboratory).

The main collaborators are Steve J. White of TASC, and the following researchers from Brigham and Women's Hospital and Harvard Medical School: William M. Wells III, Ron Kikinis, MD, and Ferenc Jolesz, MD (all of the Radiology Department), and Langham Gleason, MD, and Peter Black, MD, neurosurgeons from the Surgery Department.

This month the researchers published a paper on the work in the Proceedings of the First International Conference on Computer Vision, Virtual Reality and Robotics in Medicine.

PRECISE ALIGNMENT

At the heart of the system is software that allows precise registration, or alignment, of images. "Our algorithm gives us a totally automatic way of taking a view of a patient, and taking a model of the 3D internal anatomy of that patient, and exactly lining them up," Professor Grimson said.

Developing a 3D model of a patient's internal anatomy, which is done via magnetic resonance imaging (MRI), is not new. But until now surgeons haven't been able to automatically transfer that model to the patient. "That's where we come in," Professor Grimson said. "We figure out how to take that 3D model and translate it and rotate it so that it will line up exactly with the patient."
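The article does not say how the team's algorithm computes that rotation and translation. As a point of reference only, here is a minimal sketch of a standard least-squares rigid fit (the Kabsch, or SVD, method). It rests on a strong simplifying assumption: we already know which model point corresponds to which measured patient point. Pairings between a laser scan and an MRI model are not known in advance, so a real system must also solve that matching problem. The helper `rigid_register` and the sample points are hypothetical illustrations, not the researchers' method.

```python
import numpy as np


def rigid_register(model_pts, patient_pts):
    """Return (R, t) minimizing sum ||R @ m_i + t - p_i||^2 (Kabsch method).

    Hypothetical helper: assumes row i of model_pts and row i of patient_pts
    are the same anatomical point, which a real system would have to infer.
    """
    M = np.asarray(model_pts, dtype=float)
    P = np.asarray(patient_pts, dtype=float)
    m_mean, p_mean = M.mean(axis=0), P.mean(axis=0)

    # Cross-covariance of the centered point sets (3x3).
    H = (M - m_mean).T @ (P - p_mean)
    U, _, Vt = np.linalg.svd(H)

    # Flip the last axis if needed so R is a proper rotation, not a mirror image.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T

    # Translation carries the rotated model centroid onto the patient centroid.
    t = p_mean - R @ m_mean
    return R, t


# Usage: rotate a few model points 30 degrees about z, shift them, then recover the motion.
theta = np.radians(30.0)
R_true = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                   [np.sin(theta),  np.cos(theta), 0.0],
                   [0.0,            0.0,           1.0]])
t_true = np.array([10.0, -5.0, 2.0])
model = np.array([[0.0, 0.0, 0.0], [50.0, 0.0, 0.0], [0.0, 80.0, 0.0], [0.0, 0.0, 60.0]])
patient = model @ R_true.T + t_true

R, t = rigid_register(model, patient)
print(np.allclose(R, R_true), np.allclose(t, t_true))  # True True
```

Solving for rotation and translation separately in this way (centering both point sets first) is what makes the closed-form answer possible. The determinant check keeps the result a physical rotation rather than a reflection.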

To date, the researchers have used the system to help plan brain surgeries for 10 patients at Brigham and Women's Hospital. First, they scan the patient's head with a laser that acquires 3D data on its exterior surface. Using that data, they can then automatically align the 3D MRI model of the patient's internal anatomy with live video of the patient's head.

The resulting image is displayed on a monitor directly in front of the surgeon. Then, as the surgeon watches the monitor, he can trace onto the patient's shaved head the position of the tumor and other significant structures. Because the video is live, he is able to watch his own hand on the monitor making these marks. "It takes a bit of hand-eye coordination, but surgeons are great at that," Professor Grimson said.

He noted that all of the patients have been "extremely enthusiastic" about the new technique. This isn't surprising: standard procedure in neurosurgery for pinpointing the location of a tumor requires that patients be fitted with a metal cage that is screwed into the skull. (MRI scans of the skull and cage are then used to determine where the surgeon should cut.)

INTO THE OR

The next step will be to move the full system into the operating room. "It will provide a way for surgeons to check where they are during an operation," Professor Grimson said. "For example, if the tumor is in a tough place, the surgeon could get partway in and then check the monitor to determine if he's getting close to a critical structure" like a major blood vessel.

Part of the system is already being used in the OR at the Brigham. During a procedure, surgeons there can view the superimposed image of the patient and his internal structures on a monitor. But the registration that creates this image is currently done manually on the computer, a process that is time-consuming and often inaccurate. "We're about to replace that with the full system, which will do the registration automatically," Professor Grimson said. That will make the process much more efficient: the automatic registration the researchers use in pre-operative planning takes only a few minutes.

Future plans could include replacing the monitor with virtual-reality goggles. Through them the surgeon would see the patient's head with the 3D map of internal structures superimposed over it. "And as the surgeon moved around, the 3D map would also move so that it's always in registration with what the surgeon is looking at," Professor Grimson said.

"We have this joke that it's like having X-ray vision, but that's really what we're doing."

OTHER APPLICATIONS

The researchers are also exploring applications beyond brain surgery. They believe the system could help in operations where surgeons have only a limited view of where their instruments are working. One example is endoscopic surgery, in which instruments are inserted through small openings in the body and threaded to the area of interest. Currently, surgeons see inside using a small fiber-optic scope fitted with a TV camera, also inserted through one of the openings.

That view, however, is limited. With the new system, "instead of having a view of just what the camera's seeing, we'll show the surgeon in full 3D exactly where she is in the anatomy," said Professor Grimson. So far the researchers have demonstrated this on plastic skulls and other test objects.

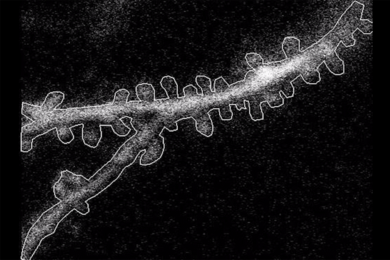

The registration software also has applications in non-surgical medical studies. For example, it is proving useful in a Brigham study that tracks the evolution of MS lesions in patients; the resulting data can help determine how effective various drugs are. In this NIH-funded study, the brains of 50 MS patients were scanned with MRI more than 20 times each over the course of a year, and the scans were stored in a computer. By comparing each patient's scans over time, clinicians can track the growth of MS lesions.

To do so, however, they must first line up each of the scans. And that's difficult, because each is taken from a slightly different position. To make matters worse, patients can have hundreds of tiny MS lesions, so "the scans must be aligned to within one millimeter for accuracy," Mr. Ettinger said.
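One way to read the one-millimeter requirement is as an acceptance test on the error that remains after registration. The article does not say how the Brigham team measured that error. The minimal sketch below assumes we have matching landmark positions, in millimeters, from an aligned follow-up scan and from a baseline scan. It reports both the root-mean-square and the worst-case distance, and it accepts the alignment only if the worst landmark is within tolerance. The helper names, the landmarks, and the worst-case criterion are all hypothetical choices made for illustration.

```python
import math


def alignment_error_mm(aligned, reference):
    """Return (rms, worst) distance in mm between paired 3D landmarks.

    Hypothetical helper: assumes aligned[i] and reference[i] mark the same
    anatomical point, with coordinates already expressed in millimeters.
    """
    if len(aligned) != len(reference) or not aligned:
        raise ValueError("need the same, non-zero number of landmarks in each scan")
    dists = [math.dist(a, r) for a, r in zip(aligned, reference)]
    rms = math.sqrt(sum(d * d for d in dists) / len(dists))
    return rms, max(dists)


def within_tolerance(aligned, reference, tol_mm=1.0):
    """True only if even the worst landmark lies within tol_mm (assumed criterion)."""
    _, worst = alignment_error_mm(aligned, reference)
    return worst <= tol_mm


# Usage with made-up landmark coordinates (mm).
reference = [(10.0, 20.0, 30.0), (40.0, 25.0, 35.0), (22.0, 60.0, 18.0)]
good = [(10.3, 20.0, 30.0), (40.0, 25.4, 35.0), (22.0, 60.0, 18.2)]
bad = [(11.5, 20.0, 30.0), (40.0, 25.0, 35.0), (22.0, 60.0, 18.0)]
print(within_tolerance(good, reference))  # True
print(within_tolerance(bad, reference))   # False
```

A worst-case test is stricter than an RMS test: with hundreds of tiny lesions, a single badly placed region could matter even when the average error looks small.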

Using the new software, however, the researchers were able to register all the scans for one patient quickly and precisely. "The software gives clinicians a tool to do this work automatically," Professor Grimson said.

The registration system, for which the team has applied for a patent, is based on years of MIT research in computer vision (one application of which is target recognition for the military). "It's a tremendous opportunity to enable technology to move from one area into a very different domain," said Professor Grimson, who is currently spending his sabbatical working on the project.

The overall project is one of at least five approaches to providing doctors with enhanced views of surgical sites. Researchers at New York University Medical Center, the Hospital for Sick Children in Toronto, Guy's Hospital in London, and INRIA in Nice, France, are developing other systems.

The MIT/Brigham/TASC work is supported at the Brigham and at TASC by those organizations' internal funds. The MIT researchers are currently working "mostly on spec," Professor Grimson said, with some funding from ARPA and the NIH.

The project is only about two years old. Said Professor Grimson: "We're moving in a hurry and having a lot of fun."

A version of this article appeared in MIT Tech Talk on April 26, 1995.