MIT researchers have developed a biomedical imaging system that could ultimately replace a $100,000 piece of lab equipment with components that cost just hundreds of dollars.

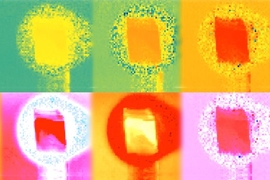

The system uses a technique called fluorescence lifetime imaging, which has applications in DNA sequencing and cancer diagnosis, among other things. So the new work could have implications for both biological research and clinical practice.

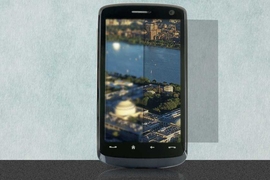

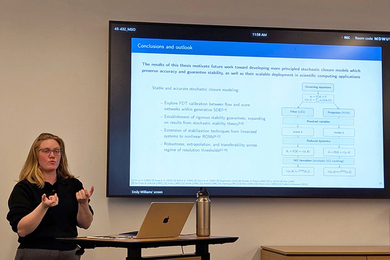

“The theme of our work is to take the electronic and optical precision of this big expensive microscope and replace it with sophistication in mathematical modeling,” says Ayush Bhandari, a graduate student at the MIT Media Lab and one of the system’s developers. “We show that you can use something in consumer imaging, like the Microsoft Kinect, to do bioimaging in much the same way that the microscope is doing.”

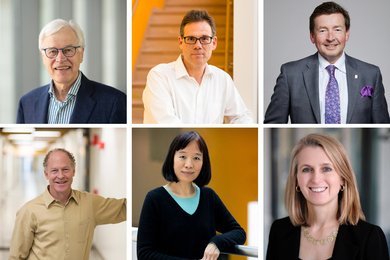

The MIT researchers reported the new work in the Nov. 20 issue of the journal Optica. Bhandari is the first author on the paper, and he’s joined by associate professor of media arts and sciences Ramesh Raskar and Christopher Barsi, a former research scientist in Raskar’s group who now teaches physics at the Commonwealth School in Boston.

Fluorescence lifetime imaging, as its name implies, depends on fluorescence, or the tendency of materials known as fluorophores to absorb light and then re-emit it a short time later. For a given fluorophore, interactions with other chemicals will shorten the interval between the absorption and emission of light in a predictable way. Measuring that interval — the “lifetime” of the fluorescence — in a biological sample treated with a fluorescent dye can reveal information about the sample’s chemical composition.
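To make the idea of a "lifetime" concrete, here is a minimal sketch that assumes the textbook single-exponential model, in which fluorescence intensity falls off as exp(-t / lifetime) after excitation. The model, the function name `estimate_lifetime`, and the 4 ns and 2 ns sample values are illustrative assumptions, not details from the researchers' paper.

```python
import math

def estimate_lifetime(times_ns, intensities):
    """Estimate a lifetime (ns) by fitting a straight line to log-intensity.

    Assumes a single-exponential decay, so log(I) = -t / lifetime + const.
    """
    logs = [math.log(v) for v in intensities]
    n = len(times_ns)
    mean_t = sum(times_ns) / n
    mean_y = sum(logs) / n
    slope = (
        sum((t - mean_t) * (y - mean_y) for t, y in zip(times_ns, logs))
        / sum((t - mean_t) ** 2 for t in times_ns)
    )
    return -1.0 / slope

# Hypothetical decay curves sampled every 0.5 ns.
times_ns = [0.5 * i for i in range(20)]
free_dye = [math.exp(-t / 4.0) for t in times_ns]      # assumed 4 ns lifetime
quenched_dye = [math.exp(-t / 2.0) for t in times_ns]  # shortened to 2 ns

free_estimate = estimate_lifetime(times_ns, free_dye)
quenched_estimate = estimate_lifetime(times_ns, quenched_dye)
print(f"free: {free_estimate:.2f} ns, quenched: {quenched_estimate:.2f} ns")
```

The shorter estimate for the second curve is the kind of shift that, in a real sample, would signal an interaction with another chemical.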

In traditional fluorescence lifetime imaging, the imaging system emits a burst of light, much of which is absorbed by the sample, and then measures how long it takes for returning light particles, or photons, to strike an array of detectors. To make the measurement as precise as possible, the light bursts are extremely short.

The fluorescence lifetimes pertinent to biomedical imaging are in the nanosecond range. So traditional fluorescence lifetime imaging uses light bursts that last just picoseconds, or thousandths of nanoseconds.

Blunt instrument

Off-the-shelf depth sensors like the Kinect, however, use light bursts that last tens of nanoseconds. That’s fine for their intended purpose: gauging objects’ depth by measuring the time it takes light to reflect off of them and return to the sensor. But it would appear to be too coarse-grained for fluorescence lifetime imaging.
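The depth measurement itself is simple arithmetic: light travels to the object and back, so depth is half the round-trip time multiplied by the speed of light. The sketch below illustrates only that relationship; the function names are hypothetical and it does not model how any particular sensor works internally.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0

def depth_from_round_trip(round_trip_s):
    """Depth in meters for a measured round-trip time in seconds."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0

def round_trip_for_depth(depth_m):
    """Round-trip time in seconds for an object at the given depth."""
    return 2.0 * depth_m / SPEED_OF_LIGHT_M_PER_S

# An object 2.5 meters away returns light after roughly 17 nanoseconds.
round_trip_ns = round_trip_for_depth(2.5) * 1e9
recovered_depth_m = depth_from_round_trip(round_trip_ns * 1e-9)
print(f"round trip: {round_trip_ns:.2f} ns, depth: {recovered_depth_m:.2f} m")
```

Round-trip times at room scale are therefore tens of nanoseconds, comparable to the burst length, while fluorescence lifetimes are a few nanoseconds or less.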

The Media Lab researchers, however, extract additional information from the light signal by subjecting it to a Fourier transform. The Fourier transform is a technique for breaking signals — optical, electrical, or acoustical — into their constituent frequencies. A given signal, no matter how irregular, can be represented as the weighted sum of signals at many different frequencies, each of them perfectly regular.
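As a rough illustration of that decomposition, the following sketch builds a signal from two sine waves and uses a plain discrete Fourier transform to recover how much of each frequency it contains. The signal, its amplitudes, and the helper name `dft` are made up for the example and have nothing to do with the researchers' data.

```python
import cmath
import math

def dft(samples):
    """Naive discrete Fourier transform of a list of real samples."""
    n = len(samples)
    return [
        sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]

# A signal made of 3 cycles at amplitude 1.0 plus 7 cycles at amplitude 0.5.
n = 64
signal = [
    1.0 * math.sin(2 * math.pi * 3 * t / n) + 0.5 * math.sin(2 * math.pi * 7 * t / n)
    for t in range(n)
]

spectrum = dft(signal)
# Scale each bin so it reads as the amplitude of the matching sine wave.
amplitudes = [2 * abs(c) / n for c in spectrum[: n // 2]]

for k, a in enumerate(amplitudes):
    if a > 1e-6:
        print(f"frequency bin {k}: amplitude {a:.3f}")
```

Only bins 3 and 7 carry noticeable weight, which is the "weighted sum of regular signals" picture in miniature.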

The Media Lab researchers represent the optical signal returning from the sample as the sum of 50 different frequencies. Some of those frequencies are higher than that of the signal itself, which is how they are able to recover information about fluorescence lifetimes shorter than the duration of the emitted burst of light.

For each of those 50 frequencies, the researchers measure the difference in phase between the emitted signal and the returning signal. If an electromagnetic wave can be thought of as a regular up-and-down squiggle, phase is the degree of alignment between the troughs and crests of one wave and those of another. In fluorescence imaging, phase shift also carries information about the fluorescence lifetime.
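One standard way to see how phase encodes lifetime is the single-exponential relation used in frequency-domain fluorescence measurements: at modulation frequency f, the emitted light lags the excitation by a phase of arctan(2πf × lifetime). The article does not spell out the researchers' exact model, so treat the sketch below, including the 40 MHz frequency and the function names, as an assumption-laden illustration.

```python
import math

def phase_lag(freq_hz, lifetime_s):
    """Phase lag (radians) of fluorescence behind excitation modulated at freq_hz.

    Assumes a single-exponential decay with the given lifetime.
    """
    return math.atan(2.0 * math.pi * freq_hz * lifetime_s)

def lifetime_from_phase(freq_hz, phase_rad):
    """Invert the relation above to recover a lifetime in seconds."""
    return math.tan(phase_rad) / (2.0 * math.pi * freq_hz)

freq_hz = 40e6  # hypothetical modulation frequency
lags = {}
for lifetime_ns in (1.0, 2.0, 4.0):
    lags[lifetime_ns] = phase_lag(freq_hz, lifetime_ns * 1e-9)
    print(f"lifetime {lifetime_ns} ns -> phase lag {lags[lifetime_ns]:.3f} rad")

recovered_ns = lifetime_from_phase(freq_hz, lags[4.0]) * 1e9
```

Longer lifetimes produce larger lags, so a measured phase shift can be turned back into a lifetime estimate.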

Not all of the light that strikes the biological sample is absorbed; some of it is reflected back. The MIT researchers’ system takes the measurements of incoming light and fits them to a mathematical model of the overlapping intensity profiles of both reflected and re-emitted light.

Once the system has deduced the intensity profile of the reflected light, it can calculate the distance between the emitter and the sample. So unlike conventional fluorescence lifetime imaging, the researchers’ approach doesn’t require distance calibration.
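The following sketch shows, under simplifying assumptions, how both distance and lifetime might be recovered from phase measurements at 50 frequencies. It assumes the phase at each frequency is the sum of a travel-time term, 2 × distance × ω / c, and a single-exponential fluorescence term, arctan(ω × lifetime). The model, the 1 to 50 MHz frequencies, the noise level, and every name here are hypothetical; the sketch also ignores phase wrapping and is not the researchers' actual algorithm.

```python
import math
import random

C = 299_792_458.0  # speed of light, m/s

def model_phase(omega, distance_m, lifetime_s):
    """Assumed phase: round-trip travel delay plus fluorescence lag."""
    return 2.0 * distance_m * omega / C + math.atan(omega * lifetime_s)

def fit_distance_and_lifetime(omegas, phases,
                              tau_min=0.1e-9, tau_max=20e-9, steps=2000):
    """Grid-search the lifetime; solve for distance in closed form at each step."""
    slopes = [2.0 * w / C for w in omegas]
    slope_sq = sum(a * a for a in slopes)
    best = None
    for i in range(steps + 1):
        tau = tau_min + (tau_max - tau_min) * i / steps
        # Remove the fluorescence lag; what remains should be linear in distance.
        rest = [p - math.atan(w * tau) for w, p in zip(omegas, phases)]
        d = sum(a * r for a, r in zip(slopes, rest)) / slope_sq
        err = sum((r - a * d) ** 2 for a, r in zip(slopes, rest))
        if best is None or err < best[0]:
            best = (err, d, tau)
    return best[1], best[2]

# Synthetic measurements: 50 frequencies, 2.5 m distance, 4 ns lifetime.
random.seed(0)
omegas = [2.0 * math.pi * k * 1e6 for k in range(1, 51)]
true_d, true_tau = 2.5, 4e-9
phases = [model_phase(w, true_d, true_tau) + random.gauss(0.0, 0.01)
          for w in omegas]

est_d, est_tau = fit_distance_and_lifetime(omegas, phases)
print(f"distance ~ {est_d:.3f} m, lifetime ~ {est_tau * 1e9:.2f} ns")
```

Because the travel term grows linearly with frequency while the fluorescence term levels off, the two unknowns can be separated from the same set of measurements, which is why no separate distance calibration is needed in this toy version.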

Sample size

According to Bhandari, some of his colleagues were skeptical that the returning light signal contained enough information to produce accurate models of the intensity profiles. “They were not convinced that the precision of Kinect-like sensors is enough,” he says. “But lifetime and distance are two numbers. If you have two numbers, then 50 measurements is a lot. The desired information is two points, but the measurement is 50 points, so you have a ratio of one to 25. It’s enough to give you the intuition that it should be workable.”

The depth sensors that the researchers used in their experiments (the Kinect and others) had arrays of roughly 20,000 light detectors each, and the most accurate results came when the detector was 2.5 meters away from the biological sample. That setup doesn’t afford the image resolution that existing fluorescence lifetime imaging microscopes do. Denser arrays of detectors, and optics that better control the emission and gathering of light, would push the cost of the researchers’ system beyond the $100 that a Kinect costs, but the result still shouldn’t be nearly as expensive as current fluorescence lifetime imaging systems.

“If I had one of these devices, what I would do is just go looking around the world at stuff,” says Adam Cohen, a professor of chemistry and chemical biology and of physics at Harvard University. “There might be all sorts of interesting things. It’s a new modality for looking around the world. It’s just like, humans can’t see polarization in the light — we only see color — and there’s all this structure that insects can see because they can see polarization that we’re just blind to. To my knowledge, there’s no living organism that can see excited-state lifetime, and so there’s this contrast modality that has not been explored by life that nonetheless might be very interesting. So I’d look at it.”