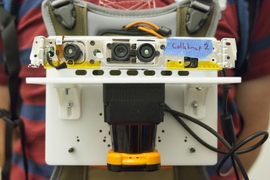

The prototype sensor included a stripped-down Microsoft Kinect camera (top) and a laser rangefinder (bottom), which looks something like a camera lens seen side-on.

Photo: Patrick Gillooly

Connected to the array of sensors is a handheld pushbutton device that the wearer can use to annotate the map. In the prototype system, depressing the button simply designates a particular location as a point of interest. But the researchers envision that emergency responders could use a similar system to add voice or text tags to the map — indicating, say, structural damage or a toxic spill.

“The operational scenario that was envisioned for this was a hazmat situation where people are suited up with the full suit, and they go in and explore an environment,” says Maurice Fallon, a research scientist in MIT’s Computer Science and Artificial Intelligence Laboratory, and lead author on the new paper. “The current approach would be to textually summarize what they had seen afterward — ‘I went into this room on the left, I saw this, I went into the next room,’ and so on. We want to try to automate that.”

Fallon is joined on the paper by professors John Leonard, of the Department of Mechanical Engineering, and Seth Teller, of the Department of Electrical Engineering and Computer Science (EECS), and by EECS grad students Hordur Johannsson and Jonathan Brookshire.

Shaky aim

The new work builds on previous research on systems that enable robots to map their environments. But adapting the system so that a human could wear it required a number of modifications.

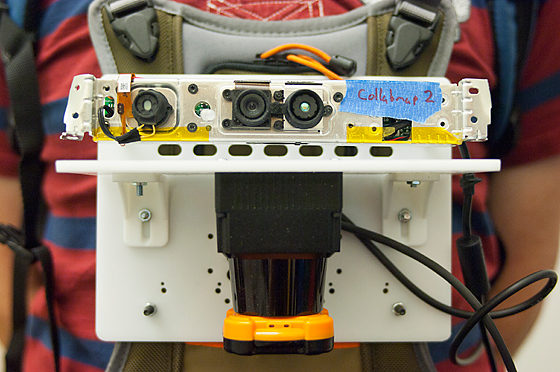

Maurice Fallon, a research scientist in MIT’s Computer Science and Artificial Intelligence Laboratory, demonstrates how the sensor is worn.

Photo: Patrick Gillooly

So in addition to the rangefinder, the researchers also equipped their sensor platform with a cluster of accelerometers and gyroscopes, a camera, and, in one group of experiments, a barometer (changes in air pressure proved to be a surprisingly good indicator of floor transitions). The gyroscopes could infer when the rangefinder was tilted — information the mapping algorithms could use in interpreting its readings — and the accelerometers provided some information about the wearer’s velocity and very good information about changes in altitude.
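To make the roles of the barometer and the gyroscopes concrete, here is a minimal sketch in Python. It is not the researchers' code. The function names, the 3-meter storey height, and the use of the standard-atmosphere barometric formula are all assumptions chosen for illustration. The tilt correction is the simplest possible case, a single beam pointing along the direction of tilt.

```python
import math

SEA_LEVEL_PA = 101325.0  # standard-atmosphere reference pressure
FLOOR_HEIGHT_M = 3.0     # assumed storey height; not a figure from the article


def pressure_to_altitude(pressure_pa, reference_pa=SEA_LEVEL_PA):
    """Approximate altitude in meters using the international barometric formula."""
    return 44330.0 * (1.0 - (pressure_pa / reference_pa) ** (1.0 / 5.255))


def floor_offset(pressure_pa, start_pressure_pa, floor_height_m=FLOOR_HEIGHT_M):
    """Floors climbed (+) or descended (-) relative to the starting reading."""
    climb_m = pressure_to_altitude(pressure_pa) - pressure_to_altitude(start_pressure_pa)
    return round(climb_m / floor_height_m)


def horizontal_range(measured_range_m, pitch_rad):
    """Project a range reading from a tilted scanner onto the horizontal plane.

    Only valid for a beam pointing along the tilt direction; a full scan
    would need a per-beam correction.
    """
    return measured_range_m * math.cos(pitch_rad)
```

Near sea level, air pressure falls by roughly 12 pascals per meter of climb, so a drop of about 36 pascals corresponds to one assumed storey. A real system would also have to filter out sensor noise and pressure changes caused by weather or ventilation.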

Adjudicating the data from all the other sensors is the camera. Every few meters, the camera takes a snapshot of its surroundings, and software extracts a couple of hundred visual features from the image — particular patterns of color, or contours, or inferred three-dimensional shapes. Each batch of features is associated with a particular location on the map.

Seeing is believing

If the wearer returns to an area already visited, the system’s location estimate may have drifted. Its compensation for the tilt of the rangefinder might not have been perfect, so that a wall now looks several feet farther away than it did, or its inference of position from accelerometer data might be off. In such cases, a fresh snapshot and a comparison of its visual features with those already stored can help correct the location estimate.
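The idea of matching a fresh snapshot against stored ones can be sketched in a few lines of Python. This is a toy illustration, not the researchers' algorithm. Visual features are reduced to string labels, similarity is a simple set overlap (Jaccard index), and the corrected estimate just snaps to the stored location. The `Keyframe` type, the function names, and the 0.5 threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Keyframe:
    position: tuple       # (x, y) map location where the snapshot was taken
    features: frozenset   # stand-in for a few hundred visual features


def jaccard(a, b):
    """Fraction of features shared between two snapshots (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def find_revisited_keyframe(snapshot_features, keyframes, min_similarity=0.5):
    """Return the stored keyframe most similar to the new snapshot, or None."""
    best, best_score = None, min_similarity
    for keyframe in keyframes:
        score = jaccard(snapshot_features, keyframe.features)
        if score >= best_score:
            best, best_score = keyframe, score
    return best


def corrected_position(estimate, snapshot_features, keyframes):
    """Snap a drifting estimate to a recognized location; otherwise keep it."""
    match = find_revisited_keyframe(snapshot_features, keyframes)
    return match.position if match else estimate


# Usage: two stored snapshots, then a new one that mostly matches the second.
stored = [
    Keyframe((0.0, 0.0), frozenset({"a", "b", "c", "d"})),
    Keyframe((5.0, 2.0), frozenset({"w", "x", "y", "z"})),
]
fixed = corrected_position((5.6, 2.9), frozenset({"x", "y", "z", "q"}), stored)
```

In practice, a mapping system would more likely compare numeric feature descriptors and use a recognized location as a constraint when re-optimizing the whole map, rather than overwriting a single position.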

The prototype of the sensor platform consists of a handful of devices attached to a sheet of hard plastic about the size of an iPad, which is worn on the chest like a backward backpack. The only sensor whose volume can’t be reduced significantly is the rangefinder, so in principle, the whole system could be shrunk to about the size of a coffee mug.

Wolfram Burgard, a professor of computer science at the University of Freiburg in Germany, says that the MIT researchers’ work is on the general topic of SLAM, or simultaneous localization and mapping. “Originally, this came out as a problem of robotics,” Burgard says. “This idea of having a SLAM system that is attached to a human’s body, for figuring out where it is, is actually innovative and pretty useful. For first responders, a technology like this one might be highly relevant.”

“With a robot, we typically assume that the robot lives in a plane,” Burgard continues. “What they definitely tackled is the problem of height and dealing with staircases, as the human walks up and down. The sensors are not always straight, because the body shakes. These are problems that they tackle in their approach, and where it actually goes beyond the standard 2-D SLAM.”

Both the U.S. Air Force and the Office of Naval Research supported the work.