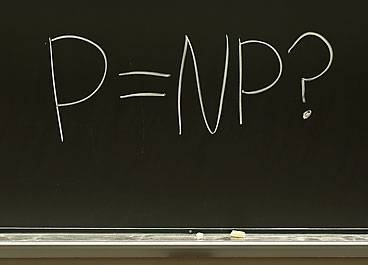

On Friday, Aug. 6, a mathematician at HP Labs named Vinay Deolalikar sent an e-mail to a host of other researchers with a 103-page attachment that purported to answer the most important outstanding question in computer science. That question is whether P = NP, and answering it will earn you $1 million from the Clay Mathematics Institute.

Last fall, MIT News published a fairly detailed explanation of what P = NP means. But roughly speaking, P is a set of relatively easy problems, NP includes a set of incredibly hard problems, and if they’re equal, then a large number of computer science problems that seem to be incredibly hard are actually relatively easy. Problems in NP include the factoring of large numbers, the selection of an optimal route for a traveling salesman, and the so-called 3-SAT problem, in which you must find values that satisfy triplets of logical conditions. For instance, is it possible to contrive an attendance list for a party that satisfies the triplet (Alice OR Bob AND Carol) AND (David AND Ernie AND NOT Alice)? (Yes: Bob, Carol, David, and Ernie were there, but Alice wasn’t.) Interestingly, the related 2-SAT problem — which instead uses pairs of conditions, like (Alice OR Ernie) — is in the set of easy problems, P, as is the closely related XOR-SAT problem.

Most computer scientists believe that P doesn’t equal NP, and that’s what Deolalikar claimed to have proved. But by the following Monday, despite being on vacation in the Mediterranean and having time only to glance through the proof, MIT Associate Professor of Electrical Engineering and Computer Science Scott Aaronson had announced on his blog that he would mortgage his house and chip in another $200,000 if Deolalikar’s proof was correct. Last week, Aaronson took a few minutes to answer three questions about P and NP.

Q. Has the proof now been shown conclusively to be wrong?

A. I would say yeah. It was clear a couple days ago that there was a very serious gap in the statistical-physics part of the argument. It was not clear at all that the argument for showing why an NP-complete problem like 3-SAT was hard wouldn’t also show that problems like XOR-SAT are hard. Now, XOR-SAT is a variant of this satisfiability problem, which is known to be in P, which has an efficient solution. So if you’re proving that a problem is hard, but your proof could also be adapted to show that an easy problem is hard, then your proof must be fallacious: it proves too much. That’s the first check that people look for when someone announces a proof of P not equal to NP. Why doesn’t it also work for the easy problems?

The problem with saying that the thing has been conclusively refuted is that whenever anyone points to a problem like this, Vinay Deolalikar has been tending to respond, “Oh, yeah, sure, well, I’m going to address that in my next draft.” So it’s kind of a moving target. But I think it’s absolutely clear right now that at least the existing version does not solve the problem and furthermore wouldn’t solve the problem without some very, very major new ideas.

Q. Why were you so certain that there was a flaw in the proof?

A. P vs. NP is an absolutely enormous problem, and one way of seeing that is that there are already vastly, vastly easier questions that would be implied by P not equal to NP but that we already don’t know how to answer. So basically, if someone is claiming to prove P not equal to NP, then they’re sort of jumping 20 or 30 nontrivial steps beyond what we know today. So the first thing you look for is, What about steps one, two, and three? Can he explain even the easier questions, how he’s answering those? So I looked at the manuscript, and I didn’t see that.

The other check is the one that I already mentioned, which is, Why does the proof fail for variants of NP-complete problems which are known to be easy? What Deolalikar was doing is, he’s trying to argue that 3-SAT is hard by looking at its statistical properties. The problem is that 2-SAT and XOR-SAT, the problems that are easy, have very, very similar statistical properties, so it did not look like something that could distinguish the hard problems from the easy ones.

We have very strong reasons to believe that these problems cannot be solved without major — enormous — advances in human knowledge. So you look at the paper and you don’t see that it’s commensurate with the scale of the problem that it’s claiming to solve. This is not a problem that’s going to be solved by just combining or pushing around the ideas that we already have.

Q. Given that most people are pretty confident that P does not equal NP, what would the proof really do for us?

A. Yes, almost all of us believe already that P is not equal to NP. But this is one of those things where it’s not so much the destination as the journey. It’s the massive amount of new understanding of computation that’s going to be needed to prove such a statement. What are we trying to prove? That for solving all these natural optimization problems, or these search problems, or a proof of a theorem, finding the best schedule for airlines, breaking cryptographic codes — all these different things, that there’s no algorithm, no matter how clever, that’s going to solve them feasibly. So in order to prove such a thing, a prerequisite to it is to understand the space of all possible efficient algorithms. That is an unbelievably tall order. So the expectation is that on the way to proving such a thing, we’re going to learn an enormous amount about efficient algorithms, beyond what we already know, and very, very likely discover new algorithms that will likely have applications that we can’t even foresee right now.

Often in the history of theoretical computer science, the same ideas that you use to prove that something’s impossible can then be turned around to show that something else is possible, and vice versa. The simplest example of that is in cryptography, where you show some problem is hard to solve, and that gives you a code that is useful. But there are many other examples.

After glancing over a 100-page proof that claimed to solve the biggest problem in computer science, Scott Aaronson bet his house that it was wrong. Why?

Publication Date: